While you’re admiring the latest cloud tech, don’t forget that humans have been debugging pipelines at least since the Romans built the aqueducts. Any good plumber can give you some hard-won tips on managing data pipelines effectively, insights that might save your career from going down the drain.

Your overalls are cleaner when you work on new construction

Green fields smell sweet, and a nice long design phase can give you a rosy view of your project. The real world is a bit more pungent. That’s why a key goal of DataOps is to expose your best-laid plans to real-life urgencies faster and initiate that crucial feedback loop sooner. With DataOps, you define a framework and a set of best practices to deal with real-world changes and crises. It’s about tapping into that feedback loop earlier to keep your process reliable — and relevant.

It’s not done until you’ve run the water through it

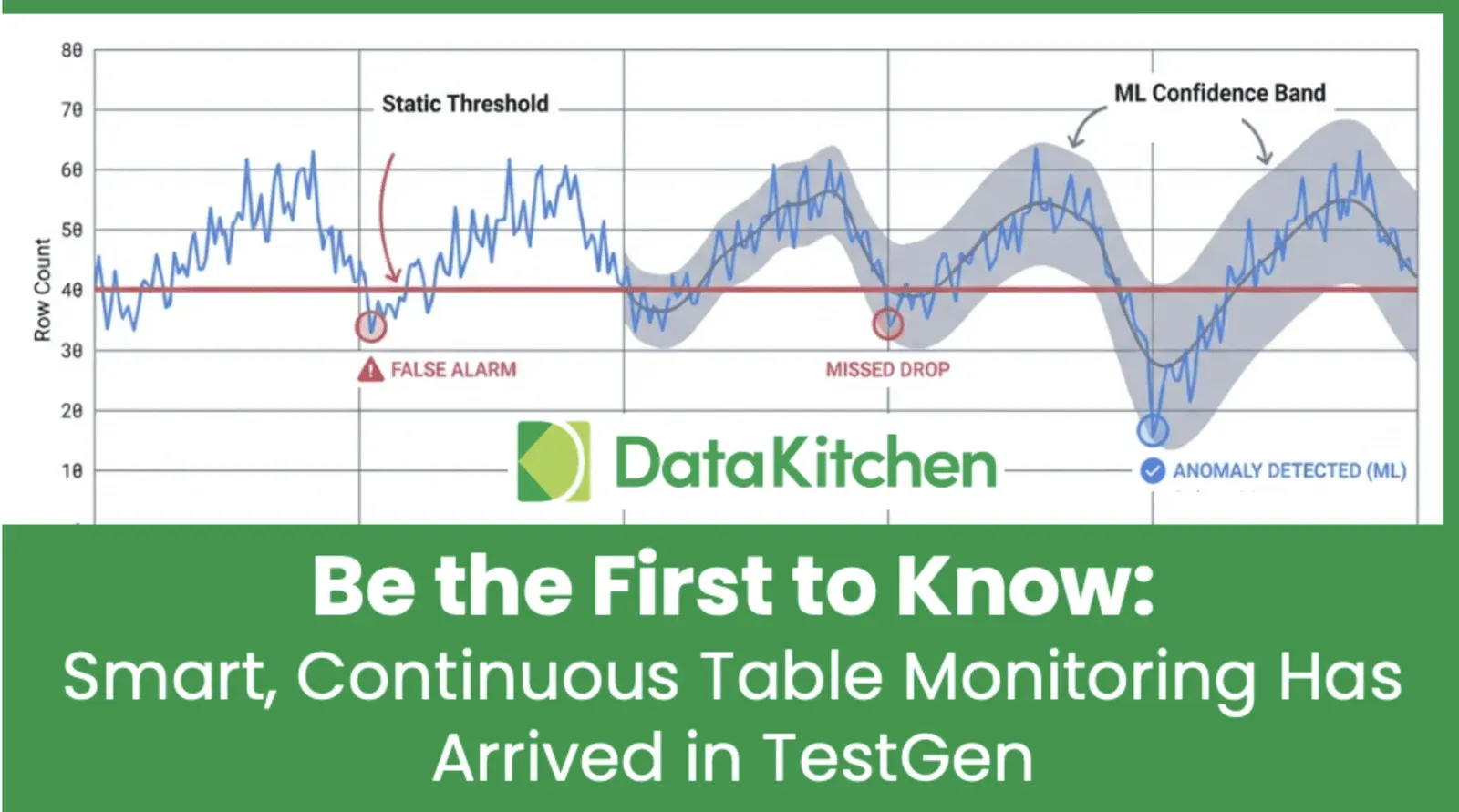

Good testing, like exercise and veganism, is the subject of fervent talk and half-hearted action. There are lots of reasons good people test inadequately. Deadlines are high-profile, and details go unnoticed. The stakes may be high, but the reckoning seems distant. It’s possible, and often irresistible, to move on to the next emergency before the last ingenious solution has been proven out. But water is inexorable, and plumbers quickly face the consequences of their mistakes. Testing is intrinsic to the job.

So you’ve checked that it works. Are you done? Of course not. Plumbers don’t just check the water level; they may install a low-water cutoff switch or a relief valve so the system responds to changing inputs. They might install a leak detector that monitors for problems automatically and sends alerts while the house is unattended. By automating your tests, then running them with each refresh, you build in safety valves for your data pipeline.
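What might a safety valve look like in practice? Here is a minimal sketch in Python, assuming each refresh hands you a list of dictionary rows. The names `Check`, `CHECKS`, `run_checks`, and `refresh`, along with the `id` and `amount` fields, are invented for illustration and do not come from any particular tool.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Row = Dict[str, object]


@dataclass
class Check:
    """A named test that inspects a whole batch of rows."""
    name: str
    predicate: Callable[[List[Row]], bool]


# Hypothetical checks; swap in whatever matters for your own data.
CHECKS = [
    Check("has_rows", lambda rows: len(rows) > 0),
    Check("no_null_ids", lambda rows: all(r.get("id") is not None for r in rows)),
    Check("amounts_non_negative", lambda rows: all(r.get("amount", 0) >= 0 for r in rows)),
]


def run_checks(rows: List[Row], checks: List[Check]) -> List[str]:
    """Return the names of the checks that failed, in order."""
    return [c.name for c in checks if not c.predicate(rows)]


def refresh(rows: List[Row]) -> List[Row]:
    """Pass the batch downstream only if every check holds."""
    failures = run_checks(rows, CHECKS)
    if failures:
        # The relief valve: stop the flow and say why.
        raise ValueError(f"refresh blocked, failed checks: {failures}")
    return rows
```

Because the checks live in one list and run on every call to `refresh`, adding a new test is a one-line change, and nobody has to remember to run it.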

Don’t over-tighten your fittings

Some lessons you learn the hard way. Materials expand over time, and today’s perfect fit can become tomorrow’s stuck coupling or busted pipe. In data engineering, too, systems fail when tolerances are too tight. The buffer you schedule between the end of one process and the kick-off of the next needs to account for delays that inevitably crop up. Your data types must strike a balance between tight checks and resilient operations. Every process has dimensions of variability you can’t control. Expect perfection, and you’ll guarantee it won’t happen.
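To make "tolerance" concrete, here is a rough Python sketch of both ideas: a parser that forgives harmless formatting variation, and a scheduling decision with a grace period instead of a hard deadline. The function names, the three return values, and the choice of what counts as "harmless" are all assumptions made for the example.

```python
from datetime import datetime, timedelta
from typing import Optional


def parse_amount(raw: object) -> Optional[float]:
    """Tolerate stray whitespace and thousands separators.

    Returns None for anything float() cannot parse, so the caller
    can count or quarantine bad values instead of crashing.
    """
    if raw is None:
        return None
    text = str(raw).strip().replace(",", "")
    try:
        return float(text)
    except ValueError:
        return None


def next_step(upstream_done: bool, scheduled_start: datetime,
              now: datetime, grace: timedelta) -> str:
    """Decide what a downstream job should do right now."""
    if upstream_done:
        return "run"
    if now <= scheduled_start + grace:
        return "wait"   # a late upstream is normal; give it some slack
    return "alert"      # slack exhausted; a person needs to look
```

The grace period is the loose fitting: a ten-minute delay upstream becomes a short wait rather than a failed run, while a genuinely stuck job still raises an alert.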

Don’t hang junk off your pipes

Every plumber has a story about the folks who found it convenient to hang their wardrobe from the water pipes, right up until they flooded the basement. Data engineers take similar risks when they over-complicate their code or tack on extraneous processes at the wrong points in a critical-path build. Organize your data flows by function and criticality. You want a simple structure so people can immediately jump in and orient themselves. Your goal isn’t to intimidate people or squeeze the most complexity into the smallest space. Even if it looks simple now, it’s going to get more complicated over time, so don’t create an unwieldy mess.

Read the plans, but believe the piping

When maintaining a home, blueprints and schematics can give you lots of information, some of which is even accurate. Plumbing “as-built” can vary greatly from “as-designed,” and the only reliable way to know what you’re working with is to go to the pipes. As data engineers, we strive to divide our grumbling equally between the awful documentation we must decipher and the hassle of documentation we have to produce. It is even easier to skimp on documentation than testing. The gap between specs and reality starts to grow from the day the specs are defined.

There are two solutions to this. One is to set clear rules that documentation must be thorough and up-to-date. These rules will be carefully filed away with your documentation. The alternative is to acknowledge that the primary source of your docs will always be your data structures and code. The strategy: lower the friction to document your team’s work. If you encourage your team to comment consistently, you can rely on tools to automate daily refreshes of your knowledge base for everybody else. Details will be more up-to-date. Gaps will become readily apparent. Your documentation efforts, like your business, will be data-driven.
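As one illustration of the second approach, the sketch below builds a small catalog straight from the docstrings on pipeline functions, using Python’s standard `inspect` module. The pipeline steps `load_orders` and `clean_orders` and the helper `build_catalog` are hypothetical; a real team would more likely lean on an existing documentation generator, but the principle is the same.

```python
import inspect
from typing import Callable, Iterable


def load_orders():
    """Read raw orders from the landing area."""


def clean_orders():
    pass  # deliberately undocumented, to show how gaps surface


def build_catalog(steps: Iterable[Callable]) -> str:
    """Return one line per step: its name and docstring summary."""
    lines = []
    for step in steps:
        doc = inspect.getdoc(step)
        summary = doc.splitlines()[0] if doc else "MISSING DOCSTRING"
        lines.append(f"{step.__name__}: {summary}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_catalog([load_orders, clean_orders]))
```

Run on a schedule, a script like this keeps the knowledge base in step with the code, and the missing entries are impossible to overlook.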

Tell the residents before you shut off the water on Saturday night

Water and mediocrity flow downhill. Good plumbers realize how much people depend on their work. You don’t have to tell a plumber that sh*t happens. But transparency and good communication will get you out of many jams. People appreciate knowing what’s happening and how it might impact their work. Once you realize that part of your work is managing downstream expectations, you’ll get more of the credit you deserve.

Don’t bite your nails on the job

Data engineers are human, and human frailties are part of the systems you build. Any pipeline that expects people to get it right every time is not just a source of stress – it’s structurally deficient. If you build out systemic solutions to human error, you will have better pipelines and a more engaged, effective team.

There’s a different issue here for plumbers. I won’t get into it. Either way, if you follow the rules above, you will be flushed with success!