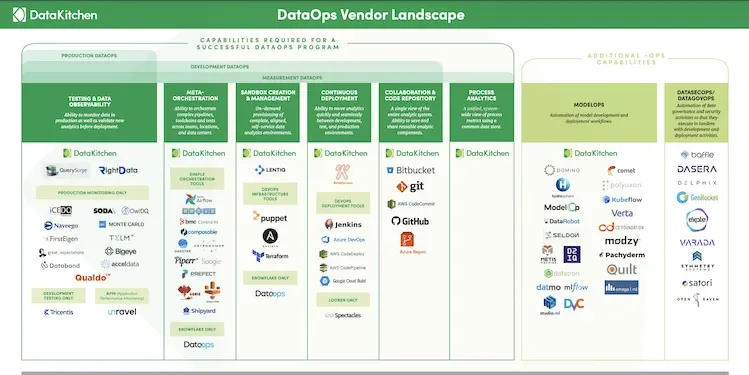

Download the 2021 DataOps Vendor Landscape here. Read the complete blog below for a more detailed description of the vendors and their capabilities.

DataOps is a hot topic in 2021. This is not surprising given that DataOps enables enterprise data teams to generate significant business value from their data. Companies that implement DataOps find that they are able to reduce cycle times from weeks (or months) to days, virtually eliminate data errors, increase collaboration, and dramatically improve productivity.

As a result, the number of vendors marketing DataOps capabilities has grown in step with the popularity of the practice. To date, we count over 100 companies in the DataOps ecosystem. However, the rush to rebrand existing products with a DataOps message has created some confusion in the marketplace. Because DataOps is such a new category, both overly narrow and overly broad definitions abound. It is easy to get overwhelmed when trying to evaluate different solutions and determine whether they will help you achieve your DataOps goals.

To clear up the confusion, we’ve created a DataOps vendor landscape, organized by the six key capabilities required for DataOps success.

- Meta-Orchestration

- Testing and Data Observability

- Sandbox Creation and Management

- Continuous Deployment

- Collaboration and Sharing

- Process Analytics

We have also included vendors for the specific use cases of ModelOps, MLOps, DataGovOps, and DataSecOps, which apply DataOps principles to machine learning, AI, data governance, and data security operations.

Please let us know if we have forgotten anyone or if you have any comments (marketing@www.datakitchen.io).

Meta-Orchestration

DataOps needs a directed graph-based workflow that contains all the data access, integration, model and visualization steps in the data analytic production process. It orchestrates complex pipelines, toolchains, and tests across teams, locations, and data centers.
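The directed-graph idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any vendor below implements orchestration: the step names (`access`, `integrate`, `model`, `test_model`, `visualize`) and the `run_pipeline` helper are hypothetical, and the sketch assumes Python 3.9+ for the standard-library `graphlib` module. Each step runs only after all of its upstream steps, and the run halts at the first failing step (including a test step), so nothing after it executes.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Set

def run_pipeline(graph: Dict[str, Set[str]],
                 steps: Dict[str, Callable[[], bool]]) -> List[str]:
    """Run each step after its dependencies; halt at the first failing step."""
    completed: List[str] = []
    # static_order() yields every node after all of its predecessors.
    for name in TopologicalSorter(graph).static_order():
        if not steps[name]():
            raise RuntimeError(f"step {name!r} failed; remaining steps were not run")
        completed.append(name)
    return completed

# Hypothetical pipeline: each key lists the steps it depends on.
PIPELINE: Dict[str, Set[str]] = {
    "access": set(),
    "integrate": {"access"},
    "model": {"integrate"},
    "test_model": {"model"},      # a test is just another node in the graph
    "visualize": {"test_model"},
}

if __name__ == "__main__":
    # Stand-in steps that always succeed.
    steps = {name: (lambda: True) for name in PIPELINE}
    print(run_pipeline(PIPELINE, steps))
```

Treating tests as ordinary graph nodes is a design choice: a failed check blocks the visualization step the same way a failed data load would.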

- DataKitchen – DataKitchen’s “single-pane-of-glass” DataOps Platform creates order out of chaos by flexibly orchestrating all of your analytic pipelines and their components, allowing your teams to work faster and collaborate more effectively.

- DataOps.Live — DataOps for Snowflake. 100% of your DataOps needs in one end-to-end platform.

Other Simple Orchestration Tools

- Airflow — An open-source platform to programmatically author, schedule, and monitor data pipelines.

- Apache Oozie — An open-source workflow scheduler system to manage Apache Hadoop jobs.

- DBT (Data Build Tool) — A command-line tool that enables data analysts and engineers to transform data in their warehouse more effectively.

- BMC Control-M — A digital business automation solution that simplifies and automates diverse batch application workloads.

- Composable Analytics — A DataOps Enterprise Platform with built-in services for data orchestration, automation, and analytics.

- Reflow — A system for incremental data processing in the cloud. Reflow enables scientists and engineers to compose existing tools (packaged in Docker images) using ordinary programming constructs.

- Dagster/ElementL — A data orchestrator for machine learning, analytics, and ETL.

- Astronomer.io – Astronomer recently refocused on Airflow support. It makes it easy to deploy and manage your own Apache Airflow webserver, so you can get straight to writing workflows.

- Piperr.io – Pre-built data pipelines across enterprise stakeholders, from IT to analytics, tech, data science, and lines of business (LoBs).

- Prefect Technologies — Open-source data engineering platform that builds, tests, and runs data workflows.

- Genie — Distributed big data orchestration service by Netflix.

- Saagie — Seamlessly orchestrates big data technologies to automate analytics workflows and deploy business apps anywhere.

Testing and Data Observability

DataOps reduces errors by monitoring analytics in production as well as validating new analytics before deployment.
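One way to picture this is a set of tests that run against the data itself, so the same checks can gate a change in development before deployment and then watch each production batch afterward. The sketch below is plain Python under that assumption; the `DataTest` type, the `run_tests` helper, and the checks on a hypothetical "orders" table are illustrative only and do not reflect any listed product's API.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Row = Dict[str, Any]

@dataclass
class DataTest:
    """A named check applied to a whole batch of rows (hypothetical type)."""
    name: str
    check: Callable[[List[Row]], bool]

def run_tests(batch: List[Row], tests: List[DataTest]) -> List[str]:
    """Return the names of the tests that fail for this batch."""
    return [t.name for t in tests if not t.check(batch)]

# Hypothetical checks for an "orders" table.
ORDER_TESTS = [
    DataTest("row_count_positive", lambda rows: len(rows) > 0),
    DataTest("order_id_not_null",
             lambda rows: all(r.get("order_id") is not None for r in rows)),
    # Missing amounts count as 0 here; a stricter suite would test nulls separately.
    DataTest("amount_non_negative",
             lambda rows: all((r.get("amount") or 0) >= 0 for r in rows)),
]

if __name__ == "__main__":
    batch = [{"order_id": 1, "amount": 20.0}, {"order_id": None, "amount": -5.0}]
    print(run_tests(batch, ORDER_TESTS))
```

Calling `run_tests` on sample data in a development environment and on every production batch lets one suite serve both validation before deployment and monitoring in production.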

Production Monitoring and Development Testing

- DataKitchen – Enables users to add tests at every step in their production and development pipelines.

- RightData – A self-service suite of applications that help you achieve Data Quality Assurance, Data Integrity Audit and Continuous Data Quality Control with automated validation and reconciliation capabilities.

- QuerySurge – Continuously detect data issues in your delivery pipelines.

Production Monitoring Only

- ICEDQ — Software used to automate the testing of ETL/Data Warehouse and Data Migration.

- Naveego — A simple, cloud-based platform that allows you to deliver accurate dashboards by taking a bottom-up approach to data quality and exception management.

- FirstEigen — Automatic data quality rule discovery and continuous data monitoring

- Great Expectations — Helps teams save time and promote analytic integrity with a new twist on automated testing: pipeline tests. Pipeline tests are applied to data (instead of code) and at batch time (instead of compiling or deploy time).

- Enterprise Data Foundation — Open-source enterprise data toolkit providing efficient unit testing, automated refreshes, and automated deployment.

- CompactBI — TestDrive is a testing framework for your data and the processes behind them. (Acquired by Informatica, July 2020)

- Databand — Data pipeline performance monitoring and observability for data engineering teams.

- Soda Data Monitoring — Soda tells you which data is worth fixing. Soda doesn’t just monitor datasets and send meaningful alerts to the relevant teams. It identifies and prioritizes data issues that are causing your business the most damage, and walks you through a resolution workflow.

- BigEye (formerly Toro Data) – Detect data quality problems and anomalies automatically. Then fix them before they hit production.

- OwlDQ — Predictive data quality

- Monte Carlo Data — Data reliability delivered. Data breaks. We ensure your team is the first to know and the first to solve.

- AccelData — Observability for analytics & AI. Observe, optimize, and scale enterprise data pipelines.

- Validio — Automated real-time data validation and quality monitoring.

- LightUp Data — Proactively detect and understand changes in product data that are symptomatic of deeper issues across the data pipeline – before they are noticed.

- BigEval – Get the most professional tools to validate enterprise data and maintain a high level of information quality.

- Telm.ai — Telm.ai helps data engineers and data architects to design, build and maintain robust and reliable data systems

Development Testing Only

- Tricentis – A continuous testing platform that is fully automated, codeless, and driven by AI.

Application Performance Monitoring

- Unravel — Manages the performance and utilization of big data applications and platforms.

- SelectStar — Database monitoring solution with alerts, monitoring, and relationship mapping.

- Redgate – SQL tools to help users implement DataOps, monitor database performance, and provision new databases.

Sandbox Creation and Management

Successful DataOps requires the on-demand provisioning of complete, aligned, self-service analytics environments. The ability to copy and paste an entire analytic platform and then use it to iterate on new ideas is one of the most effective ways that DataOps improves velocity.
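A rough illustration of copying a whole platform: treat the environment as a single configuration, deep-copy it, and override only what must be isolated, such as the warehouse schema. The configuration keys and the `create_sandbox` helper are hypothetical; real platforms also copy code, data, and infrastructure, which this sketch leaves out.

```python
import copy
from typing import Any, Dict

# Hypothetical description of a production analytics environment.
PRODUCTION: Dict[str, Any] = {
    "warehouse": {"database": "analytics", "schema": "prod"},
    "pipelines": ["daily_sales", "churn_model"],
    "parameters": {"lookback_days": 90},
}

def create_sandbox(base: Dict[str, Any], owner: str) -> Dict[str, Any]:
    """Copy the entire environment, then isolate only what must differ."""
    sandbox = copy.deepcopy(base)                    # nested dicts/lists are copied, not shared
    sandbox["warehouse"]["schema"] = f"dev_{owner}"  # private schema for this user
    sandbox["owner"] = owner
    return sandbox

if __name__ == "__main__":
    print(create_sandbox(PRODUCTION, "alice")["warehouse"])
```

Because the sandbox starts as an exact copy, it stays aligned with production, and the deep copy keeps edits made in the sandbox from leaking back into the production definition.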

- DataKitchen — a DataOps Platform that supports the deployment of all data analytics code and configuration.

- Lentiq — Lentiq is the data science environment that brings your projects to life.

- DataOps.Live — DataOps for Snowflake: 100% of your DataOps needs in one end-to-end platform

DevOps Infrastructure Tools

- Puppet – Manages and automates infrastructure and complex workflows.

- Ansible – Delivers simple IT automation that ends repetitive tasks and frees up DevOps teams for more strategic work

- Terraform – Open-source infrastructure as code software tool that provides a consistent CLI workflow to manage hundreds of cloud services.

Continuous Deployment

DataOps increases agility by enabling analytics to move quickly and seamlessly between development, test, and production environments.
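The promotion path can be sketched as a fixed sequence of environments in which an artifact advances one stage at a time, and a move is accepted only if its tests pass in the target environment. The environment names, the `promote` function, and the `run_tests` callback are assumptions made for illustration, not any vendor's deployment API.

```python
from typing import Callable, List

# Hypothetical promotion order; real teams may use more stages.
ENVIRONMENTS: List[str] = ["development", "test", "production"]

def promote(artifact: str, current: str,
            run_tests: Callable[[str, str], bool]) -> str:
    """Return the next environment for the artifact if its tests pass there."""
    idx = ENVIRONMENTS.index(current)
    if idx == len(ENVIRONMENTS) - 1:
        raise ValueError(f"{artifact!r} is already in {current!r}")
    target = ENVIRONMENTS[idx + 1]
    # The caller-supplied callback decides whether the artifact is fit for the target.
    if not run_tests(artifact, target):
        raise RuntimeError(f"tests failed for {artifact!r} in {target!r}; not promoted")
    return target

if __name__ == "__main__":
    always_pass = lambda artifact, env: True
    print(promote("churn_model", "development", always_pass))
```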

- DataKitchen — a DataOps Platform that supports the deployment of all data analytics code and configuration.

- Amaterasu – A deployment tool for data pipelines. Amaterasu allows developers to write and easily deploy data pipelines, and lets clusters manage their configuration and dependencies.

- Spectacles — Deploy your LookML with confidence. Spectacles automatically tests your LookML to ensure Looker always runs smoothly for your users.

Database Deployment

- Liquibase — Database release automation for software development teams

- DBMaestro — DevOps for the database

DevOps Deployment Tools

- Jenkins — a ‘CI/CD’ tool used by software development teams to deploy code from development into production

- Azure DevOps

- AWS Code Deploy

- AWS Code Pipeline

- Google Cloud Build

Process Analytics

DataOps requires that teams measure their analytic processes in order to see how they are improving over time. A complete DataOps program will have a unified, system-wide view of process metrics using a common data store.

- DataKitchen — The DataKitchen Platform provides unprecedented visibility into the state of your data operations with process metrics that show how your teams are increasing collaboration, improving productivity, expanding test coverage, reducing errors, speeding deployment cycle times, and consistently meeting deadlines.
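As a hedged sketch of what a common store of process metrics might compute, the example below derives two of the measures mentioned in this section, deployment cycle time and test failure rate, from a list of change records. The `ChangeRecord` fields and both metric functions are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import List

@dataclass
class ChangeRecord:
    """One change moving through the pipeline (hypothetical schema)."""
    requested: datetime   # when the change was requested
    deployed: datetime    # when it reached production
    tests_run: int
    tests_failed: int

def mean_cycle_time_days(records: List[ChangeRecord]) -> float:
    """Average time from request to production deployment, in days.

    Raises statistics.StatisticsError if records is empty.
    """
    return mean((r.deployed - r.requested).total_seconds() / 86400 for r in records)

def failure_rate(records: List[ChangeRecord]) -> float:
    """Fraction of all executed tests that failed (0.0 if none ran)."""
    total = sum(r.tests_run for r in records)
    failed = sum(r.tests_failed for r in records)
    return failed / total if total else 0.0
```

Tracking these numbers over successive months, rather than reading them once, is what shows whether a team is actually improving.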

Collaboration and Code Repository

DataOps fosters collaboration through a single view of the entire analytic system, as well as the ability to save and share reusable analytic components.

- DataKitchen — Fosters collaboration by providing a common place to work and a single view of the end-to-end analytic process. Team members innovate and experiment in separate but aligned Kitchens. Users can easily save and share commonly used parts of pipelines.

- Bitbucket – Git code management. Bitbucket also gives teams one place to plan projects, collaborate on code, test, and deploy.

- Git – A free and open-source distributed version control system.

- GitHub – A provider of Internet hosting for software development and version control using Git. It offers the distributed version control and source code management functionality of Git, plus its own features.

- AWS Code Commit – A fully-managed source control service that hosts secure Git-based repositories.

- Azure Repos – Unlimited, cloud-hosted private Git repos.

ModelOps/MLOps

ModelOps and MLOps fall under the umbrella of DataOps, with a specific focus on the automation of data science model development and deployment workflows.

- DataKitchen — A DataOps Platform that supports the testing and deployment of data science models and the creation of sandbox data science environments.

- Domino — Accelerates the development and delivery of models with infrastructure automation, seamless collaboration, and automated reproducibility.

- Hydrosphere.io — Deploys batch Spark functions, machine-learning models, and assures the quality of end-to-end pipelines.

- ModelOp — Governs, monitors, and orchestrates models across the enterprise.

- ParallelM — Moves machine learning into production, automates orchestration, and manages the ML pipeline. (Acquired by DataRobot June 2019)

- Seldon — Streamlines the data science workflow, with audit trails, advanced experiments, continuous integration, and deployment.

- Metis Machine — Enterprise-scale Machine Learning and Deep Learning deployment and automation platform for rapid deployment of models into existing infrastructure and applications.

- Datatron — Automates deployment and monitoring of AI models

- Datmo – Datmo tools help you seamlessly deploy and manage models in a scalable, reliable, and cost-optimized way.

- MLflow – An open-source platform from Databricks for the complete machine learning lifecycle.

- Studio.ML — A model management framework written in Python to help simplify and expedite your model-building experience.

- Comet.ML — Allows data science teams and individuals to automagically track their datasets, code changes, experimentation history and production models creating efficiency, transparency, and reproducibility.

- Polyaxon — An open-source platform for reproducible machine learning at scale.

- Kubeflow — The Machine Learning Toolkit for Kubernetes

- Verta.ai — AI and Machine Learning deployment and operations for enterprise data science teams.

- Omega | ML — Python AI/ML analytics deployment & collaboration for humans

- CD Foundation SIG on MLOps

- Quilt Data — Versions and deploys data. Like Docker for data.

- Pachyderm — version control for data, similar to what Git does with code.

- DVC (Data Version Control) – Open-source version control system for machine learning projects.

DataGovOps/DataSecOps

DataGovOps and DataSecOps tools apply DataOps principles to data governance and security activities so that they execute in tandem with development and deployment activities.

- Delphix — A software platform that enables teams to virtualize, secure and manage data.

- Privitar – More data-driven decisions without compromising on privacy. Get more business value from sensitive data while enhancing privacy protection.

- Hazy — Generates smart synthetic data that’s safe to use and actually works as a drop-in replacement for real data science, model training and analytics workloads.

- GenRocket – Achieve continuous testing with enterprise test data generation. The system was designed to help QA teams generate the exact test data they need at a low cost.

- eXate – A DataSecOps solution that simplifies the way organizations access and share data.

- Varada – Self-optimizing cloud data virtualization platform.

Other Vendors Talking DataOps

In addition to the tools above, there are many data and analytic toolchain vendors that use DataOps in their messaging. However, these solutions are independent components of the data toolchain that collect, store, transform, visualize, and govern the data running through the pipeline. Although all of these technologies play an important role in the value pipeline, they do not meet the definition of DataOps tools given above. Some of these vendors have redefined DataOps to fit what their product does. Others correctly define DataOps but pursue “halo effect” marketing (e.g., “DataOps is great, but use our tool first”).

- Nexla – Scalable and secure Data Operations platform that allows business users to send, receive, transform, and monitor data.

- Switchboard Software — fully managed, cloud-hosted data operations solution that integrates, cleans, transforms and monitors data.

- Tamr – Enterprise data unification solution that uses a bottom-up, machine-learning-based approach.

- StreamSets — The industry’s first data operations platform for full life-cycle management of data in motion.

- Infoworks — Use big data automation to simplify data engineering and DataOps

- Lenses – The enterprise overlay for Apache Kafka® & Kubernetes

- IBM – IBM has rebranded several of its products as DataOps.

- Hitachi Vantara – Digital operations, infrastructure solutions, IOT applications, data management, and multi-cloud acceleration.

- Atlan – A data workspace for data catalogs, quality, lineage, and exploration

- Zaloni – The leading augmented data management platform.

- Qlik – End-to-end, multi-cloud data integration and analytics solutions.

- SentryOne – SentryOne is a leading provider of database performance monitoring and DataOps solutions on SQL Server, Azure SQL Database, and the Microsoft Data Platform.

- Rivery – Automate, manage, and transform data so it can be fed back to stakeholders as meaningful insights.

- Qubole – A big data-as-a-service company whose cloud-based platform extracts value from huge volumes of structured and unstructured data.

Service and Consulting Organizations with some DataOps experience

There are a growing number of service and consulting organizations developing expertise in DataOps.

- Kinaesis – Works with clients in financial services to leverage their investment in data solutions and generate real value.

- Capgemini — Capgemini is building a practice area around DataOps

- John Snow Labs – Data curation, data science, data engineering, and data operations services, specializing in healthcare and life science.

- XenonStack — DataOps, DevOps, decision support, big-data analytics, and IoT services

- Locke Data — Data science services

- Cognizant – Data and analytics consulting practice

- Wipro – Data, analytics, and AI consulting

- IBM — In addition to selling DataOps-branded products, IBM offers DataOps consulting services

- Great Data Minds – Data modernization consulting

- LEIT — Specialists in data and analytics solutions

- Cynozure – Data and analytics strategy consulting