Gartner Research “Introducing DataOps Into Your Data Management Discipline”

Published: 31 October 2019 ID: G00376495

Analyst(s): Ted Friedman, Nick Heudecker

Summary

“To relieve bottlenecks and barriers in delivery of data and analytics solutions, organizations need to… introduce DataOps techniques in a focused manner, data and analytics leaders can affect a shift toward more rapid, flexible and reliable delivery of data pipelines.”

Key Challenges

- “…speed and reliability of project delivery they desire because too many roles, too much complexity and constantly shifting requirements” (see our post on analytics at Amazon speed: https://www.www.datakitchen.io/high-velocity-data-analytics-with-dataops.html)

- “In most organizations, this complexity is exacerbated by limited or inconsistent coordination across the roles involved in building, deploying and maintaining data pipelines.” At DataKitchen we’ve written a lot about what collaboration means in DataOps. On intra-team coordination, see DataOps Teamwork (Aug 2019); on inter-team coordination, see Warring Tribes (April 2019) and Centralization vs. Freedom (Oct 2018).

- “Data and analytics leaders often have difficulty determining the optimal pace of change when introducing new techniques.” We recently addressed this problem in our high-level overviews What is DataOps? and What is DataOps – Top Ten Questions.

Recommendations

-

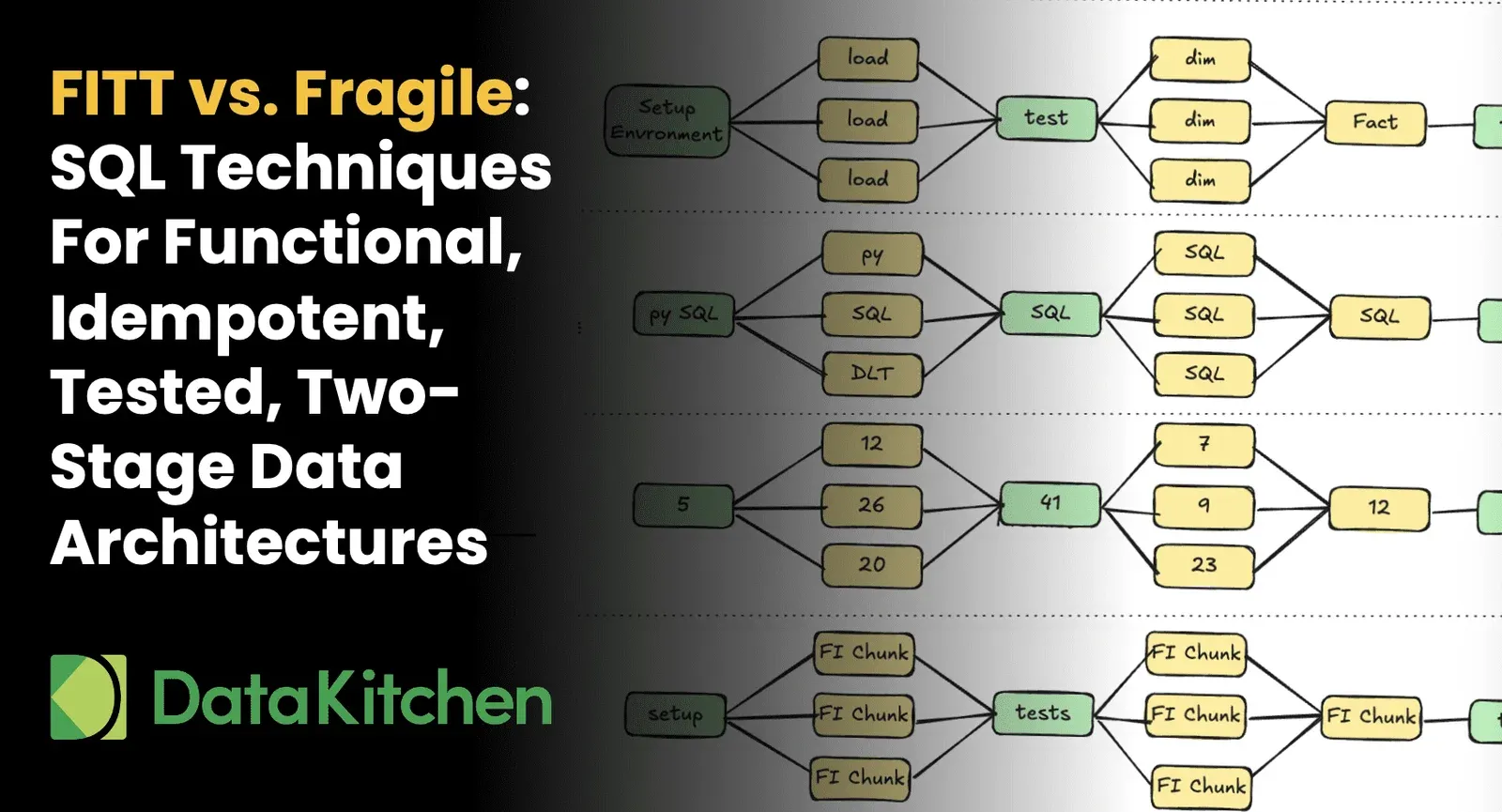

“Enable greater reliability, adaptability and speed by leveraging techniques from agile application development and deployment (DevOps) in your data and analytics work”: https://www.www.datakitchen.io/dataops-vs-devops.html

- Increased deployment frequency: rapid and continuous delivery of new functionality https://www.www.datakitchen.io/analytics-at-amazon-speed.html;

- Automated testing: don’t rely on manual testing, which creates an error fiesta https://medium.com/data-ops/dataquality/home;

- Version control: tracking changes across all participants in data pipeline delivery https://medium.com/data-ops/the-best-way-to-manage-your-data-analytics-source-files-7559d48db693 and https://medium.com/data-ops/how-to-enable-your-data-analytics-team-to-work-in-parallel-e0cb2a5c2289; and

- Monitoring: continuously tracking and testing the behavior of pipelines in production https://medium.com/data-ops/how-data-analytics-professionals-can-sleep-better-6dedfa6daa08

- Collaboration across all stakeholders: “ … essential to speed of delivery.” Enable collaboration across key roles (data engineer, data scientist, visualization, governance, etc.) by including them in a common process. See Warring Tribes (April 2019) and Centralization vs. Freedom (Oct 2018).

- Complex data pipelines can involve all of these roles, and a lack of consistent communication and coordination across them adds time and introduces errors. See DataOps Teamwork (Aug 2019).

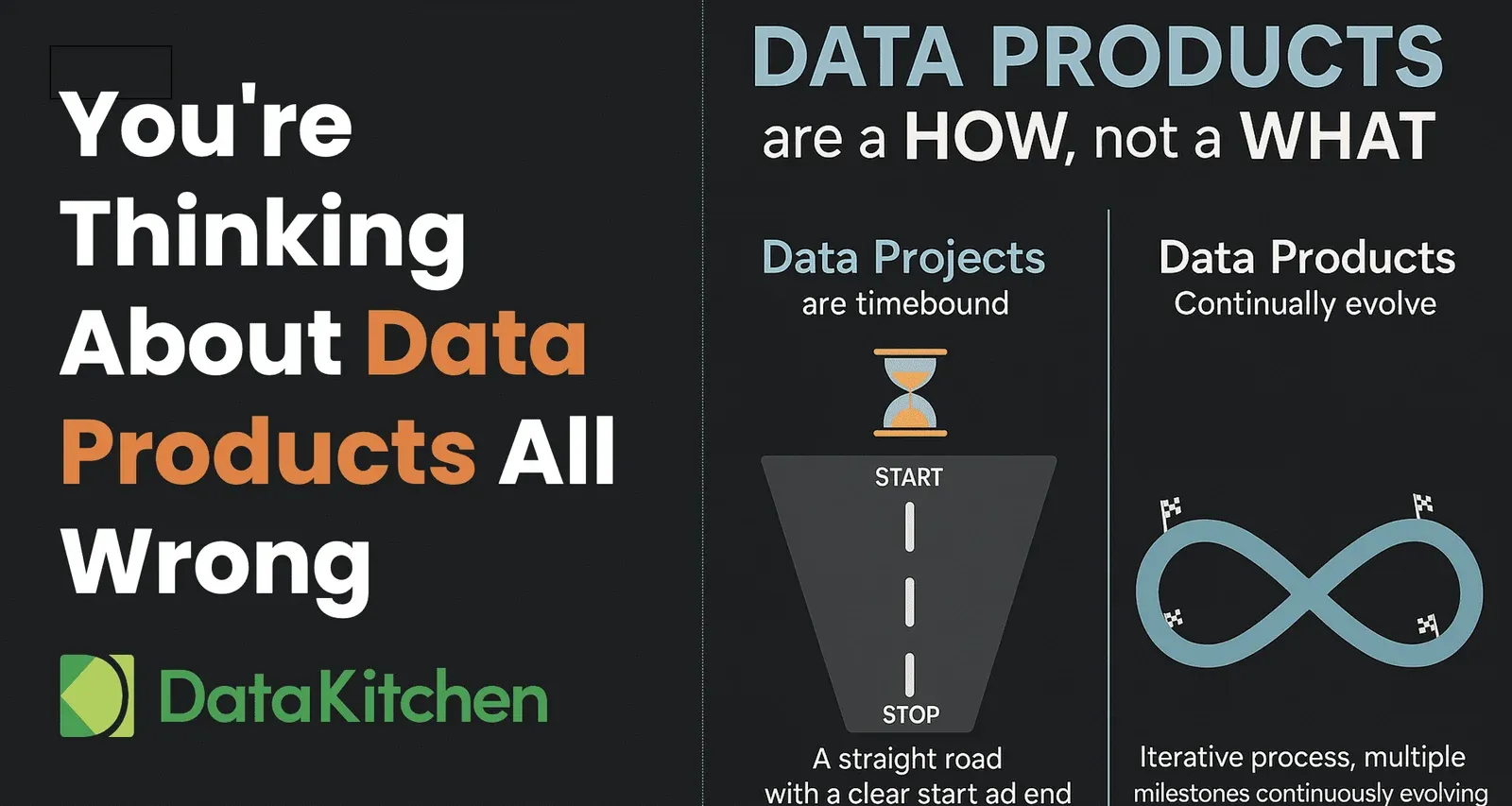

Introducing these DataOps capabilities

“To avoid introducing too much change too quickly, data and analytics leaders can focus on a subset of the steps in the value chain, rather than immediately introducing new approaches in every step executed by every role.”

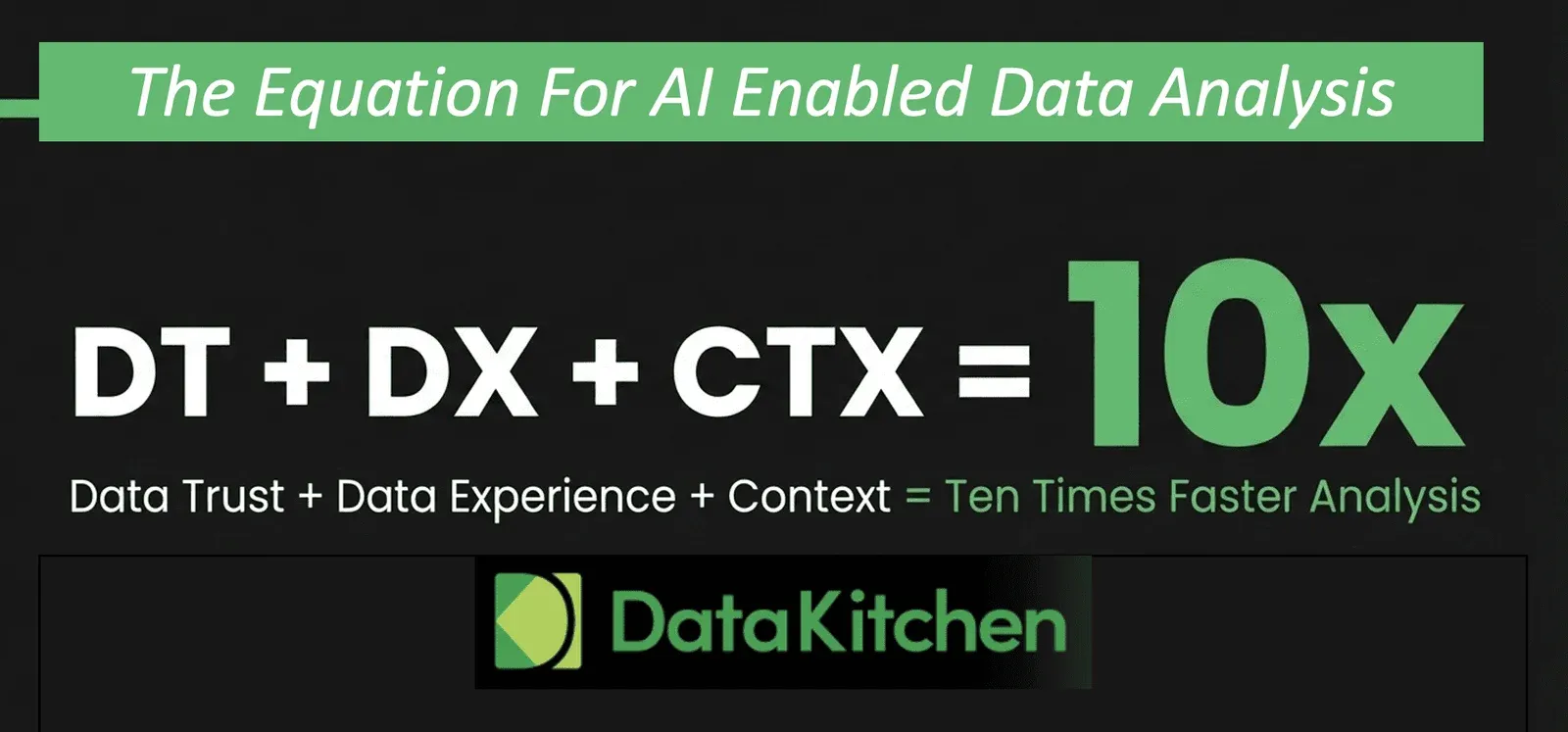

Analytics teams need to move faster, but cutting corners invites problems in quality and governance. How can you reduce the cycle time to create and deploy new analytics (data, models, transformations, visualizations, etc.) without introducing errors? The answer lies in finding and eliminating the bottlenecks that slow down analytics development: https://www.www.datakitchen.io/whitepaper-dataops-bottlenecks.html

How can you do this with design thinking in mind? https://medium.com/data-ops/enabling-design-thinking-in-data-analytics-with-dataops-4765bcbf8211

DataOps Uptake

Gartner end-user client inquiry data shows a 200% year-over-year increase in DataOps-related questions in 2019 (year to date).