How does a data team prevent poor data from poisoning AI when they have piles of raw and imperfect data?

Teams responsible for data used to train AI models (e.g., LLMs) face a persistent problem: piles of raw, imperfect data. Pressure builds to process quickly, publish promptly, and push data into pipelines. But passing problematic data into production models can produce biased predictions, polluted patterns, and poor performance.

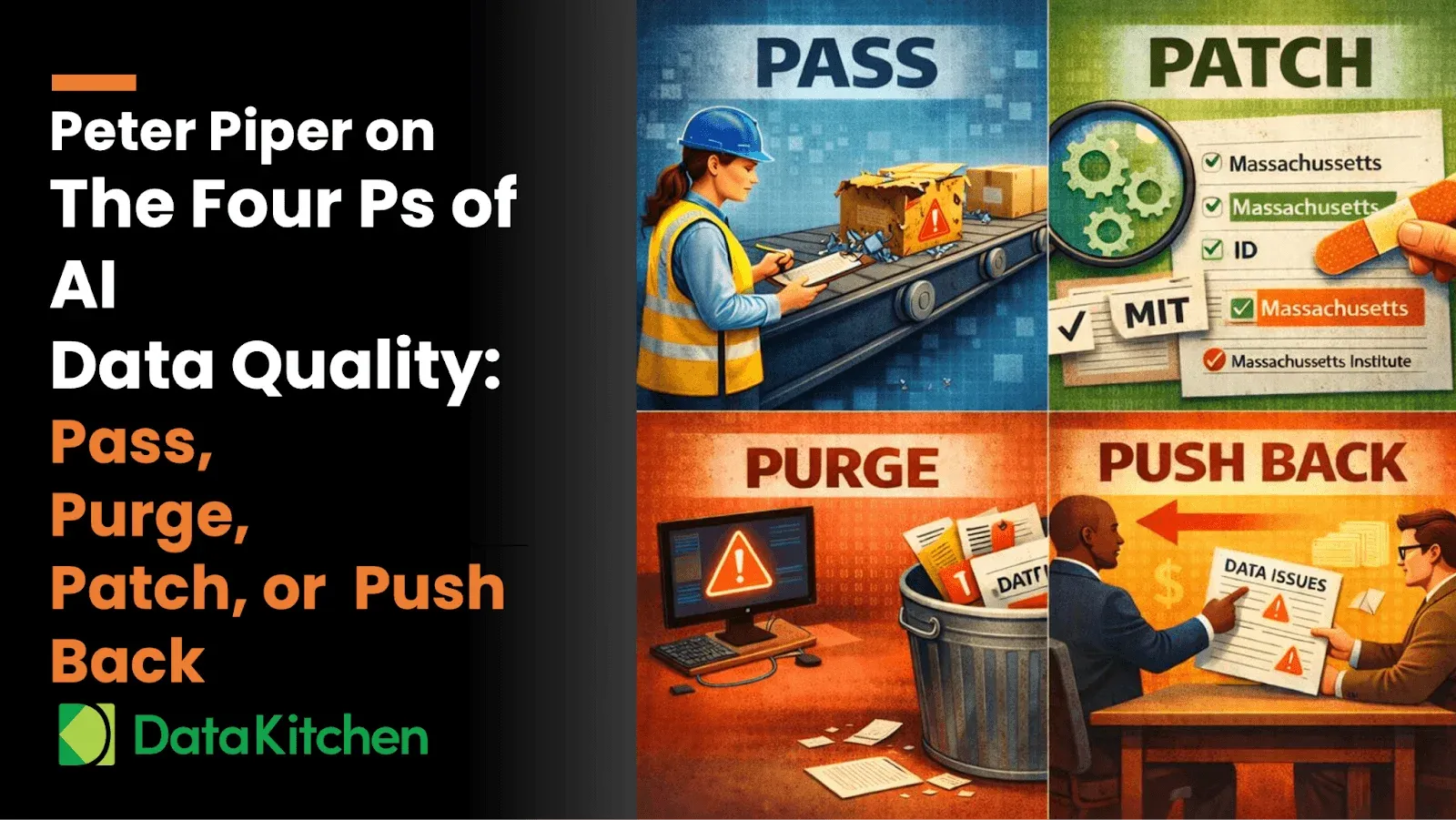

Before pipelines proceed, managers must pause and pick a path. There are four practical options for handling raw data: Pass, Purge, Patch, and Push Back.

Let’s walk through the four options in a pragmatic progression.

1. Pass: The Path of Least Preparation

You can do nothing and simply pass all data to the model. No profiling. No policing. No protection.

This path promises speed and simplicity. It takes the least work but carries the greatest potential risk. Poorly prepared data propagates problems downstream, where models may:

- Learn patterns that never persist

- Produce predictions based on polluted fields

- Perpetuate problems at production scale

Passing should be a conscious, calculated choice – not a default.

Before passing, teams should profile data quality and consider the remaining paths.
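
As a rough illustration of what profiling before passing can look like, here is a minimal sketch using only the Python standard library. The `profile` function, the sample records, and the field names (`id`, `school`, `enrolled`) are hypothetical and chosen for illustration; a real profiling tool will check far more than missing and distinct counts.

```python
def profile(records, fields):
    """Return, per field, how many values are missing and how many are distinct."""
    total = len(records)
    report = {}
    for field in fields:
        values = [r.get(field) for r in records]
        # Treat None and empty strings as missing (an assumption for this sketch).
        missing = sum(1 for v in values if v is None or v == "")
        distinct = len({v for v in values if v is not None and v != ""})
        report[field] = {
            "missing": missing,
            "missing_pct": round(100 * missing / total, 1) if total else 0.0,
            "distinct": distinct,
        }
    return report


# Hypothetical sample data.
records = [
    {"id": "a1", "school": "MIT", "enrolled": "2021-09-01"},
    {"id": "a2", "school": "", "enrolled": "2022-09-01"},
    {"id": None, "school": "MIT", "enrolled": "2023-09-01"},
    {"id": "a4", "school": "Massachusetts Institute of Technology", "enrolled": None},
]

report = profile(records, ["id", "school", "enrolled"])
for field, stats in report.items():
    print(field, stats)
```

Even this small profile surfaces a null key, a missing required value, and two spellings of the same school, which is enough to show that passing would not be a safe default here.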

2. Purge: Preventing Poisoned Patterns

When a record contains a clear data quality problem, the most prudent path may be to purge it.

Purge means delete.

Examples include:

- A date in the future when future values are impossible

- A required field that is missing

- A primary key that is null or malformed

Purging prevents polluted records from poisoning patterns learned by AI models. While purging reduces volume, it protects validity and preserves precision.

This is not punishment – it is protection.
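
The three purge examples above can be sketched as simple rules. This is a minimal sketch under stated assumptions: the field names (`id`, `name`, `event_date`) and the rule wording are hypothetical, and keeping a list of purged records with their reasons is an optional design choice for auditing, not something the purge itself requires.

```python
from datetime import date


def purge_reasons(record, today):
    """Return the reasons a record should be purged (empty list if it is clean)."""
    reasons = []
    key = record.get("id")
    if not isinstance(key, str) or not key.strip():
        reasons.append("null or malformed primary key")
    if not record.get("name"):
        reasons.append("missing required field: name")
    event = record.get("event_date")
    if isinstance(event, date) and event > today:
        reasons.append("event_date is in the future")
    return reasons


def purge(records, today):
    """Split records into those kept and those purged, with reasons for each purge."""
    kept, purged = [], []
    for record in records:
        reasons = purge_reasons(record, today)
        if reasons:
            purged.append((record, reasons))
        else:
            kept.append(record)
    return kept, purged


# Hypothetical sample data; a fixed "today" keeps the example reproducible.
today = date(2025, 1, 1)
records = [
    {"id": "r1", "name": "Ada", "event_date": date(2024, 5, 1)},
    {"id": None, "name": "Bob", "event_date": date(2024, 5, 2)},
    {"id": "r3", "name": "", "event_date": date(2024, 5, 3)},
    {"id": "r4", "name": "Cy", "event_date": date(2031, 1, 1)},
]

kept, purged = purge(records, today)
print(f"kept {len(kept)}, purged {len(purged)}")
```

Recording why each record was dropped makes the lost volume explainable later, and the same list can feed a conversation with the data supplier.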

3. Patch: Precise, Programmatic Problem-Solving

Sometimes, problems are predictable – and patchable.

If your team knows how to fix an issue safely, patching is powerful.

Examples include:

- Misspelled fields or values

- Multiple phrases for the same concept, such as “MIT” vs. “Massachusetts Institute of Technology”

- Missing identifiers that can be populated from permitted public sources, for example NPI numbers from the National Plan and Provider Enumeration System (hhs.gov)

Patching preserves records while improving precision. It is particularly powerful when:

- Problems are patterned

- Fixes are provable

- Processes are programmable

Patch with purpose – not guesswork.
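
A patterned, provable, programmable patch can be as simple as a lookup table of known variants. The sketch below is illustrative only: the `CANONICAL_NAMES` mapping, the `school` field, and the misspelling it lists are hypothetical. Values that are not in the table are left untouched, so the patch never guesses.

```python
# Hypothetical mapping from known variants (lowercased) to one canonical value.
CANONICAL_NAMES = {
    "mit": "Massachusetts Institute of Technology",
    "massachusetts institute of technology": "Massachusetts Institute of Technology",
    "massachusets institute of technology": "Massachusetts Institute of Technology",
}


def patch_school(record):
    """Return (record, changed). Only known variants are rewritten."""
    raw = record.get("school") or ""
    # Normalize case and whitespace before looking up the variant.
    key = " ".join(raw.lower().split())
    fixed = CANONICAL_NAMES.get(key)
    if fixed is None or fixed == raw:
        return record, False
    patched = dict(record)  # copy so the original record is not mutated
    patched["school"] = fixed
    return patched, True


patched, changed = patch_school({"id": "a1", "school": "  mit "})
unknown, unknown_changed = patch_school({"id": "a2", "school": "Stanford"})
print(patched, changed)
print(unknown, unknown_changed)
```

Returning a `changed` flag makes it easy to count how many records were patched, which is useful evidence when deciding whether the problem should be fixed upstream instead.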

4. Push Back: Partner Pressure for Proper Data

Sometimes the problem is upstream.

When data comes from providers, platforms, or partners, teams can push back:

- Send suppliers a precise list of problematic elements

- Provide proof, percentages, and problem patterns

- Request correction at the source

You have more leverage when:

- You pay for the data

- You have published data quality requirements

- Your contracts include quality provisions

Pushing back promotes partnership, not punishment. It improves future feeds, reduces repeated patching, and produces more predictable pipelines.
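
The "proof, percentages, and problem patterns" a supplier needs can be assembled from a plain list of detected issues. This is a minimal sketch: `summarize_issues`, the issue labels, and the record IDs are hypothetical, and the input is assumed to be a list of `(record_id, issue_type)` pairs produced by whatever checks your team already runs.

```python
from collections import Counter, defaultdict


def summarize_issues(issues, total_records, max_examples=3):
    """Build one summary line per issue type: count, percentage, example record IDs."""
    if total_records <= 0:
        raise ValueError("total_records must be positive")
    counts = Counter(issue_type for _, issue_type in issues)
    examples = defaultdict(list)
    for record_id, issue_type in issues:
        if len(examples[issue_type]) < max_examples:
            examples[issue_type].append(record_id)
    lines = []
    # most_common() lists the most frequent issue types first.
    for issue_type, count in counts.most_common():
        pct = 100 * count / total_records
        sample = ", ".join(examples[issue_type])
        lines.append(
            f"{issue_type}: {count} of {total_records} records ({pct:.1f}%), e.g. {sample}"
        )
    return lines


# Hypothetical issue list for a feed of 200 records.
issues = [
    ("r2", "missing NPI"),
    ("r5", "missing NPI"),
    ("r7", "future date"),
    ("r9", "missing NPI"),
]

summary = summarize_issues(issues, total_records=200)
for line in summary:
    print(line)
```

A short summary like this, sorted by frequency and backed by example IDs, gives a supplier something concrete to reproduce and correct at the source.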

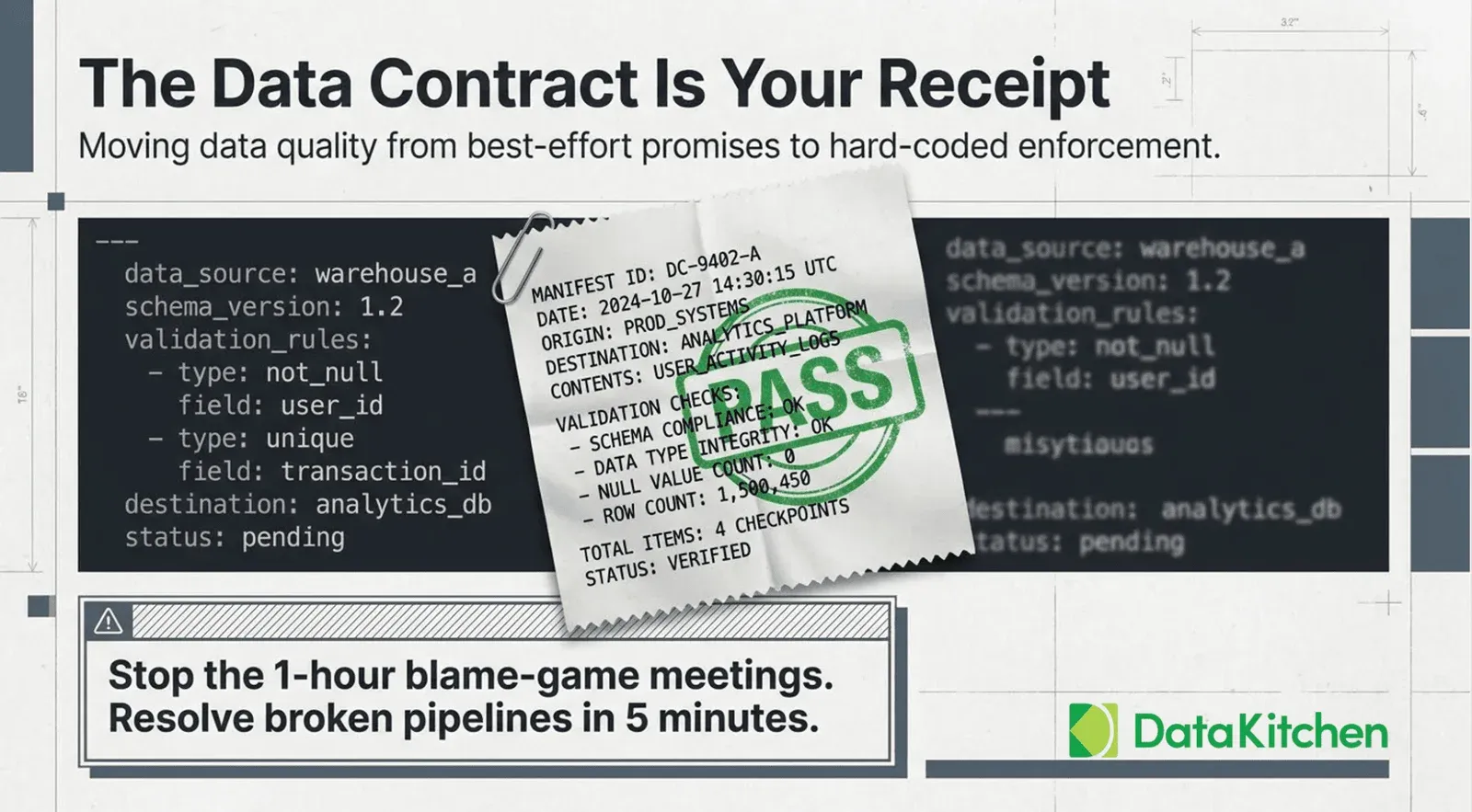

Assess Data Quality with DataKitchen TestGen

Before picking an option that requires action, teams must assess data quality.

DataKitchen’s TestGen enables teams to:

- Profile datasets quickly

- Pinpoint problematic records

- Produce precise data quality tests

- Output detailed issue listings

- Publish professional reports for partners and providers

TestGen helps teams decide:

- Which records to patch

- Which records to purge

- When to push back

- When it’s safe to pass

Most importantly: don’t let bad data pass blindly.

Conclusion: Purposeful Preparation Produces Powerful Predictions

Passing poor data produces predictable problems. Purposeful preparation prevents polluted pipelines. By profiling proactively, purging problematic records, patching predictable problems, and pushing back on poor providers, teams gain control, confidence, and credibility.

With precise profiling, principled processes, and practical platforms like TestGen, managers can protect pipelines, promote performance, and produce powerful, polished AI models – not by chance, but by plan.