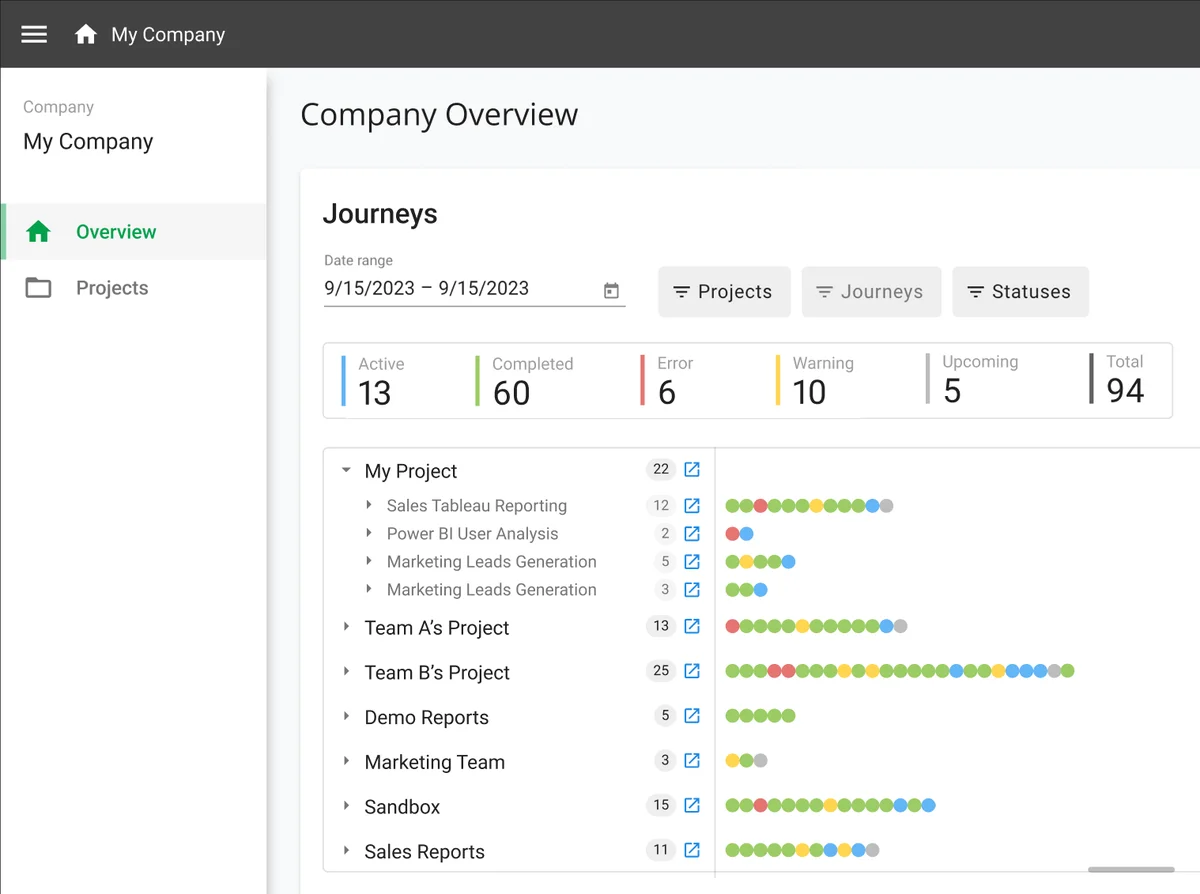

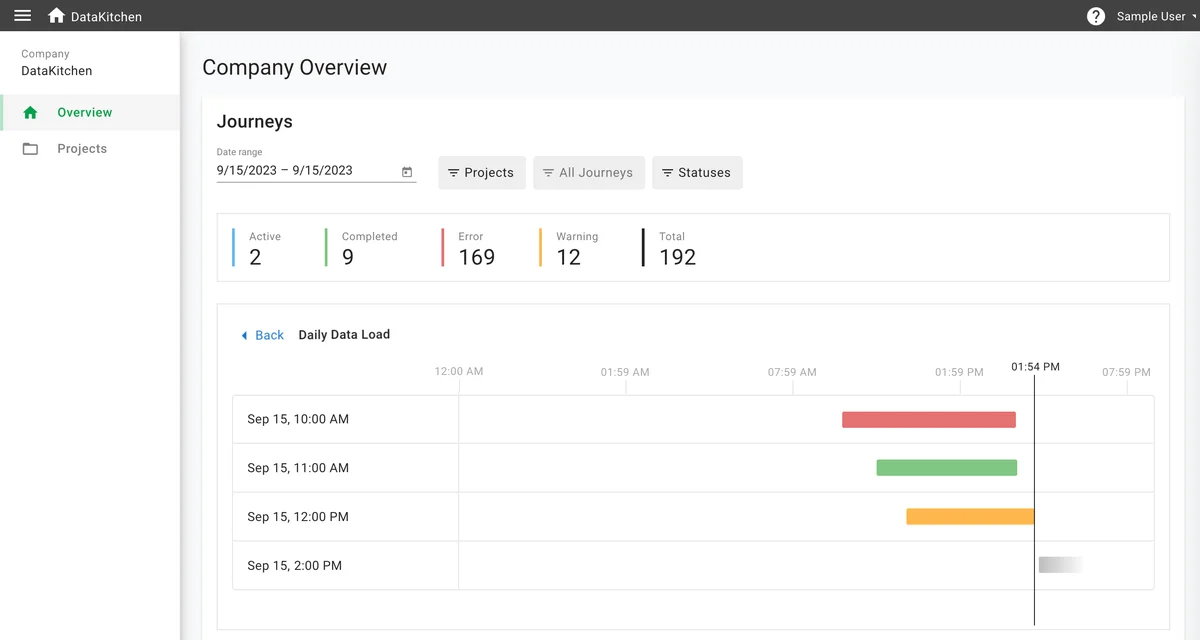

Mission Control for Your Data Estate

DataOps Observability is mission control for every data journey you run. Instead of monitoring individual pipelines in isolation, it gives you a unified view across every tool, team, and environment, so you see the problem, know which component caused it, and know which downstream consumers are affected.

"After implementing, we reduced errors to just about one per quarter. We kept adding tests over time; it has been several years since we've had any major glitches. This has dramatically increased our team's efficiency and our end stakeholders' confidence in the data."

— Associate Director, Insights, Top 10 Global Pharmaceutical Company

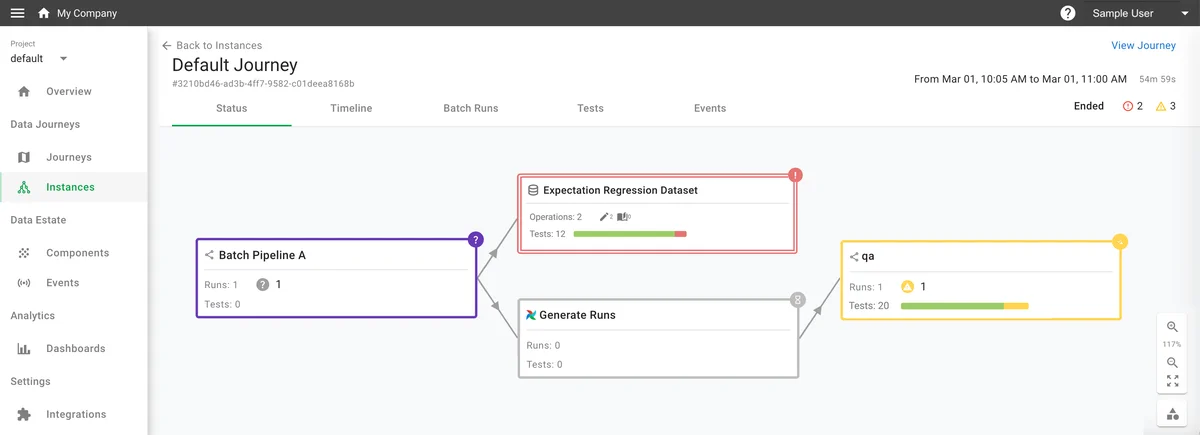

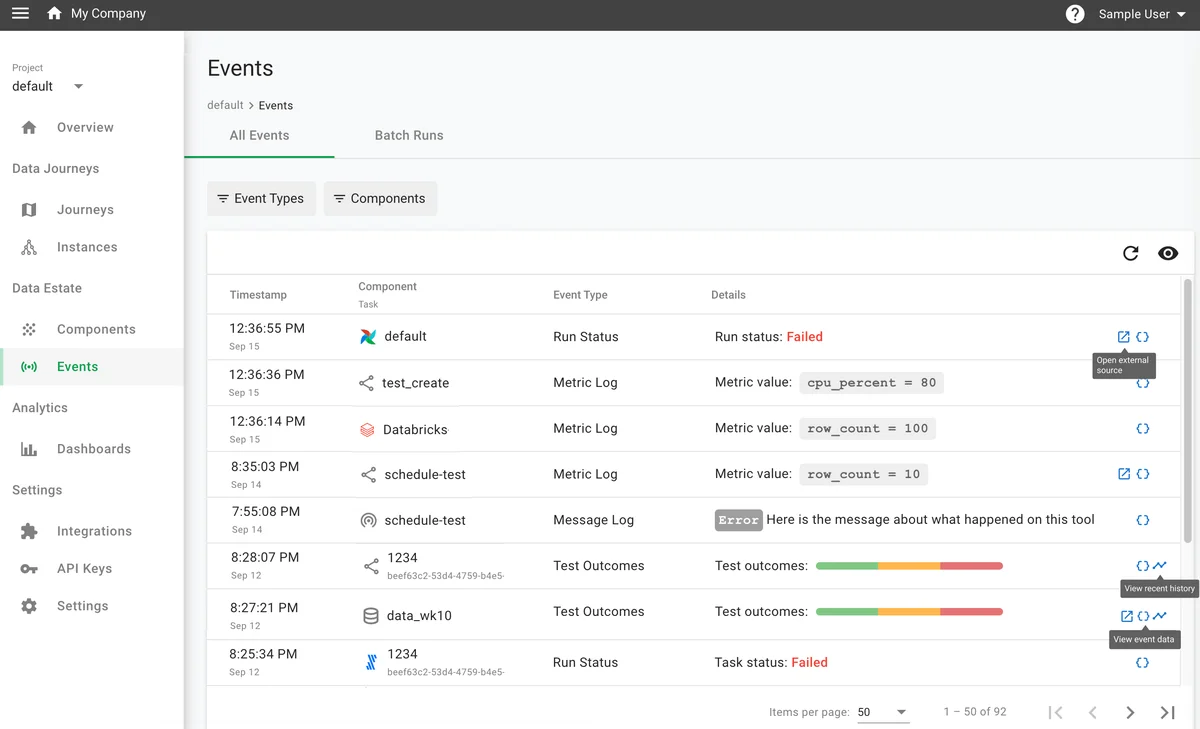

Components

Components are the building blocks: batch pipelines, streaming pipelines, datasets (tables and files), and infrastructure. Every event attaches to a specific component, so you know what happened and where.
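To make the event-to-component relationship concrete, here is a minimal Python sketch. It is an illustration only, not the product's data model or API: the names `ComponentType`, `Component`, `Event`, and `events_by_component` are hypothetical, as are the example component keys and event kinds.

```python
from dataclasses import dataclass
from enum import Enum


class ComponentType(Enum):
    # The four component kinds named in the text.
    BATCH_PIPELINE = "batch_pipeline"
    STREAMING_PIPELINE = "streaming_pipeline"
    DATASET = "dataset"
    INFRASTRUCTURE = "infrastructure"


@dataclass(frozen=True)
class Component:
    key: str  # hypothetical unique identifier
    type: ComponentType


@dataclass(frozen=True)
class Event:
    component: Component  # every event points at exactly one component
    kind: str             # hypothetical event kind, e.g. "run_failed"


def events_by_component(events):
    """Group events by component key, answering 'what happened, and where'."""
    grouped = {}
    for event in events:
        grouped.setdefault(event.component.key, []).append(event.kind)
    return grouped


nightly_load = Component("nightly_load", ComponentType.BATCH_PIPELINE)
sales_table = Component("sales_table", ComponentType.DATASET)

events = [
    Event(nightly_load, "run_started"),
    Event(sales_table, "dataset_late"),
    Event(nightly_load, "run_failed"),
]
grouped = events_by_component(events)
```

Because each event carries its component, grouping or filtering by location needs no extra lookup.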

"When you start looking underneath those pipelines, you start seeing how many places things can go wrong."

— Head of Data Engineering, Top Ten Pharmaceutical Company

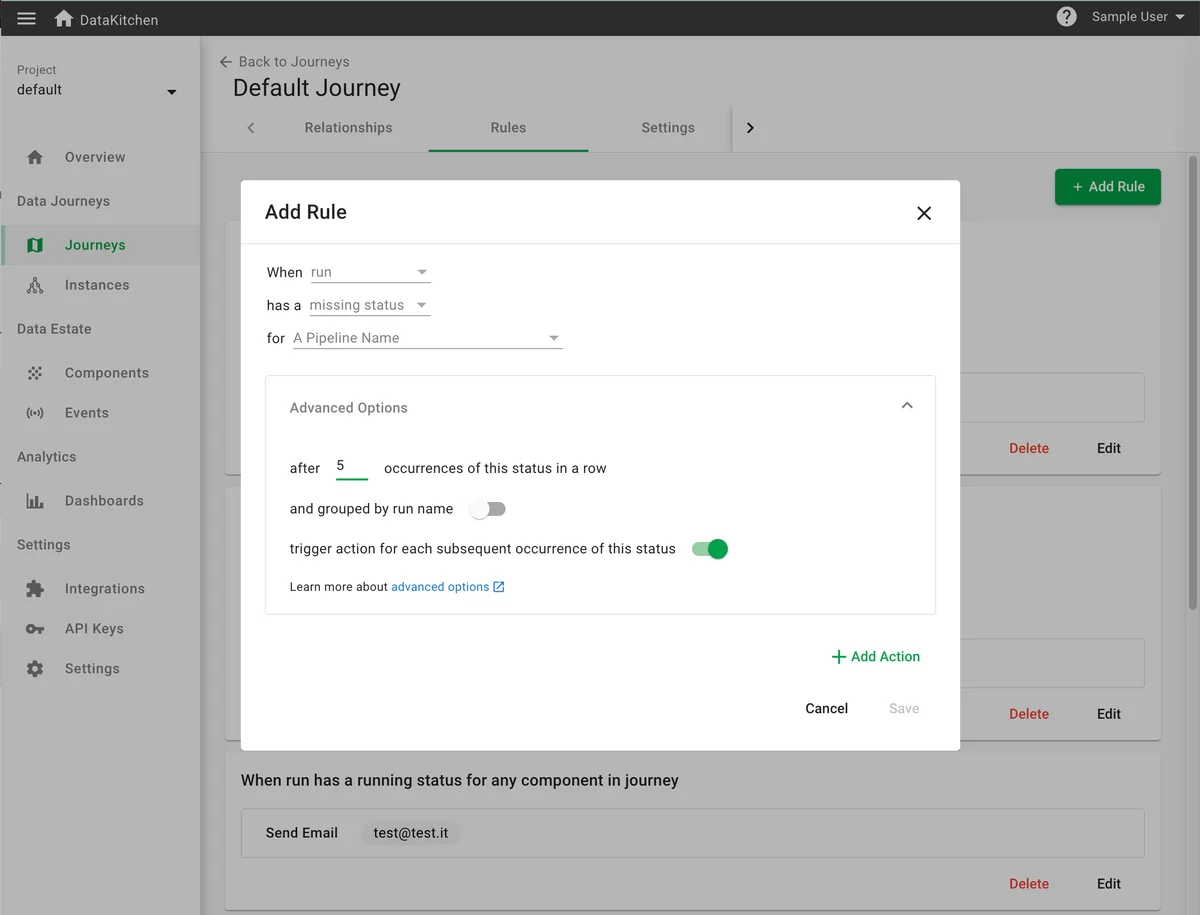

Rules

Trigger-condition-action rules define what Observability watches for and how it responds. Set a rule to fire when a run fails, a dataset arrives late, or test results regress. Route the notification to email, Slack, Teams, or Jira.
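The trigger-condition-action pattern can be sketched in a few lines of Python. This is a hedged illustration of the pattern, not the product's rule engine or configuration format: `Event`, `Rule`, `evaluate`, and `notify` are hypothetical names, and the "notification" is simply appended to an in-memory list instead of being sent to Slack or email.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Event:
    component: str  # hypothetical component key
    kind: str       # hypothetical event kind, e.g. "run_failed"


@dataclass
class Rule:
    trigger: str                        # event kind that wakes the rule
    condition: Callable[[Event], bool]  # extra check on the event
    action: Callable[[Event], None]     # what to do when the rule fires


def evaluate(rules, event):
    """Run every rule whose trigger and condition match; return how many fired."""
    fired = 0
    for rule in rules:
        if rule.trigger == event.kind and rule.condition(event):
            rule.action(event)
            fired += 1
    return fired


outbox = []  # stand-in for real notification channels


def notify(channel):
    """Build an action that records a notification for the given channel."""
    def send(event):
        outbox.append((channel, event.component, event.kind))
    return send


rules = [
    # Any failed run goes to the (assumed) Slack channel.
    Rule("run_failed", lambda e: True, notify("slack")),
    # Late datasets are routed to email only for sales components.
    Rule("dataset_late", lambda e: e.component.startswith("sales_"), notify("email")),
]

fired = evaluate(rules, Event("nightly_load", "run_failed"))
```

Keeping the trigger, condition, and action separate means the same action (for example, a Slack notification) can be reused across rules that watch for different events.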

"Within 5 minutes, we started seeing events flow into the system."

— Director of Data Engineering, Large Online Store