In Gartner’s recent write-up for technical professionals, “2020 Planning Guide for Data Management,” the authors highlight 10 critical trends for 2020. The most exciting one for us at DataKitchen is #10, DataOps.

We are most excited that Gartner is advocating the need for a DataOps Platform: “Select a DataOps platform to integrate functions that support rapid deployment and governance.” As a provider of a DataOps platform, we are biased, but could not agree more!

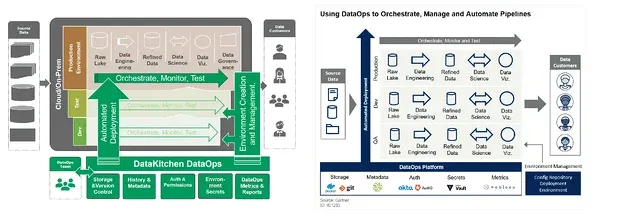

For the first time, Gartner describes the architectural components of a DataOps Platform. You can learn more by following this LinkedIn post and reading the 2020 Planning Guide for Data Management.

The document expresses ideas similar to those we have written about in our blog and white papers. I want to highlight a few key points and use them as jumping-off points to our writings and to the features we have built into our product.

- “…, test, monitor, … and alert for failure … Detect data drift automatically”
  - Check out what we have written about error reduction and quality.
  - Testing and monitoring in production, plus functional and regression testing in development, are central to our platform.

- “Capture metrics and reports at the right granularity”
  - We have written extensively on the need for process analytics.
  - Our product captures all those metrics in reports.

- “Provide Version Control”
- “Manage … metadata … and activity logs”
  - Keeping data about data is central to moving fast.
  - We built that into our product as Orders.

- “Automate deployment of the code, frameworks, libraries and tools”
  - We are big fans of this idea. To see how it compares to CI/CD in software, read our most popular blog post, “DataOps is Not Just DevOps for Data.”
  - Our product brings all these things together in Recipes.

- “Create and maintain the environment …”
  - We think the environments that teams work in are central to collaboration and coordination.
  - Kitchens are a considerable part of our product. We are DataKitchen, remember?

- “DataOps can span the entire gamut from data ingestion all the way to data delivery. This is a complex chain of components.”
  - We agree. We have described these components in our “Seven Steps” blogs.
  - You can see this in our product’s graphs, nodes, and data sources/sinks.

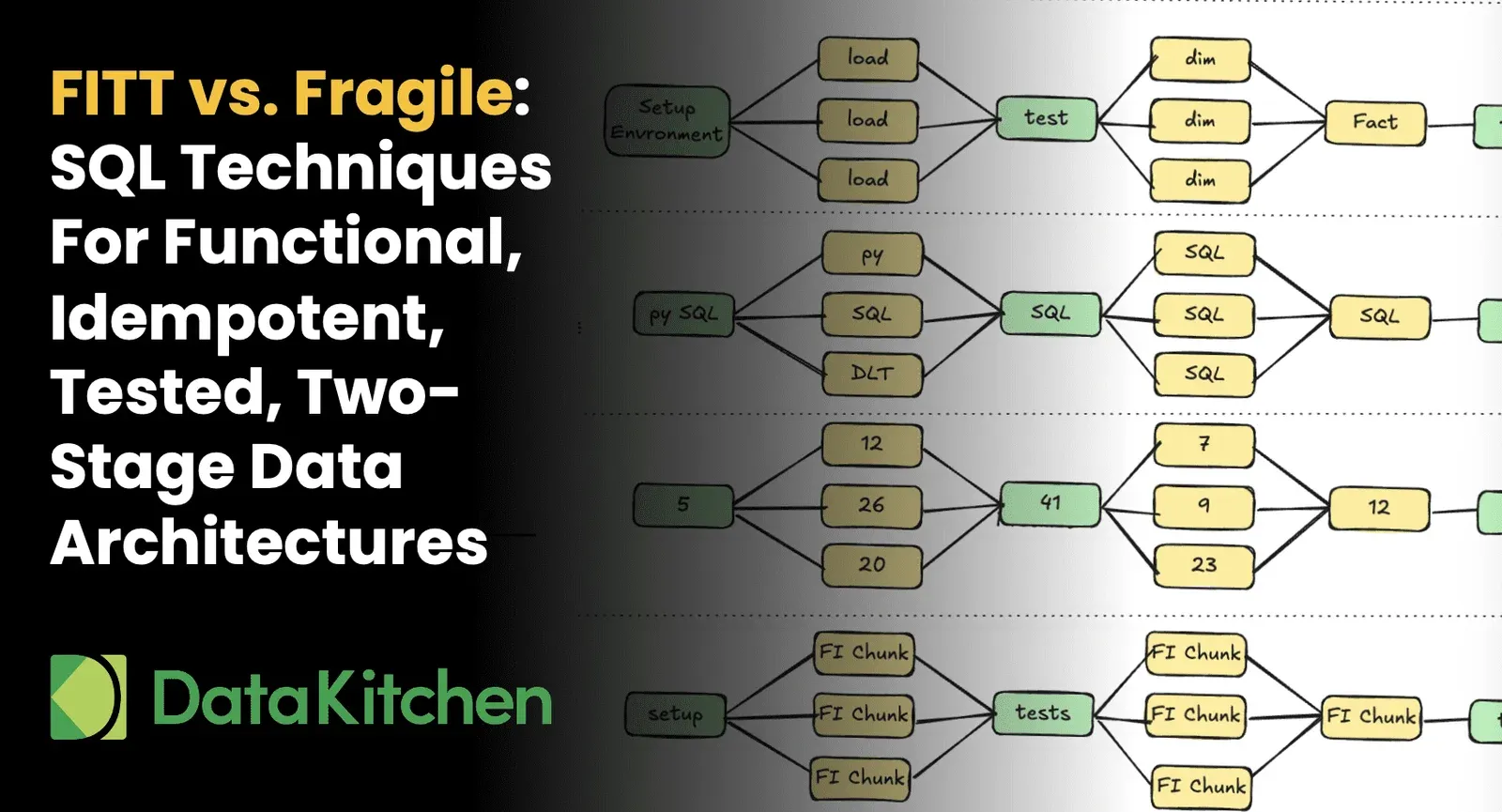

We are very proud that one of our articles on DataOps Architecture has had some influence on Gartner. Check out the similarities (one small nit: Gartner mixed up the order of the Dev and QA environments).

Finally, we do have a bone to pick with the authors at Gartner. They said: _“And because this is a nascent process, there are no end-to-end tools or products that provide an all-encompassing solution for DataOps.”_

WE DISAGREE. We have built an end-to-end product for DataOps here at DataKitchen!