How This Research Started (And Why We’re Telling You)

DataKitchen co-founder Gil Benghiat recently posted a question to r/dataengineering — one of the largest and most active data engineering communities on the internet. The question was genuine: “What is actually stopping teams from writing more data tests?”

The problem? He didn’t disclose who he was. Within hours, a lead data engineer named SearchAtlantis spotted that just one week earlier, Gil had posted in a separate thread and explicitly said: “full disclosure, I co-founded DataKitchen and we built TestGen for exactly this problem.” The callout got 102 upvotes. The replies were swift and merciless. “His picture tells a thousand words,” wrote another commenter. The community, as it tends to do, did not let it slide.

To Gil’s credit, he acknowledged it directly: “That is fair. I co-founded DataKitchen and should have said so here. The question is still genuine, though.”

And it was genuine. The thread went on to generate over 60 thoughtful comments from working data engineers, and the insights that emerged are some of the most candid and practically useful we’ve seen on the topic. We’re sharing them here — with full transparency about their source.

Lesson learned. On to the findings.

What’s Actually Stopping Teams From Writing Data Tests?

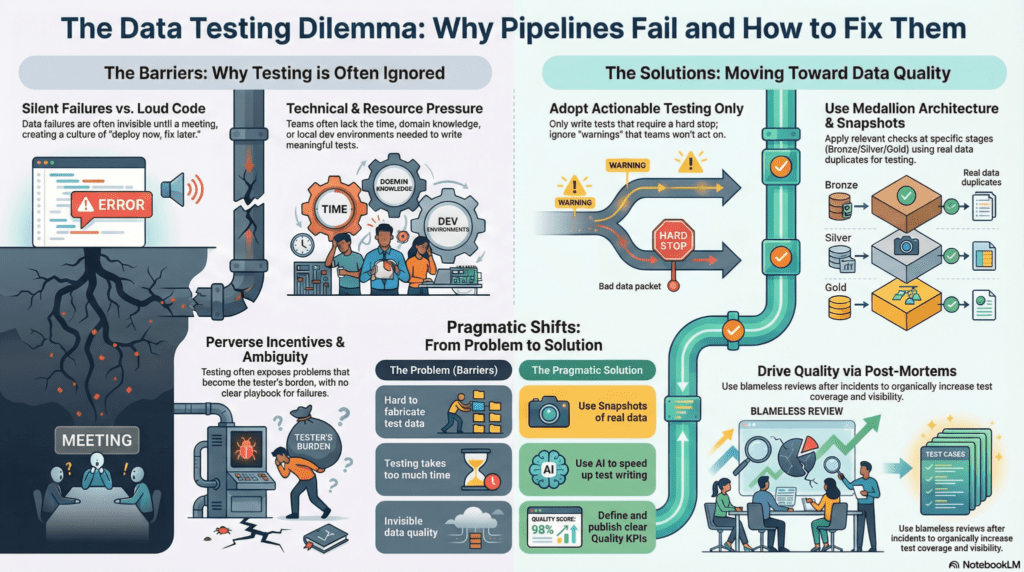

1. Writing Good Tests Is Genuinely Hard

The most upvoted response in the entire thread cut straight to the heart of it: writing tests that catch real bugs without being noisy is just difficult. This isn’t false modesty — it’s a real skill gap that takes time and painful experience to develop.

The problem isn’t the mechanics of writing a test. It’s knowing which tests are worth writing. Teams that skip this step and add tests just to “check a box” often end up worse off: tests that fire on irrelevant conditions, break jobs for illegitimate reasons, and eventually get deleted. Bad tests erode trust in the whole testing practice. Once engineers stop believing their alerts mean something, the entire system collapses.

One engineer described the downstream effect plainly: “I tried implementing a basic testing practice at my workplace… my manager insisted we need some version of unit testing on every table too as part of the policy. Just adding tests for the sake of claiming we test has been unnecessary extra work… then when those tests eventually fail and break a job it turns out we actually don’t care or the dev never verified the rule was legitimate in the first place.”

The compounding problem: DAG timing makes this worse. When a pipeline has multiple branches refreshing on different cadences, a test that compares them can fail simply because one branch hasn’t caught up yet, and false positives multiply. Engineers start ignoring alerts. And once alerts get ignored, you’ve lost the whole point.
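One common way to keep timing from generating false alarms is to gate any cross-branch comparison on freshness: the check runs only after every upstream branch it depends on has refreshed for the current period. The following is a minimal sketch in Python; the `ready_to_compare` helper and the `last_refreshed` mapping are hypothetical, and in practice the refresh times would come from your orchestrator or warehouse metadata.

```python
from datetime import datetime

def ready_to_compare(last_refreshed: dict[str, datetime],
                     upstreams: list[str],
                     period_start: datetime) -> bool:
    """Return True only if every upstream branch has refreshed since period_start.

    Running a cross-branch test before this returns True tends to
    produce failures that reflect timing, not bad data.
    """
    return all(
        name in last_refreshed and last_refreshed[name] >= period_start
        for name in upstreams
    )

# Hypothetical example: "payments" has not refreshed yet for May 1.
period = datetime(2024, 5, 1)
refreshed = {
    "orders": datetime(2024, 5, 1, 2, 0),
    "payments": datetime(2024, 4, 30, 23, 0),
}
if not ready_to_compare(refreshed, ["orders", "payments"], period):
    print("skip orders-vs-payments check until both branches refresh")
```

Deferring the check is deliberate: a deferred check costs little, while a timing-driven false alarm costs trust.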

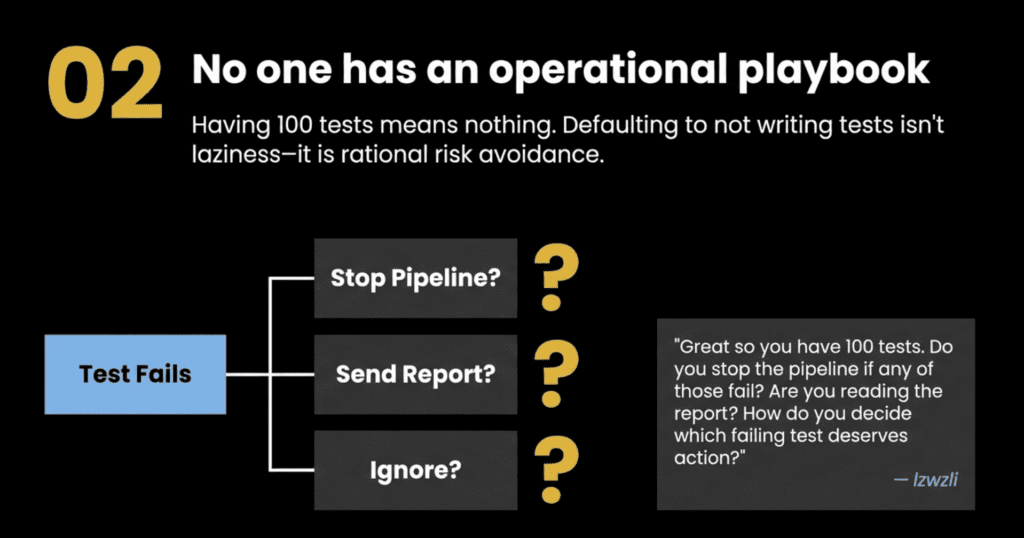

2. No One Has Thought Through What Happens When a Test Fails

One of the most insightful comments in the thread reframed the entire problem: “Writing tests isn’t the hard part. It’s deciding the operational playbook for each of the tests that gets tricky.”

The commenter laid out the question chain that most teams never work through:

- You have 100 tests. Which ones stop the pipeline when they fail?

- For those that don’t stop the pipeline, what do you do with the results?

- Is anyone actually reading the report sent for every pipeline run?

- How do you decide which non-critical failing test deserves action?

Without answers to these questions, tests become noise. And in the absence of a clear playbook, teams default to not writing them at all. It’s not laziness — it’s rational risk avoidance.

Gil’s own response in the thread is worth noting here: “Not all tests are equal. Some should stop the pipeline, some should be warnings that are investigated later or documented as release notes for data consumers, and others are more metric tracking than tests — a way for the data engineer to stay calibrated on how the data behaves. If a test is constantly failing and nobody acts on it, then remove it.”
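Gil’s three tiers translate into a small triage step at the end of a test run: blocking failures halt the pipeline, warnings are collected for someone to review, and metrics are recorded without a pass/fail judgment. The sketch below is a hypothetical illustration in Python, not TestGen’s API; the `Severity` tiers, the `CheckResult` shape, and the `triage` function are assumptions.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

class Severity(Enum):
    BLOCK = "block"    # failure stops the pipeline
    WARN = "warn"      # failure is recorded for later investigation
    METRIC = "metric"  # value is tracked for calibration and never "fails"

@dataclass
class CheckResult:
    name: str
    severity: Severity
    passed: bool
    value: Optional[float] = None

class PipelineHalted(Exception):
    """Raised when any blocking check fails."""

def triage(results: list[CheckResult]) -> tuple[list[str], dict[str, float]]:
    """Halt on blocking failures; otherwise return failed warnings and tracked metrics."""
    blocking = [r.name for r in results
                if r.severity is Severity.BLOCK and not r.passed]
    if blocking:
        raise PipelineHalted(f"blocking checks failed: {blocking}")
    warnings = [r.name for r in results
                if r.severity is Severity.WARN and not r.passed]
    metrics = {r.name: r.value for r in results
               if r.severity is Severity.METRIC and r.value is not None}
    return warnings, metrics

warnings, metrics = triage([
    CheckResult("orders_not_empty", Severity.BLOCK, passed=True),
    CheckResult("email_null_rate", Severity.WARN, passed=False),
    CheckResult("avg_order_value", Severity.METRIC, passed=True, value=42.5),
])
print(warnings)  # ['email_null_rate']
print(metrics)   # {'avg_order_value': 42.5}
```

Keeping metrics out of the pass/fail path is the point of the design: they inform calibration without adding alerts, and a warning that keeps appearing with no one acting on it is, per Gil’s advice, a candidate for removal.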

3. Feature Delivery Always Wins

At least four separate commenters called this out directly, and it’s probably the most universal barrier on the list. During a sprint, tests lose. Every time.

The dynamic is simple: delivery has a deadline, stakeholders, and visibility. Testing has none of those things — until something breaks in production and suddenly it has all three.

One senior data engineer summed it up bluntly: “From what I’ve seen, it is more of that last one. No one cares, there’s too much work and too little time to do it in. Just deploy and if there are bugs, take them up in subsequent sprints.”

This isn’t just an individual failure. It’s an organizational one. When teams are too small for the workload, testing is the first thing to get cut. And data testing gets cut silently, without anyone explicitly making that decision.

4. Tests Rot — And Nobody Budgets Time to Maintain Them

Even teams that invest in testing often find that investment decays. Upstream schemas change. Business logic shifts. A vendor updates their API without warning. Tests that were accurate six months ago now fire constantly — or, worse, silently pass bad data because the world has changed around them. One commenter described it well: “a lot of teams don’t have stable expectations, schemas and upstream logic shift, so tests rot fast unless you budget time to maintain them.”

Maintenance rarely gets prioritized. It doesn’t appear on the roadmap, doesn’t get sprint points, and doesn’t have a deadline. So tests slowly rot, confidence erodes, and eventually someone deletes the whole suite and starts over — or doesn’t bother starting over at all.

One commenter captured the fatigue well: teams that have been burned by upstream changes enough times learn to write schema validation and bail early rather than invest in elaborate fixtures, because fixtures require their own maintenance, which requires its own tests — a matryoshka doll of testing infrastructure that most teams don’t have the bandwidth to sustain.
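The “validate the schema and bail early” approach can be very small: compare the columns and types a pipeline expects with what actually arrived, and stop before any transformation runs. A minimal sketch, assuming the actual schema has already been read from the warehouse’s metadata into a plain dictionary; `EXPECTED_SCHEMA`, `SchemaDrift`, and `validate_schema` are hypothetical names.

```python
# Hypothetical contract for an upstream "orders" feed: column -> type.
EXPECTED_SCHEMA = {
    "order_id": "bigint",
    "customer_id": "bigint",
    "order_total": "numeric",
}

class SchemaDrift(Exception):
    """Raised when an upstream table no longer matches the expected schema."""

def validate_schema(actual: dict[str, str],
                    expected: dict[str, str] = EXPECTED_SCHEMA) -> None:
    """Bail early if expected columns are missing or have changed type.

    New, unexpected columns are tolerated, since additive changes
    rarely break downstream logic.
    """
    missing = sorted(expected.keys() - actual.keys())
    retyped = sorted(col for col in expected.keys() & actual.keys()
                     if expected[col] != actual[col])
    if missing or retyped:
        raise SchemaDrift(f"missing={missing}, type_changed={retyped}")
```

Tolerating added columns is a design choice that keeps the check from rotting on harmless upstream additions; a stricter contract would also reject unexpected columns.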

5. You Don’t Know What to Test Until It’s Already Broken

Data testing knowledge is largely reactive. Most engineers learn what to test by getting burned — a pipeline that produced zero rows, a join that silently dropped 40% of records, a schema change from upstream that nobody announced.

Until you’ve lived through a few of those, it’s hard to know where the landmines are. And without that experiential knowledge, tests tend to be shallow — row counts, maybe nulls — and miss the things that actually matter.
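Some of these failures are cheap to test once you know to look for them. A join that silently drops records, for instance, shows up as a gap between row counts before and after the join, and a zero-row input can be caught before the join runs at all. A minimal sketch with a hypothetical `check_join_retention` helper and an assumed 99% retention floor:

```python
def check_join_retention(rows_before: int, rows_after: int,
                         min_retention: float = 0.99) -> float:
    """Raise if the input is empty or a join kept too few rows; return retention.

    Assumes every input row is expected to find a match, as with an
    inner join onto a dimension table that should cover all keys.
    """
    if rows_before == 0:
        raise ValueError("input produced zero rows")
    retention = rows_after / rows_before
    if retention < min_retention:
        raise ValueError(
            f"join kept {retention:.1%} of rows; expected at least {min_retention:.0%}"
        )
    return retention

# A join that drops 40% of records fails loudly instead of passing silently.
try:
    check_join_retention(rows_before=1_000_000, rows_after=600_000)
except ValueError as err:
    print(err)  # join kept 60.0% of rows; expected at least 99%
```

The threshold is where domain knowledge comes in: only someone who knows the data can say whether 99% retention is normal or a sign of trouble.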

One commenter put it plainly: “Lack of experience. Those of us who have been burned like you were with that four-hour pipeline that did nothing write data tests.” Another echoed it: “You don’t realize you need them until you’ve scrambled to get shit fixed cuz things are broken and the heat is on and it’s embarrassing. That’s when you realize oh wow it doesn’t have to be like this.”

The deeper version of this problem is domain knowledge. One commenter observed that they’d seen teams with hundreds of tests still miss “revenue went to 0” because nobody on the data team understood the business well enough to recognize that it was a possible failure mode worth testing for. As Gil put it in response: “Many data teams just move data and don’t understand the business.” Technical competence and business context are both required, and the latter is often missing.

6. Nobody Senior Enough Cares — Until It’s Too Late

Code breaks loudly. A build fails, a deploy errors out, and an exception gets thrown. Data failures are different. A pipeline that produces garbage rows completes successfully. The job turns green. No alert fires. The wrong numbers sit quietly in a dashboard until someone notices them in a meeting — usually the wrong meeting, at the wrong time, in front of the wrong people.

One commenter nailed the asymmetry: “Code breaks loudly. A pipeline that silently produces garbage? That one sneaks through.”

Because data failures are invisible until they’re embarrassing, there’s rarely organizational pressure to prevent them in advance. Leadership doesn’t feel the cost of missing tests until a crisis makes it undeniable. And by then, the damage is done.

7. Catching the Error Makes It Your Problem

This one is uncomfortable but widely recognized. One commenter articulated it clearly: “If you caught an error, it turns into your problem. If you delivered wrong data where the root cause is not the pipeline, it is not your problem.”

The incentive structure in many organizations actively punishes engineers who look too hard. Finding a problem means owning the investigation, the fix, the communication to stakeholders, and the post-mortem. Not finding it means the problem stays with whoever owns the upstream source.

Rational engineers in irrational systems respond accordingly.

Gil’s take: the only fix is leadership that actively rewards people who surface problems rather than penalizing them for finding them. This is ultimately a culture-and-values issue, not a tooling one.

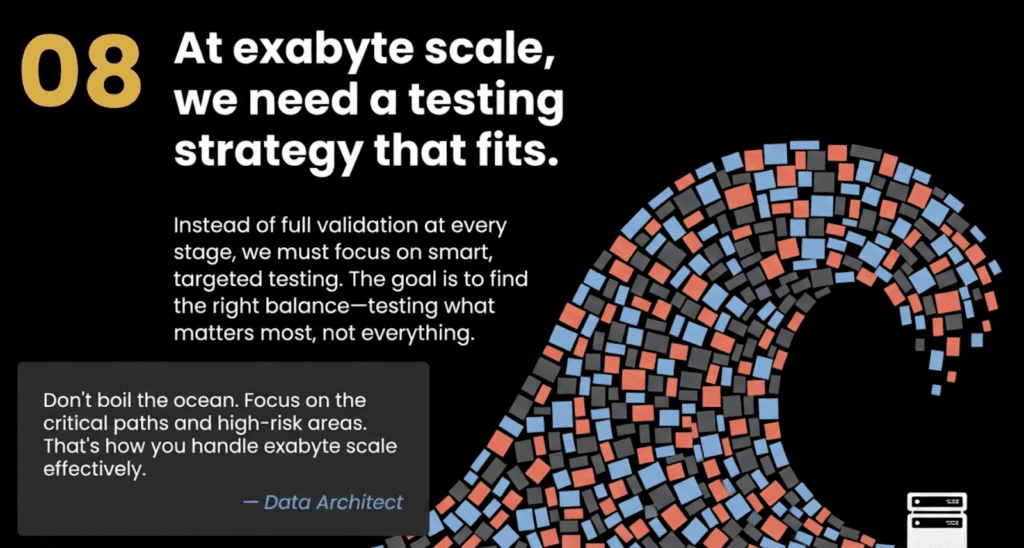

8. At Scale, Comprehensive Testing Isn’t Viable

One commenter described processing close to an exabyte of data per day. At that volume, full validation at every stage is too slow, too expensive, and practically impossible. Their solution — sampling, anomaly detection, and back-testing on pipeline deployments — is the pragmatic answer to a genuine constraint. As they put it: “At our volume something that is 1 in a billion will happen several times a day, so if it can happen, it will happen — plan for the possibility.”

This is worth naming clearly: for some teams, the barrier to comprehensive testing isn’t skill or culture. It’s physics and economics. The right answer at hyperscale looks very different from the right answer at a mid-market company running 50 pipelines.

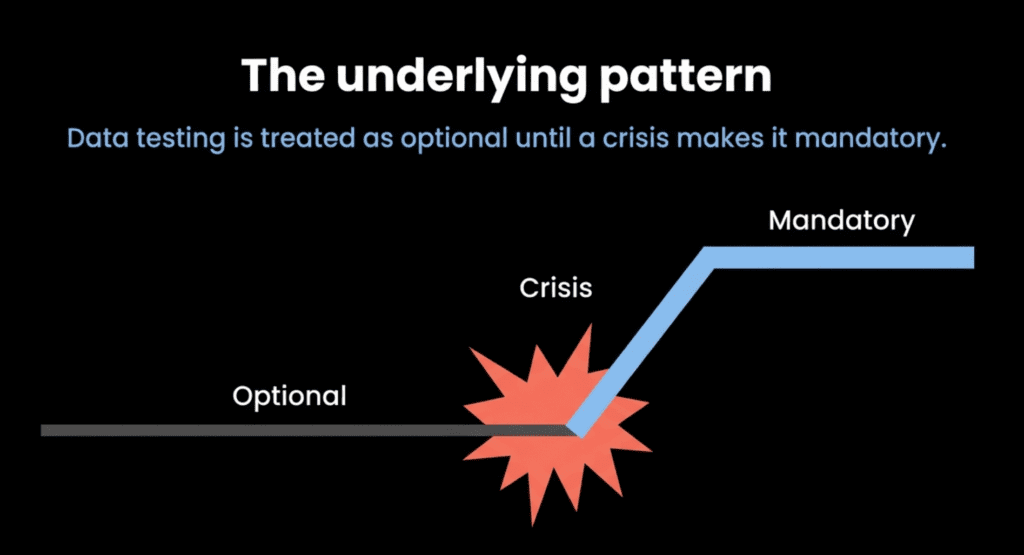

The Pattern Underneath All of It

Across every comment in the thread, one pattern emerges consistently: data testing is treated as optional until a crisis makes it mandatory.

Most teams build test coverage reactively — one incident at a time — rather than proactively as a standard engineering discipline. The barriers are a mix of technical difficulty, organizational culture, misaligned incentives, and resource constraints. Tooling is rarely the limiting factor.

Gil’s own closing summary of the thread named six core impediments:

- Test noise — false positives erode trust in the entire suite

- Time pressure — feature delivery consistently wins over quality investment. No one has time to write tests.

- Scale — at high volume, writing and maintaining comprehensive testing becomes impractical

- Test rot — upstream changes break tests, and maintenance is never prioritized

- Perverse incentive — catching errors makes them your problem

- Domain knowledge gaps — without business context, it’s hard to know which tests actually matter

These aren’t problems that disappear when you hand a team a testing tool. They require investment in process, culture, and clear operational norms around what testing means and what happens when it fails.

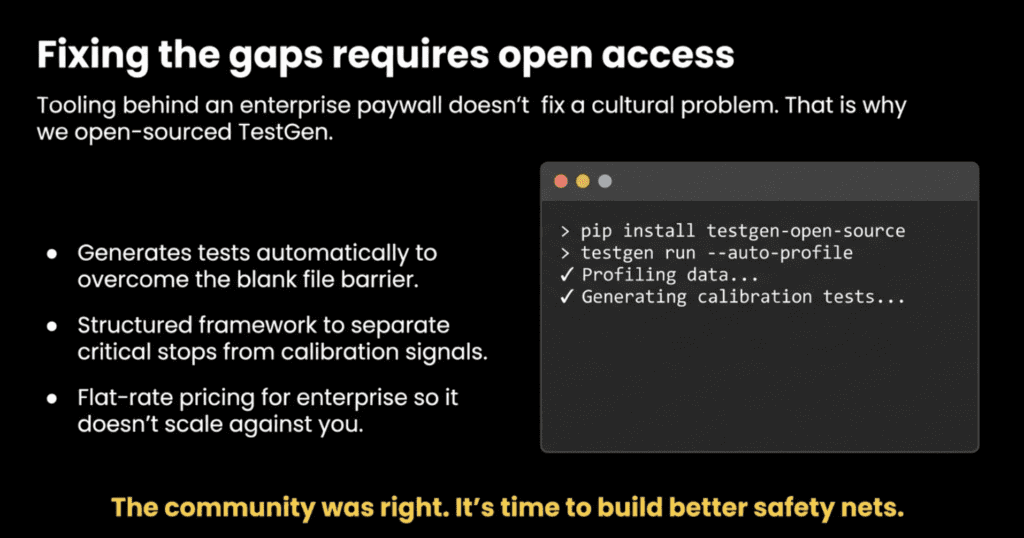

We’re Data Engineers Too — This Is Why We Open-Sourced TestGen

If you’ve read this far, you’ve probably recognized your own team in at least a few of these barriers. We did too — and it’s exactly why we decided to open source a full-featured version of TestGen.

The most common reasons teams don’t test aren’t technical. They’re about time, trust, incentives, and not knowing where to start. A tool sitting behind an enterprise paywall doesn’t fix any of that. Giving engineers direct, no-commitment access to a production-ready testing tool — one that profiles your data and generates tests automatically, so you’re not starting from a blank file — actually does.

TestGen is free to download, free to use, and built to address the specific gaps this community named: it reduces the expertise barrier for knowing what to test, cuts the time cost of writing tests from scratch, and gives teams a structured framework for deciding which tests are critical, which are warnings, and which are just calibration signals. We’ve been doing data engineering for a long time and have run into every issue listed in this article. We built TestGen as the free antidote.

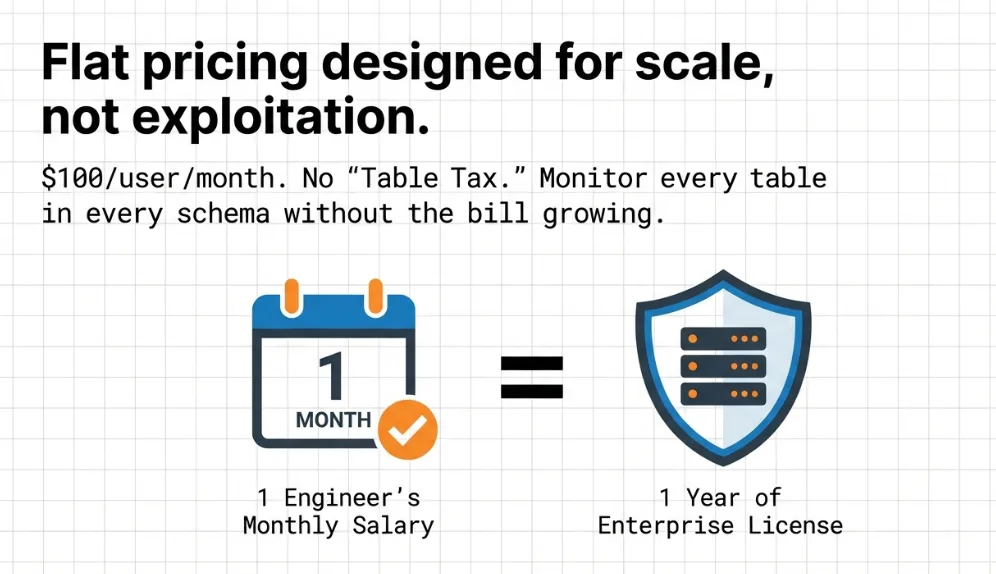

For teams that want to go further — shared test suites, collaboration across engineers, and enterprise support — our flat-rate pricing means you’re not getting hit with per-user or per-usage fees that scale against you as your team grows. One predictable number, regardless of how many tables, pipelines, or tests are involved.

Get started with open source TestGen →

Because the community was right: the problem is real, it’s widespread, and it’s been undersolved for too long.

Source: r/dataengineering thread, “What is actually stopping teams from writing more data tests?” — community responses plus OP follow-up from DataKitchen co-founder Gil Benghiat.

Frequently Asked Questions (TL;DR)

What is the summary of this article?

Data engineers know they should test more, but a candid Reddit thread reveals the real reasons they don’t. The barriers are less about tooling and more about human and organizational dynamics: good tests are hard to write without getting burned first, there’s no clear playbook for what to do when a test fails, and feature delivery always wins the sprint. Even teams that invest in testing watch their suites decay as upstream schemas shift, and maintenance never gets prioritized. Underneath it all sit a perverse incentive (finding a problem means owning it) and a culture in which data failures remain invisible until they’re embarrassing. These are exactly the barriers DataKitchen built TestGen to address, and they are why DataKitchen open-sourced it.

The Sales-y Stuff: How TestGen Answers Each Barrier to Data Testing

The r/dataengineering community named eight specific reasons data engineers don’t write more tests. Here is how TestGen was built to address every one of them.

TestGen Fixes Barrier 1: Writing Good Tests Is Genuinely Hard

The community’s top-voted concern was that writing tests that catch real bugs without generating noise requires deep expertise that most teams simply haven’t had time to develop. TestGen removes this barrier at the source through what it calls one-button data quality checks: point TestGen at your data, and it generates tests automatically without requiring deep knowledge of the underlying dataset, custom coding, or sprawling YAML configuration.

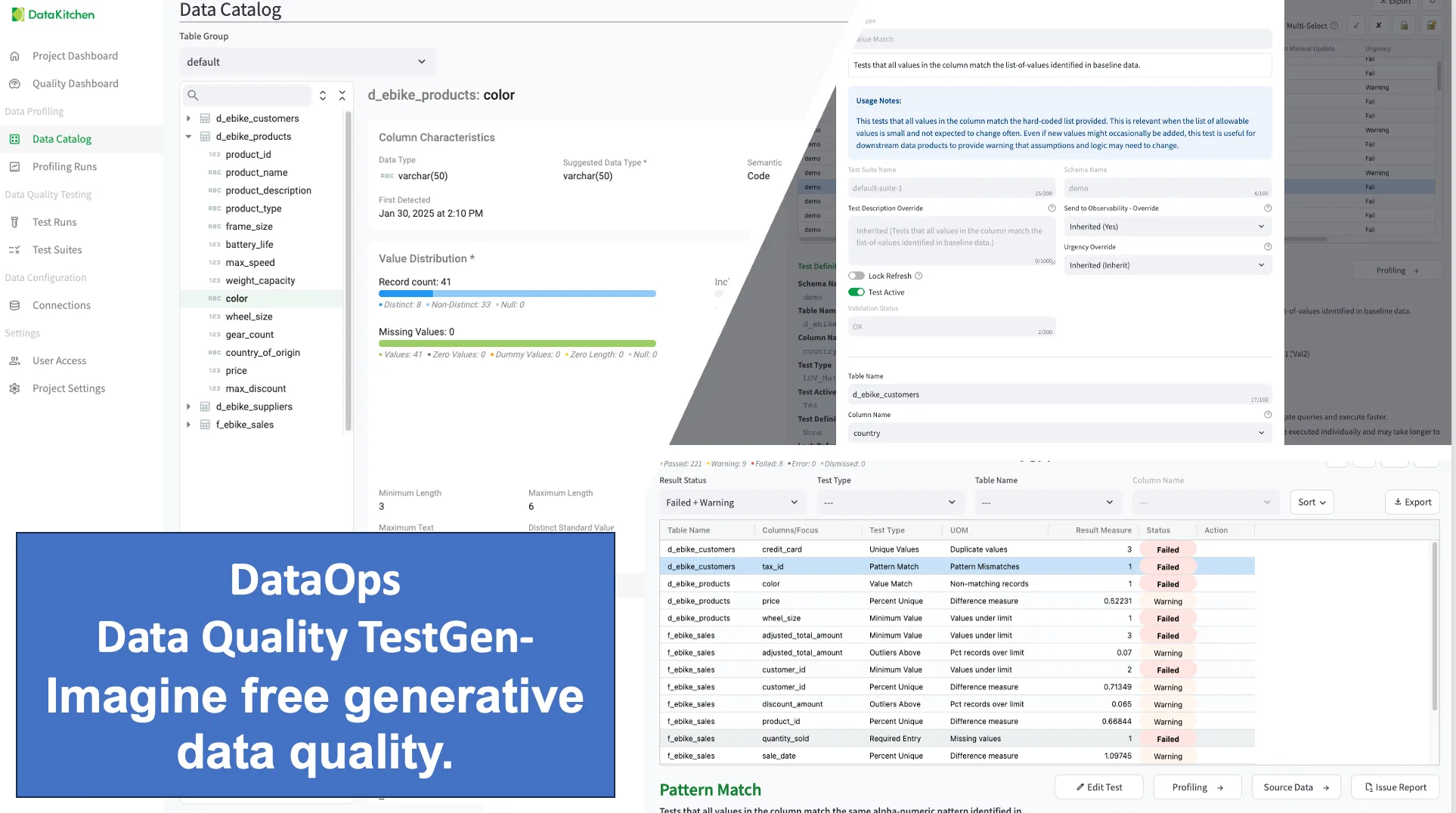

The mechanism behind this is data profiling. TestGen performs a column-level X-ray of every table, gathering extensive information about what each column actually contains — averages, min and max values, unique value counts, date characteristics, percentiles, data types, and more. Based on those profiling results, the algorithm generates over 120 AI-driven tests covering data integrity, hygiene, and quality. These include checks for things like alpha truncation, average shift, constant value presence, future dates, distinct value changes, and daily record counts — tests that experienced engineers write after getting burned, but that TestGen generates proactively before a single pipeline runs.
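To make the profile-then-generate idea concrete, here is a deliberately tiny sketch: profile a numeric column, then derive a not-null check and a range check from what the profile saw. It is a hypothetical illustration, not TestGen’s generation logic; the statistics used, the `profile_column` and `derive_checks` functions, and the 10% widening margin are all assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence

Values = Sequence[Optional[float]]

@dataclass
class ColumnProfile:
    name: str
    null_count: int
    min_value: float
    max_value: float

def profile_column(name: str, values: Values) -> ColumnProfile:
    """Gather a tiny subset of the statistics a real profiler would collect."""
    present = [v for v in values if v is not None]
    if not present:
        raise ValueError(f"{name}: no non-null values to profile")
    return ColumnProfile(name, len(values) - len(present), min(present), max(present))

def derive_checks(p: ColumnProfile, margin: float = 0.1) -> dict[str, Callable[[Values], bool]]:
    """Turn a profile into named checks to run against future batches."""
    span = p.max_value - p.min_value
    lo, hi = p.min_value - margin * span, p.max_value + margin * span
    checks: dict[str, Callable[[Values], bool]] = {}
    if p.null_count == 0:
        # Fully populated when profiled, so expect it to stay that way.
        checks[f"{p.name}_not_null"] = lambda vs: all(v is not None for v in vs)
    # Allow some headroom beyond the observed range before flagging.
    checks[f"{p.name}_in_range"] = lambda vs: all(v is None or lo <= v <= hi for v in vs)
    return checks

checks = derive_checks(profile_column("order_total", [10.0, 25.0, 40.0]))
new_batch = [12.0, None, 500.0]
print({name: check(new_batch) for name, check in checks.items()})
# {'order_total_not_null': False, 'order_total_in_range': False}
```

Re-running the same two steps on fresh data is a small-scale version of the re-profiling and regeneration practice described under Barrier 4.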

Underpinning all of this is a semantic data model that captures the meaning and relationships of your data assets, not just their structure. This means TestGen doesn’t just know that a column exists — it understands what the column represents, which allows generated tests to be meaningfully calibrated to the data’s actual purpose rather than its raw technical characteristics alone.

The explicit design goal is to cast a wide net for problems that can’t be predicted by targeted testing alone. TestGen describes it as the equivalent of setting up a burglar alarm by covering all possible entrances rather than guessing which window someone will try.

TestGen Helps Barrier 2: No One Has a Playbook for When Tests Fail

The thread’s second most-discussed barrier was operational: teams don’t know which failures should stop a pipeline, which should trigger a warning, and which are just signals to monitor. Without answers to those questions, writing tests feels premature.

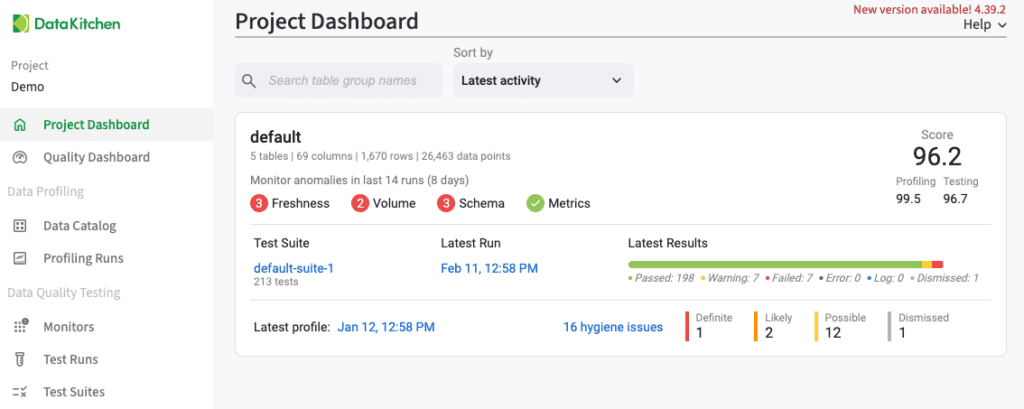

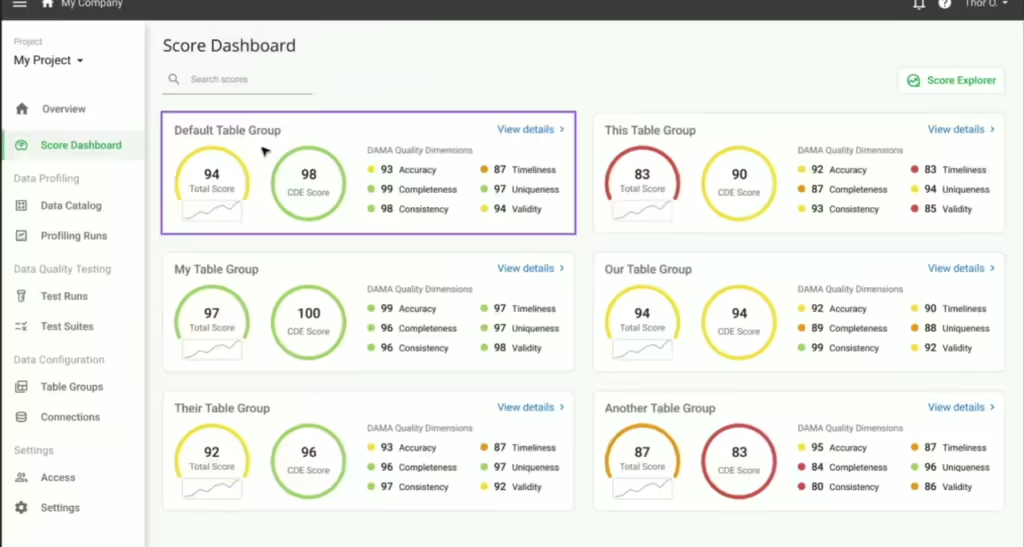

TestGen addresses this structurally with customizable quality scoring and dashboards. Every test result feeds into automated scorecards that can be configured by DAMA data quality categories, by Critical Data Element designation, by a specific data science model, or by current business goals. Teams can drill down from a high-level score into the specific tables and columns driving quality issues, which turns a wall of test results into a ranked, prioritized view of what actually needs attention.

Critically, this isn’t just a tool for engineers. Because the open-source version ships with a full UI out of the box, business partners and data consumers can participate directly in testing alongside the engineers who build the pipelines. Tests can be reviewed, annotated, and managed collaboratively without anyone needing to touch the command line. Business rules can be configured and shared with non-technical stakeholders rather than requiring a database programmer to translate requirements into SQL. This shared interface is what makes it possible to align on which test failures are critical, which are warnings, and which are simply signals to monitor over time — turning what is usually a solo engineering judgment call into a team decision.

On top of that, TestGen’s shareable issue reports let engineers drive action on data quality problems with a single click, pushing findings to the people who need to act on them rather than leaving results buried in a dashboard nobody opens.

TestGen Helps Barrier 3: Feature Delivery Always Wins

Time is the most universal barrier in the thread. Testing loses to delivery every sprint because manual test authorship is genuinely time-consuming — weeks to cover a fraction of the tables, as TestGen’s own positioning acknowledges.

TestGen’s answer is speed through automation. Because test generation flows directly from profiling, an engineer can go from connecting a database to running thousands of tests covering every table and column in under an hour, without writing a single test by hand. Coverage that typically takes data teams weeks to build manually, and that still reaches only a fraction of their tables, TestGen delivers in a single session. TestGen also runs those tests with blazing-fast in-database execution, pushing queries directly into the data warehouse rather than extracting data first, so the performance cost of running tests stays low enough that it doesn’t become another argument against doing it. The time investment drops far enough that testing no longer competes with delivery; it happens alongside it.

TestGen Fixes Barrier 4: Tests Rot When Upstream Data Changes

Even teams that invest in testing watch their suites decay as upstream schemas shift. Tests written against yesterday’s data structure start firing on irrelevant conditions or silently passing on newly invalid data.

TestGen’s anomaly detection is built specifically to catch this. It continuously monitors for schema changes, data drift, freshness degradation, and volume anomalies, generating automated alerts when something deviates from expected behavior.

But the more important answer to test rot is a practice TestGen is designed to support directly: regular re-profiling and test regeneration. Rather than treating a test suite as a one-time artifact that slowly decays, TestGen encourages teams to re-run profiling on a scheduled basis and regenerate tests from the updated profile. When upstream data changes, the new profile reflects those changes, and the regenerated tests are calibrated to what the data actually looks like now rather than what it looked like six months ago. This turns test maintenance from a manual, easily skipped chore into a repeatable, automated part of the data pipeline lifecycle.

The data catalog adds another layer: it provides a 360-degree view of every table’s metadata, profile results, hygiene issues, and test results, so engineers can see when the ground has shifted rather than discovering it after a silent failure.

TestGen Fixes Barrier 5: You Don’t Know What to Test Until It’s Already Broken

Most data testing knowledge is reactive. Engineers add tests after failures, which means many tests exist only because a production incident came first.

TestGen’s dataset screening feature addresses this directly. Before production testing begins, TestGen performs an initial assessment of new data sources, running 27 data hygiene detector tests that automatically identify structural problems: invalid zip code formats, leading spaces, multiple data types in a single column name, non-standard blank values, columns with no values, and more. These are the kinds of issues that cause silent failures downstream but are invisible until something breaks. TestGen surfaces them upfront, during the screening phase, before data moves further into the pipeline.
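Several of the hygiene issues named above are simple to express directly. The sketch below flags leading whitespace, placeholder blanks such as “N/A”, and columns with no real values at all. It is a hypothetical illustration, not TestGen’s detector logic, and the `PLACEHOLDER_BLANKS` set is an assumption you would tune per source.

```python
from typing import Optional, Sequence

# Assumed set of strings that commonly stand in for "no value".
PLACEHOLDER_BLANKS = {"", "n/a", "na", "null", "none", "-", "?"}

def hygiene_issues(column: str, values: Sequence[Optional[str]]) -> list[str]:
    """Return human-readable hygiene findings for one text column."""
    issues = []
    leading = sum(1 for v in values if v and v != v.lstrip())
    if leading:
        issues.append(f"{column}: {leading} value(s) with leading whitespace")
    placeholders = sum(1 for v in values
                       if v is not None and v.strip().lower() in PLACEHOLDER_BLANKS)
    if placeholders:
        issues.append(f"{column}: {placeholders} placeholder blank value(s)")
    real = [v for v in values
            if v is not None and v.strip().lower() not in PLACEHOLDER_BLANKS]
    if not real:
        issues.append(f"{column}: no real values")
    return issues

print(hygiene_issues("zip_code", [" 02134", "N/A", "10001", None]))
# ['zip_code: 1 value(s) with leading whitespace', 'zip_code: 1 placeholder blank value(s)']
```

Checks like these are cheap enough to run on every new source before it enters the pipeline, which is the point of screening up front.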

From that same profiling and screening pass, TestGen automatically generates a full suite of production-ready validation tests — no manual authoring required. The auto-generation doesn’t just create generic checks; it creates tests calibrated to the actual characteristics of each column, using the semantic data model and profiling results to derive thresholds, expected value ranges, and format rules that would take an engineer hours to specify by hand. For teams that have historically relied on experience and incident history to know what to test, this is the most direct possible answer: TestGen builds the institutional knowledge into the generation process itself.

Combined with the 51 column-level profiling characteristics, engineers get a complete picture of what a dataset actually contains before they write a single transformation. The data catalog then surfaces potential PII risks and Critical Data Elements within the same view, thereby partially filling domain knowledge gaps with what the data itself reveals.

TestGen Fixes Barrier 6: Data Failures Are Invisible Until They’re Embarrassing

Code breaks loudly. Pipelines that produce garbage data complete successfully and turn green. The wrong numbers sit quietly in a dashboard until they surface in the wrong meeting.

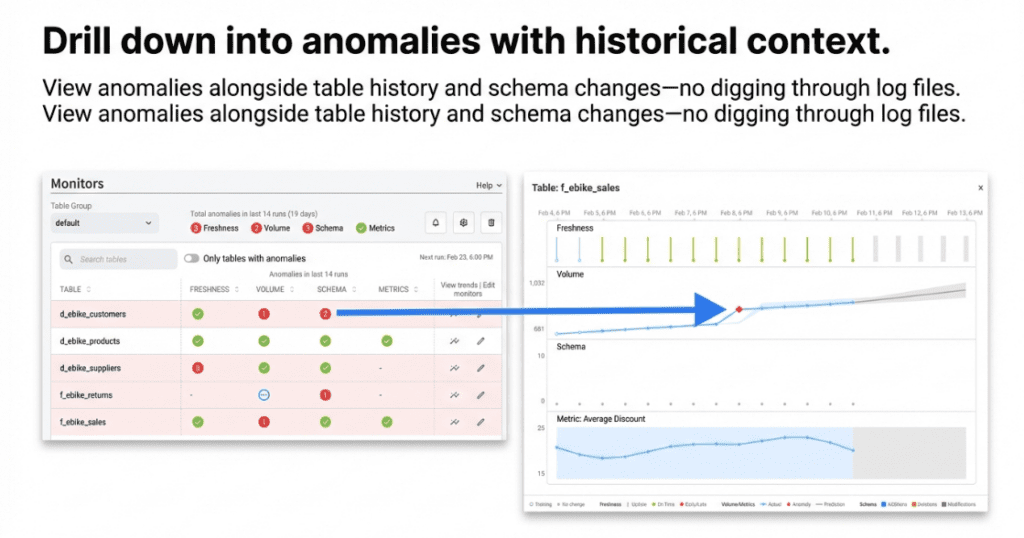

TestGen’s ML-based anomaly detection is the direct answer to this. It continuously monitors data for freshness issues (did the data update when expected?), volume anomalies (is row count trending in the right direction?), schema changes (has something been added, removed, or altered upstream?), and data drift (have the statistical characteristics of key columns shifted?). Crucially, the system is designed to alert without overwhelming — the explicit goal is to get alerted without being bothered by every transient issue, which means the signal stays meaningful rather than becoming noise.
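The basic idea behind a volume check can be shown with a much simpler stand-in than ML: compare today’s row count with recent history using a z-score. This is an assumed technique chosen for illustration, not TestGen’s method; the `volume_anomaly` helper is hypothetical and the threshold of 3 standard deviations is an arbitrary default.

```python
from statistics import mean, stdev
from typing import Sequence

def volume_anomaly(history: Sequence[int], today: int, threshold: float = 3.0) -> bool:
    """Flag today's row count if it sits more than `threshold` standard
    deviations from the mean of recent daily counts."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return today != mu  # perfectly flat history: any change is unusual
    return abs(today - mu) / sigma > threshold

recent = [1000, 1020, 980, 1010, 990]
print(volume_anomaly(recent, 1005))  # False
print(volume_anomaly(recent, 0))     # True: a "successful" load with zero rows
```

A fixed threshold like this is where noise creeps in; data with weekly or month-end patterns generally needs a smarter baseline, which is the gap more sophisticated detection aims to close.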

TestGen’s integration with DataOps Observability extends this visibility into something much more powerful. DataOps Observability is also open source and monitors the entire data journey from source to customer value in a single place — not just the data itself, but every tool that acts on it. Where TestGen catches problems in the data, DataOps Observability catches problems in the pipelines, jobs, and systems surrounding it. Together, they give teams one unified view of both data errors and tool errors, so when something goes wrong, the question of whether the problem is in the data or in the infrastructure that moves it is answered immediately rather than after a multi-hour investigation. Silent failures don’t just become visible — they become localized, contextualized, and actionable within a single shared surface that the whole team can see.

TestGen Helps Barrier 7: Catching the Error Makes It Your Problem

One of the sharpest observations in the thread was that in many organizations, the engineer who finds a problem owns it. That perverse incentive actively discourages engineers from looking too hard.

TestGen changes the accountability dynamic in two concrete ways. First, the shareable issue reports make it possible to share findings with stakeholders and upstream data suppliers with a single click, turning discovery into a collaborative handoff rather than an individual burden. Second — and more fundamentally — the open source UI gives the entire organization, including data stewards, business analysts, and data owners, a shared surface for managing test results together. Rather than test outcomes living in an engineer’s terminal or a private dashboard, TestGen’s UI makes results visible and actionable across roles. Data stewards can review failing tests, annotate them with business context, and confirm whether a failure represents a real quality issue or an expected edge case. Business partners can see quality scores for the datasets they depend on and flag concerns directly rather than waiting for something to break in a report. This shared visibility is what shifts data quality from one engineer’s burden to an organizational practice — and it makes problem discovery a shared accountability rather than a trap for whoever looks hardest.

TestGen Helps Barrier 8: At Scale, Comprehensive Testing Isn’t Viable

For some teams, the barrier is not skill or culture but physics and economics. At hyperscale, exhaustive row-level validation at every stage is too slow and too expensive.

TestGen handles this in two ways. The in-database execution model is central here: rather than pulling data out of the warehouse to test it, TestGen pushes queries directly into the database. This makes testing dramatically faster and more cost-efficient than approaches that require data movement, and it keeps data within the warehouse’s security boundary rather than exposing it to external systems. For environments where even in-database full scans are prohibitive, TestGen supports configurable sampling — teams can set a sample percentage and minimum count per table group, so coverage stays broad without the compute cost of scanning billions of rows on every run.
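The percentage-plus-minimum setting can be read as a simple rule. The sketch below assumes one plausible way the two settings combine (take the larger of the two, capped at the table size); the `sample_size` helper is hypothetical, and TestGen’s exact rule may differ.

```python
import math

def sample_size(table_rows: int, sample_pct: float, min_count: int) -> int:
    """Rows to sample: sample_pct of the table, but never fewer than
    min_count and never more than the table holds."""
    if not 0 < sample_pct <= 100:
        raise ValueError("sample_pct must be in (0, 100]")
    by_pct = math.ceil(table_rows * sample_pct / 100)
    return min(table_rows, max(by_pct, min_count))

print(sample_size(2_000_000_000, sample_pct=0.01, min_count=100_000))  # 200000
print(sample_size(50_000, sample_pct=1, min_count=100_000))            # 50000
```

The resulting count would then typically feed a sampling clause such as SQL’s `TABLESAMPLE`, whose exact syntax varies between warehouses.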

On pricing, the flat-rate model of $100 per month per user and per database connection with unlimited tables means there is no per-table tax that grows as the data estate expands. Teams at scale are not penalized for having more data, which removes the economic argument against expanding coverage.

The Bottom Line

The Reddit community named eight specific reasons data testing doesn’t happen. TestGen was built to dismantle all eight — through one-button automated test generation, in-database execution, ML-based anomaly detection, dataset screening, shareable issue reports, quality scoring, and flat-rate pricing that doesn’t punish scale. It is Apache-2.0-licensed and available as open source, with no feature gates or usage limits. For teams that want shared scorecards, cross-engineer collaboration, and enterprise support, pricing remains predictable regardless of the number of tables, pipelines, or tests involved.