Announcing Actionable, Automated, & Agile Data Quality Scorecards

Unlocking Data Team Success: Are You Process-Centric or Data-Centric?

We want to share our observations about data teams, how they work and think, and their challenges. We’ve identified two distinct types of data teams: process-centric and data-centric. Understanding this framework offers valuable insights into team efficiency, operational excellence, and data quality.

How Data Quality Leaders Can Gain Influence And Avoid The Tragedy of the Commons

Using the ecological idea of the ‘Tragedy Of The Commons’ as a metaphor for the eternal issue of data quality, we talk about how data quality leaders can apply Dale Carnegie’s 100-year-old ideas on influencing people and wrap the improvement process in DataOps iterative improvement.

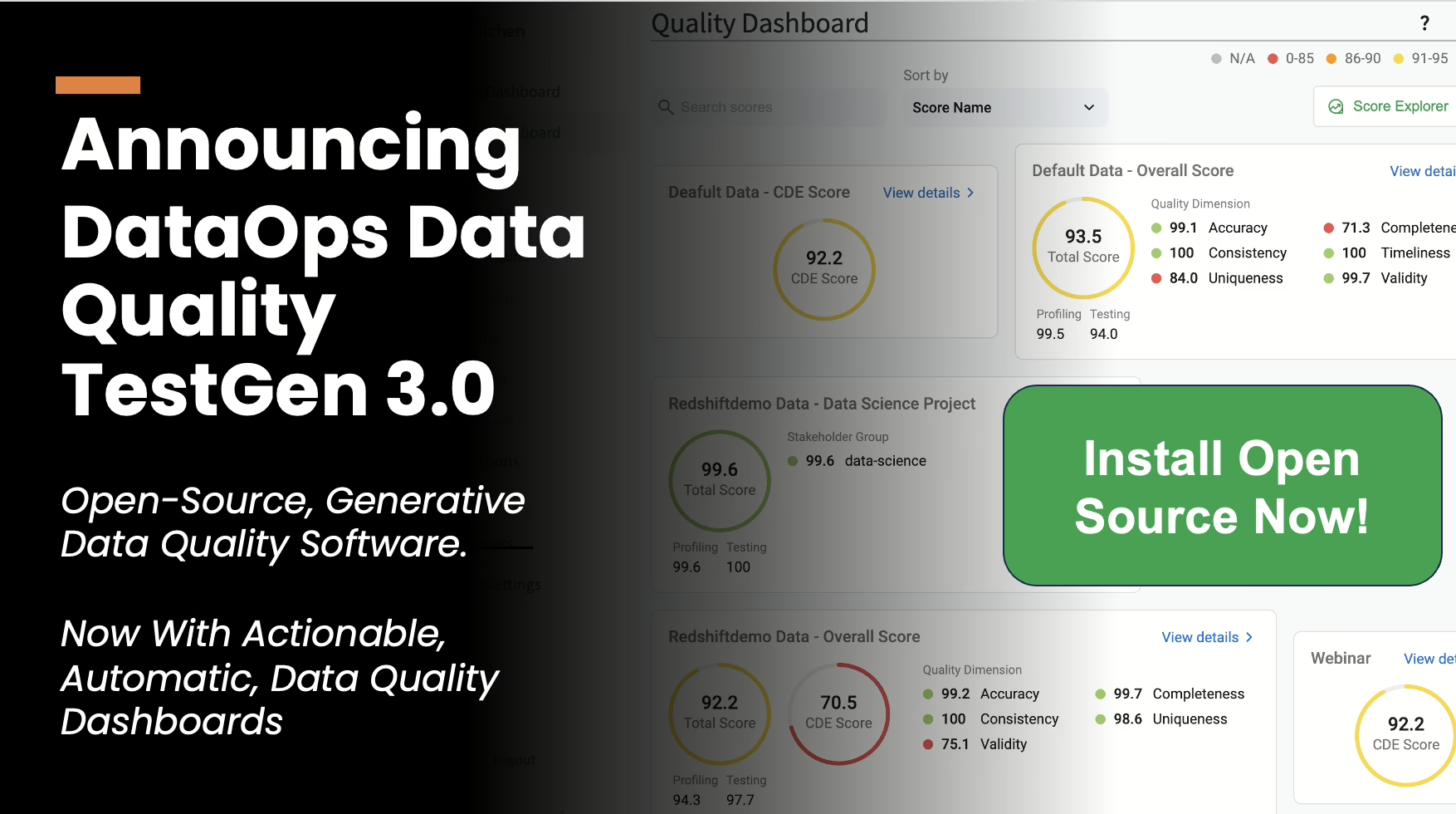

Announcing Open Source DataOps Data Quality TestGen 3.0

Now With Actionable, Automatic Data Quality Dashboards. Learn about DataOps Data Quality TestGen 3.0.

No Python, No SQL Templates, No YAML: Why Your Open Source Data Quality Tool Should Generate 80% Of Your Data Quality Tests Automatically

The reality is that 80% of data quality tests can be generated automatically, eliminating the need for tedious manual coding. Learn how to do it today.

Summary of the Gartner Presentation: “How Can You Leverage Technologies to Solve Data Quality Challenges?”

Summary of the presentation by Melody Chien of Gartner: “How Can You Leverage Technologies to Solve Data Quality Challenges?”

Webinar: Data Quality in a Medallion Architecture – 2024

Would you like help maintaining high-quality data across every layer of your Medallion Architecture?

A Guide to the Six Types of Data Quality Dashboards

Not all data quality dashboards are created equal. Their design and focus vary significantly depending on an organization’s unique goals, challenges, and data landscape. This blog delves into the six distinct types of data quality dashboards, examining how each fulfills a specific role in improving data quality.

The Race For Data Quality in a Medallion Architecture

The Medallion Data Lakehouse Architecture Has A Unique Set Of Data Quality Challenges. Find Out How To Take The Gold In This Tough Data Quality Race!

Webinar: DataOps For Beginners – 2024

If you’ve ever heard (or had) complaints about speed-to-insight or data reliability, you should watch our webinar, DataOps for Beginners, on demand.