In the chaotic lives of data & analytics teams, a day without hearing about any data-related errors is a blessing. Your team is on top of things, deliveries are on schedule (you think), and no major complaints are making their way to your desk. It’s tempting to adopt the “What, me worry?” attitude under these seemingly calm conditions. But here’s the catch: just because you’re not hearing about errors doesn’t mean they don’t exist. This silence could be a ticking time bomb, hiding underlying issues that have yet to surface. Here are seven compelling reasons why you should care and be proactive, even when all seems well.

The Illusion of Perfection: Hidden Problems

Firstly, not hearing about errors doesn’t necessarily equate to their absence. Problems are often hidden, either unintentionally due to a lack of observability or deliberately as a cover-up. The absence of noise around data errors could indicate a culture where mistakes are silently corrected or ignored, leading to compounded issues down the line.

The Patchwork Quilt: Fixes by Another Team

Another team may be picking up the slack, fixing and patching errors before they become visible to higher management or external stakeholders. This skews workload and resource allocation, and it prevents anyone from getting a holistic view of your data systems’ health and efficiency.

The Unknown Unknowns

The most daunting are the errors that remain undetected – the unknown unknowns. You’re not aware of these issues simply because you haven’t found them yet, not because they don’t exist. This blind spot in your operational oversight can lead to critical failures at the most inopportune moments.

The False Calm Before the Storm

A lack of reported problems doesn’t guarantee smooth sailing ahead. It could be the calm before the storm, with underlying issues accumulating until they cause significant operational disruptions. Proactively seeking out potential data errors, rather than reacting to them, can save your team from future blow-ups. The worst thing I have ever said to a business sponsor is: ‘Yes, there was an error. Yes, it was our fault, and the data was wrong for the past year.’

The Business Imperative: Need for Accuracy

Regardless of current error reports, your business depends on data accuracy for making informed decisions. Even minor errors can have substantial consequences in industries where precision is non-negotiable. Assuming everything is accurate without rigorous verification is a gamble that most businesses cannot afford.

Denial: “We Don’t Want to Know”

The image of an ostrich burying its head in the sand aptly describes some data teams’ approach to handling data errors. Although the metaphor rests on a myth about how real ostriches behave, it effectively captures the tendency of individuals or groups to ignore problematic situations, hoping they will resolve themselves or go unnoticed. In data teams, this behavior shows up as denial or avoidance: errors go unacknowledged and unaddressed, and when issues inevitably surface, the blame is shifted onto other teams.

The Stakeholder Confidence Crisis

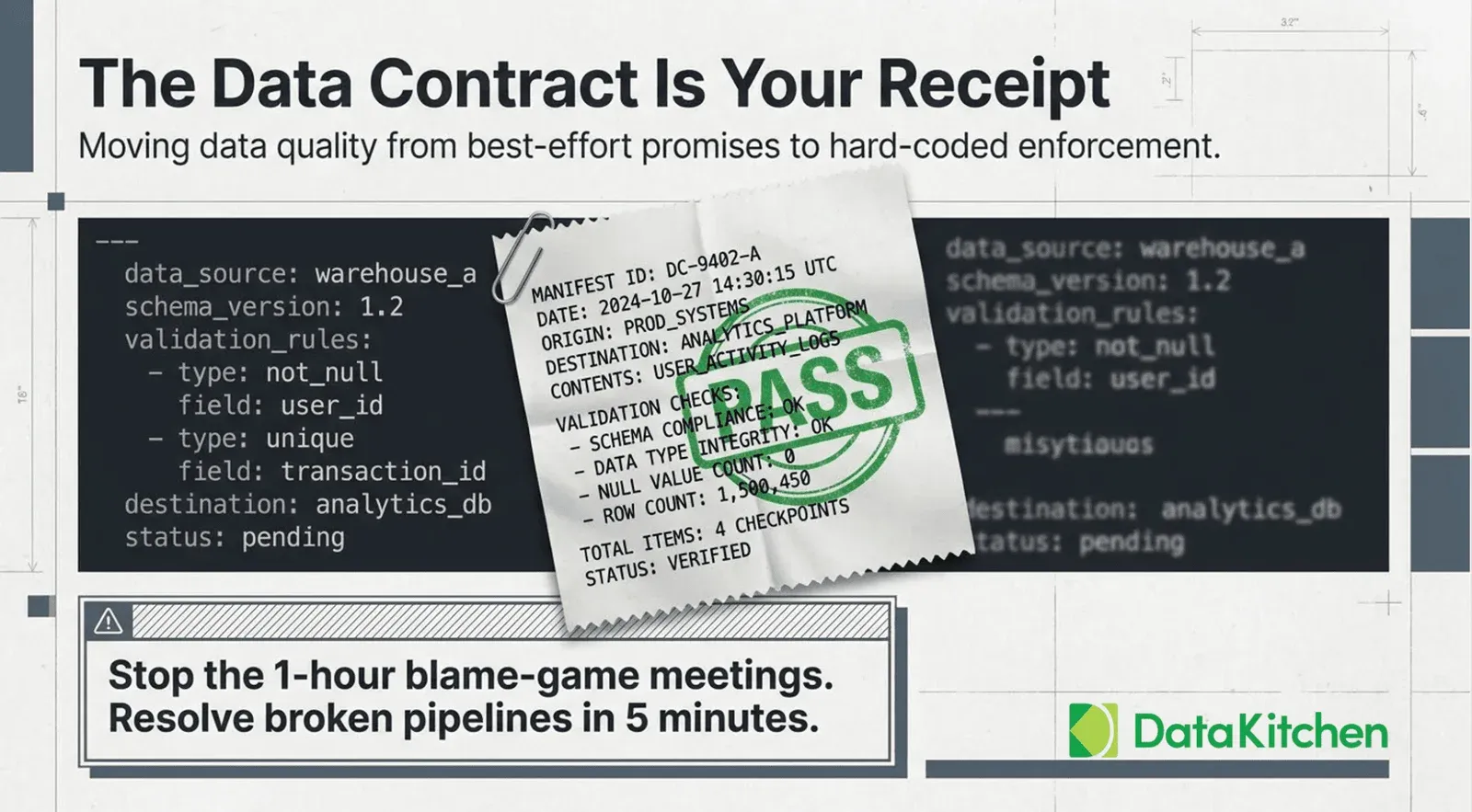

Relying on hope as a strategy for data accuracy and integrity is fraught with risk. Stakeholders, from internal teams to external clients, need confidence in the data they use and depend on. That confidence can only be built through transparency, proactive error detection, and a robust data quality and observability framework. Do you want to drive to work every day waiting for the next big error to drop?

Conclusion: Action Over Complacency

Adopting a proactive stance towards data management and error detection is critical to heading off problems before they escalate. Implementing rigorous DataOps Observability practices, including automated testing, observability tools, and a culture of continuous improvement, can transform how your team approaches data reliability and accuracy. Data teams need to foster a culture of transparency and accountability where errors are not feared but seen as opportunities for improvement.
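As a minimal sketch of what an automated data test with a measured error rate might look like, the Python below runs one row-level check and compares its failure rate against an SLA threshold. Everything here is hypothetical and for illustration only: the `CheckResult`, `run_check`, and `breaches_sla` names, the sample `orders` rows, and the 1% `MAX_ERROR_RATE` are assumptions, not the API of any particular DataOps or observability tool.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class CheckResult:
    """Outcome of one hypothetical row-level data-quality check."""
    name: str
    failed_rows: int
    total_rows: int

    @property
    def error_rate(self) -> float:
        # Fraction of rows that failed; an empty input counts as 0% errors.
        return self.failed_rows / self.total_rows if self.total_rows else 0.0


def run_check(name: str, rows: Iterable[dict], predicate: Callable[[dict], bool]) -> CheckResult:
    """Count the rows for which `predicate` returns False (hypothetical helper)."""
    materialized = list(rows)
    failed = sum(1 for row in materialized if not predicate(row))
    return CheckResult(name=name, failed_rows=failed, total_rows=len(materialized))


def breaches_sla(result: CheckResult, max_error_rate: float) -> bool:
    """True when the observed error rate exceeds the agreed SLA threshold."""
    return result.error_rate > max_error_rate


# Hypothetical sample data and SLA threshold (at most 1% of rows may fail).
MAX_ERROR_RATE = 0.01
orders = [
    {"order_id": 1, "amount": 10.0},
    {"order_id": 2, "amount": None},   # missing value
    {"order_id": 3, "amount": -5.0},   # impossible negative amount
    {"order_id": 4, "amount": 20.0},
]

result = run_check(
    "amount_present_and_non_negative",
    orders,
    lambda row: row.get("amount") is not None and row["amount"] >= 0,
)
print(
    f"{result.name}: {result.failed_rows}/{result.total_rows} rows failed "
    f"({result.error_rate:.1%}); SLA breached: {breaches_sla(result, MAX_ERROR_RATE)}"
)
```

The point of recording a number rather than a pass/fail flag is that error rates can be tracked over time and reported against an agreed threshold. In a real pipeline, checks like this would typically run on every execution and raise an alert on a breach, so the team finds the problem before a stakeholder does.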

Complacency is the enemy of progress. Not hearing about errors is not a sign to rest easy but a call to action for deeper investigation and proactive measures. If you are not measuring data quality and tracking error rates against SLAs, you will be in trouble; it’s just a matter of time. By embracing a culture of transparency, continuous monitoring, and rigorous testing, data teams can ensure their silence is truly golden, not just the calm before an inevitable storm. Let’s shift from hoping things are correct to knowing they are, building a foundation of confidence and reliability in our data-driven decisions.