Data migration projects, such as moving from on-premises infrastructure to the cloud, are critical and complex: they involve transferring data across different systems while preserving data integrity and consistency. This blog post explores the fifth use case for Data Observability and Data Quality Validation, Data Migration, focusing on how DataKitchen’s Open-Source Data Observability software helps ensure these migrations are successful and error-free.

NOTE

The Five Use Cases in Data Observability

Data Evaluation: This involves evaluating and cleansing new datasets before they are added to production. This process is critical as it ensures data quality from the outset.

Data Ingestion: Continuous monitoring of data ingestion ensures that updates to existing data sources are consistent and accurate. Examples include regular loading of CRM data and anomaly detection.

Production: During the production cycle, oversee multi-tool and multi-data set processes, such as dashboard production and warehouse building, ensuring that all components function correctly and the correct data is delivered to your customers.

Development: Observability in development includes conducting regression tests and impact assessments when new code, tools, or configurations are introduced, helping maintain system integrity as new code or data sets are introduced into production.

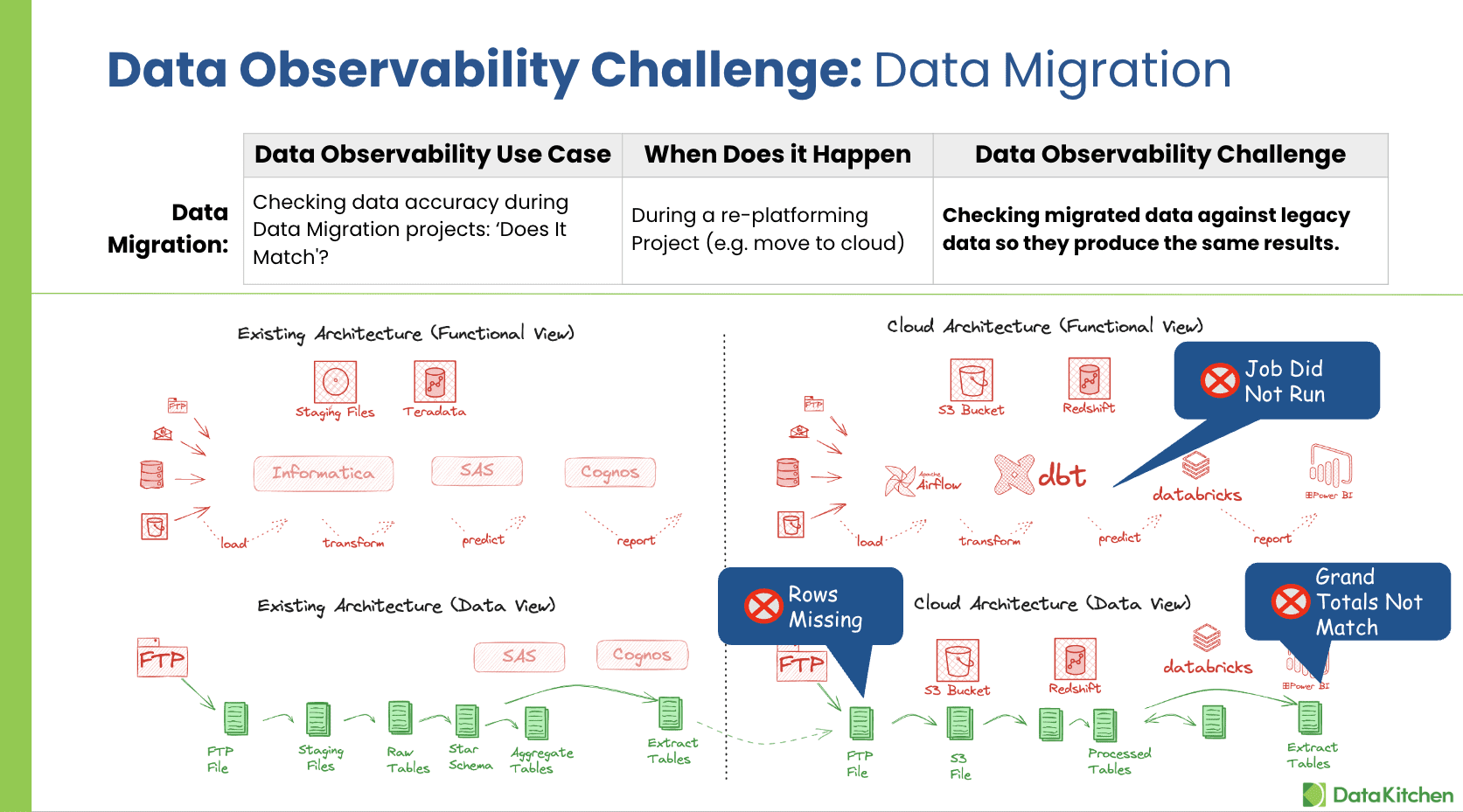

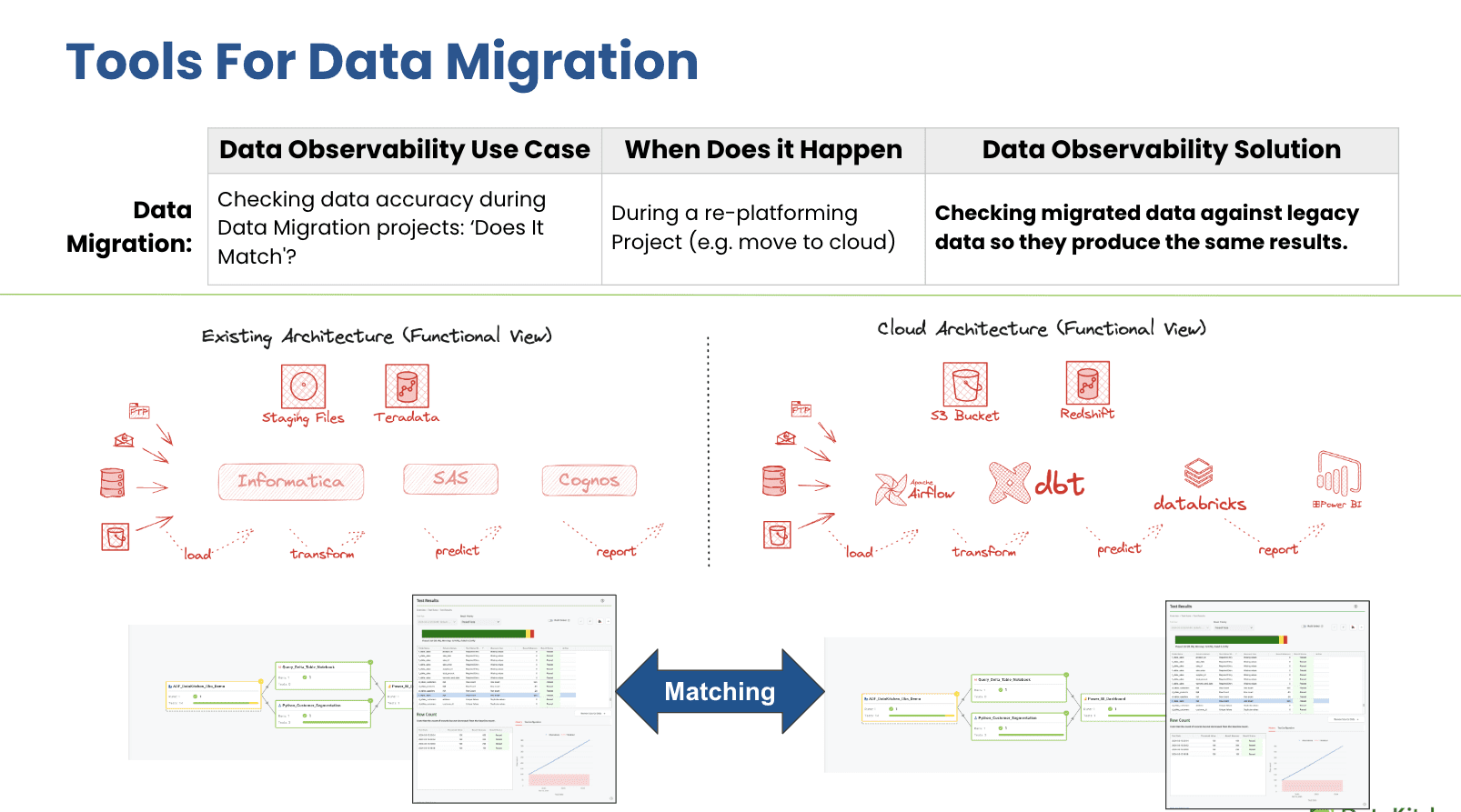

Data Migration: This use case focuses on verifying data accuracy during migration projects, such as cloud transitions, to ensure that migrated data matches the legacy data in both output and functionality.

The Challenge of Data Migration

Data migration is more than just moving data; it’s about ensuring that the migrated data functions identically in a new environment without any loss or corruption. The key challenge in data migration is verifying that the data remains consistent before and after the move. This involves ensuring that:

- Data is not corrupted during the transfer.

- All data rows are accounted for between systems.

- Business logic and reporting dashboard results align between the old and new systems.
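The first two checks in this list can be sketched in a few lines of Python. This is a minimal illustration, not DataKitchen's implementation: it uses in-memory SQLite databases as stand-ins for the legacy and target systems, and the `customers` table, its columns, and the `row_fingerprints` and `reconcile` helpers are all hypothetical names chosen for the example. It assumes both systems return values with the same types and formatting; real migrations usually need normalization (dates, decimals, encodings) before hashing.

```python
import hashlib
import sqlite3


def row_fingerprints(conn, table, key_column, columns):
    """Map each key value to a SHA-256 hash of the selected columns for that row."""
    # Identifiers are interpolated directly, so they must come from trusted config.
    query = f"SELECT {key_column}, {', '.join(columns)} FROM {table}"
    fingerprints = {}
    for key, *values in conn.execute(query):
        # repr() keeps None and the empty string distinct in the hashed payload.
        payload = "|".join(repr(v) for v in values)
        fingerprints[key] = hashlib.sha256(payload.encode("utf-8")).hexdigest()
    return fingerprints


def reconcile(legacy_conn, target_conn, table, key_column, columns):
    """Compare row counts, key coverage, and row contents between two systems."""
    legacy = row_fingerprints(legacy_conn, table, key_column, columns)
    target = row_fingerprints(target_conn, table, key_column, columns)
    shared = legacy.keys() & target.keys()
    return {
        "legacy_rows": len(legacy),
        "target_rows": len(target),
        "missing_in_target": sorted(legacy.keys() - target.keys()),
        "unexpected_in_target": sorted(target.keys() - legacy.keys()),
        "changed": sorted(k for k in shared if legacy[k] != target[k]),
    }


def make_db(rows):
    """Build a throwaway in-memory database standing in for a real system."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, balance REAL)"
    )
    conn.executemany("INSERT INTO customers VALUES (?, ?, ?)", rows)
    return conn


legacy_db = make_db([(1, "Ada", 10.0), (2, "Grace", 20.5), (3, "Edsger", 7.25)])
target_db = make_db([(1, "Ada", 10.0), (2, "Grace", 99.0)])

report = reconcile(legacy_db, target_db, "customers", "id", ["name", "balance"])
print(report)
```

In this sample data, row 3 never reached the target and row 2 arrived with a different balance, so the report flags one missing key and one changed row. Hashing every row in application code is only practical for modest table sizes; for large tables, a similar comparison is usually pushed down into each database as per-partition counts and checksums.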

Critical Questions for Successful Data Migration

A thorough data migration strategy must address several critical questions to confirm the success of the migration:

- Has My Data Been Corrupted Between The Old And New Systems?

- Do I Have All The Rows Of Data Between The Old And New Systems?

- Do The Reports Match Between Old And New?

- Is There Some Business Logic Missing Between The Old And New Systems?

- Did I Capture All The Data Fields Derived From Old And New Systems?

- Is The New System Being Updated At The Same Rate As The Old?

- Can I Run The New System In Parallel For A While? Can I Cross-Check the Results Easily?

- What Data Do I Have To Prove That The Migration System Is Producing The Right Results?

- Can I Prove Matching Quickly And Easily?
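The question of whether reports match between old and new can be made concrete with a small comparison routine. The sketch below is illustrative only: it assumes each report has already been reduced to a dictionary of metric names and numeric values, and the `compare_reports` function, the sample metrics, and the tolerance are hypothetical choices for this example. A small relative tolerance is used because floating-point aggregates computed by two different engines rarely agree to the last digit.

```python
import math


def compare_reports(legacy_report, target_report, rel_tol=1e-9):
    """Return metrics that are missing from one report or differ beyond rel_tol."""
    mismatches = {}
    for metric in sorted(legacy_report.keys() | target_report.keys()):
        old = legacy_report.get(metric)
        new = target_report.get(metric)
        # A metric present on only one side counts as a mismatch.
        if old is None or new is None:
            mismatches[metric] = (old, new)
        elif not math.isclose(old, new, rel_tol=rel_tol):
            mismatches[metric] = (old, new)
    return mismatches


# Hypothetical revenue-by-region totals pulled from each system's dashboard query.
legacy_totals = {"east": 1200.50, "west": 980.00, "north": 430.25}
target_totals = {"east": 1200.50, "west": 975.00}

mismatches = compare_reports(legacy_totals, target_totals)
for metric, (old, new) in mismatches.items():
    print(f"{metric}: legacy={old} target={new}")
```

Running a check like this on every refresh while both systems operate in parallel builds up the evidence trail that several of the questions above ask for.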

How DataKitchen Addresses Data Migration Challenges

DataKitchen’s Data Observability solutions provide powerful tools to tackle the complexities of data migration:

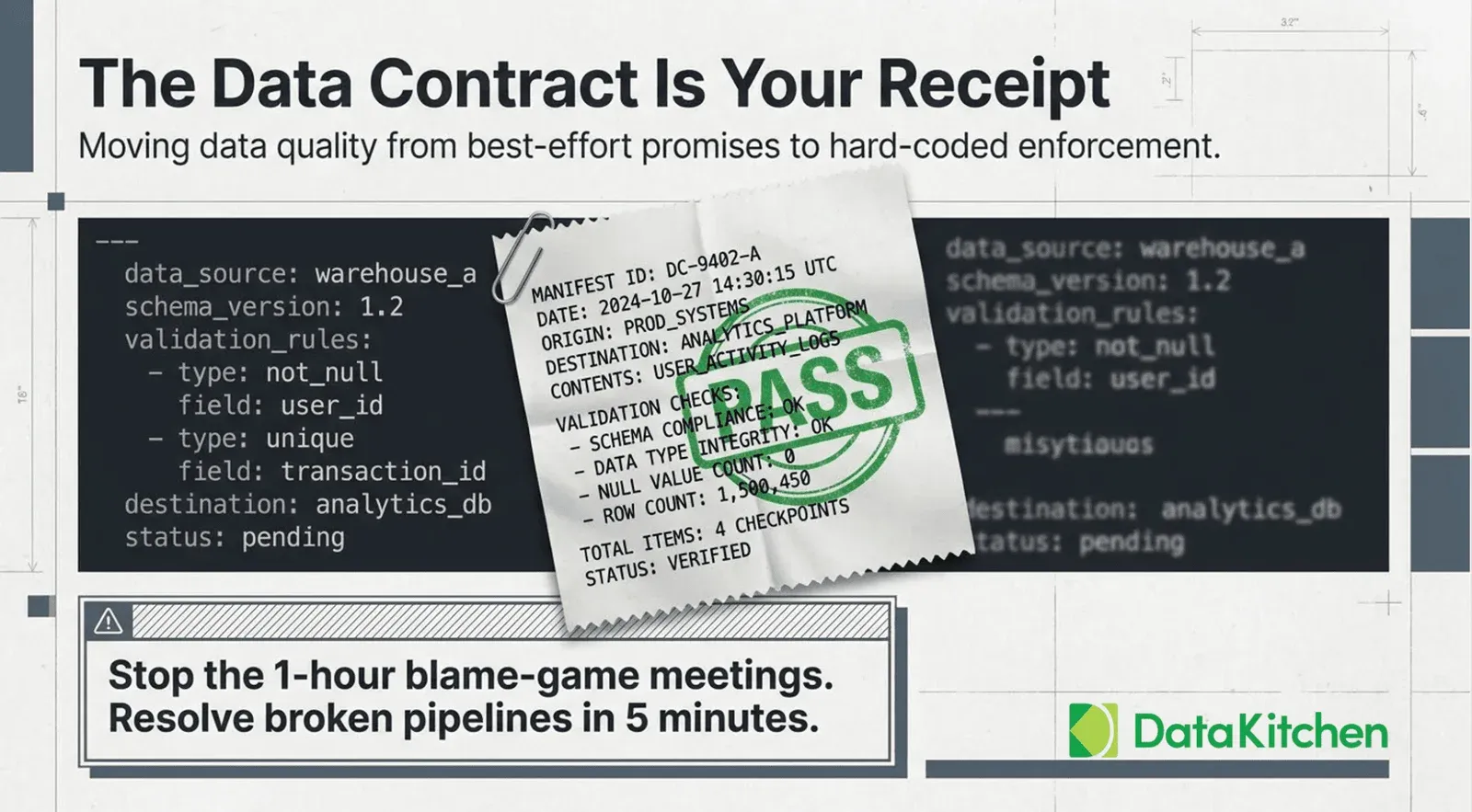

- Migration Data Tests: DataOps TestGen automatically generates quality validation tests comparing source and target data systems. By focusing on detailed aspects such as data completeness, accuracy, and consistency, TestGen helps identify discrepancies early in the migration process.

- Parallel System Monitoring: DataOps Observability allows for the simultaneous monitoring of legacy and new systems, making it easier to run parallel tests and validate the migration process continuously.

- Comprehensive Coverage: The end-to-end Data Journey mapping provides a complete overview of data interactions and dependencies. It is crucial for tracking data flow and transformations during migration and for system-to-system balancing.

- Real-time Monitoring and Alerts: Continuous monitoring capabilities ensure any issues are immediately identified and addressed, preventing the propagation of errors.
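As a rough illustration of what a parallel-monitoring check might look at, the sketch below compares the most recent load time of each system and flags the new system when it trails the legacy one by more than an allowed lag. It does not use or represent the DataOps Observability API; the function names, the timestamps, and the 15-minute threshold are assumptions made for this example.

```python
from datetime import datetime, timedelta


def freshness_lag(legacy_last_update, target_last_update):
    """Return how far the new system trails the legacy one (zero if it is ahead)."""
    return max(legacy_last_update - target_last_update, timedelta(0))


def is_keeping_pace(legacy_last_update, target_last_update,
                    max_lag=timedelta(minutes=15)):
    """True when the new system's latest load is within max_lag of the legacy one."""
    return freshness_lag(legacy_last_update, target_last_update) <= max_lag


# Hypothetical "latest load" timestamps, e.g. MAX(updated_at) from each system.
legacy_ts = datetime(2024, 5, 1, 12, 0)
target_ts = datetime(2024, 5, 1, 11, 20)

lag = freshness_lag(legacy_ts, target_ts)
keeping_pace = is_keeping_pace(legacy_ts, target_ts)
print(f"lag={lag}, keeping pace={keeping_pace}")
```

A check of this kind would typically run on a schedule and feed an alert when the lag threshold is exceeded.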

Benefits of Effective Data Observability During Data Migration

Implementing DataKitchen’s Open Source Data Observability tools during data migration projects offers significant benefits:

- Reduced Rework: Organizations can avoid costly rework by catching errors early and ensuring the migration process stays on schedule.

- Improved Accuracy and Productivity: Automated tests and validations streamline the migration process, boosting team productivity and ensuring schedule adherence.

- Enhanced Confidence: With comprehensive testing and real-time monitoring, teams can have greater confidence in the accuracy of the migrated data, leading to smoother transitions and quicker adoption of new systems.

Conclusion

Data migration projects can be thankless, particularly those that involve moving to a cloud-based environment. With its robust testing and monitoring capabilities, DataKitchen provides the tools to help make data migrations successful, accurate, and efficient. By leveraging these observability tools, companies can keep their data robust and reliable, no matter where it resides.

Next Steps: Download Open Source Data Observability, and Then Take A Free Data Observability and Data Quality Validation Certification Course