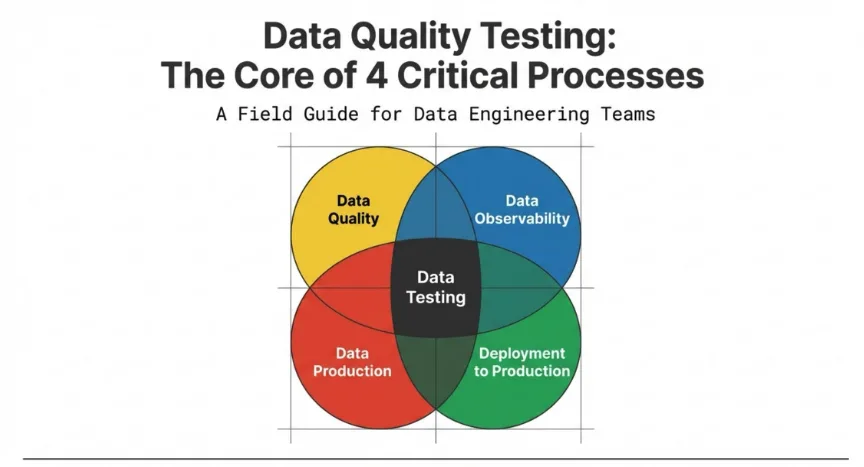

Every data and analytics team juggles multiple responsibilities. You are expected to ensure your data is accurate and fit for purpose. You need to prevent problematic data from entering your data environment. You must stop your data pipelines from delivering embarrassing errors to your stakeholders. And you have to deploy new code and data sets into production without breaking what already works. These four activities might seem distinct at first glance, but they share a fundamental characteristic: they all depend on robust data quality testing to succeed.

The challenge is that most organizations treat these activities as separate concerns, with different tools, different teams, and different approaches. This siloed thinking obscures a crucial insight. Data quality testing is not just one activity among many. It is the foundational capability that enables all four of these processes. When you understand this connection, you can build a more coherent and effective approach to managing your data estate.

The Four Processes That Define Data Team Operations

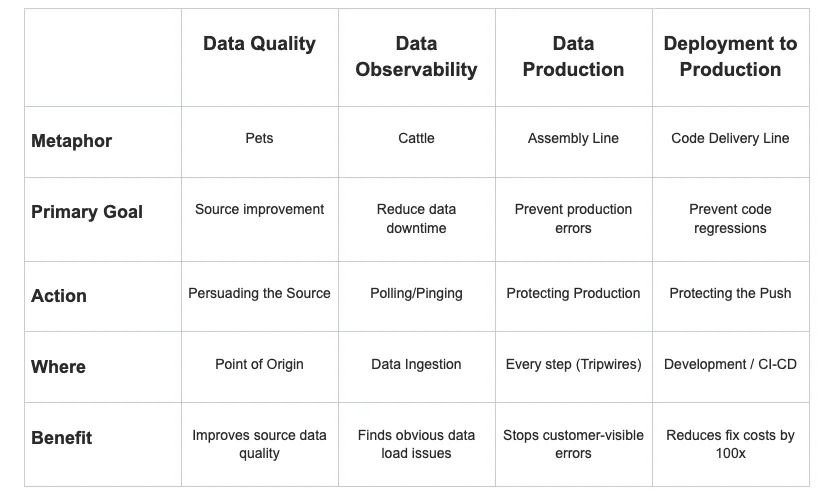

Before diving into how testing connects these activities, let us establish what each process entails and why it matters to your organization.

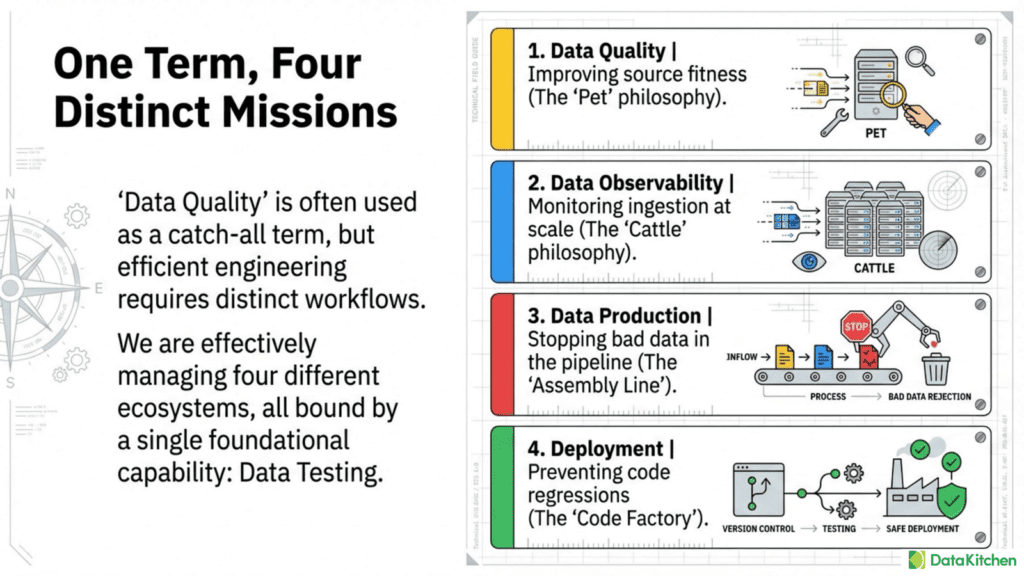

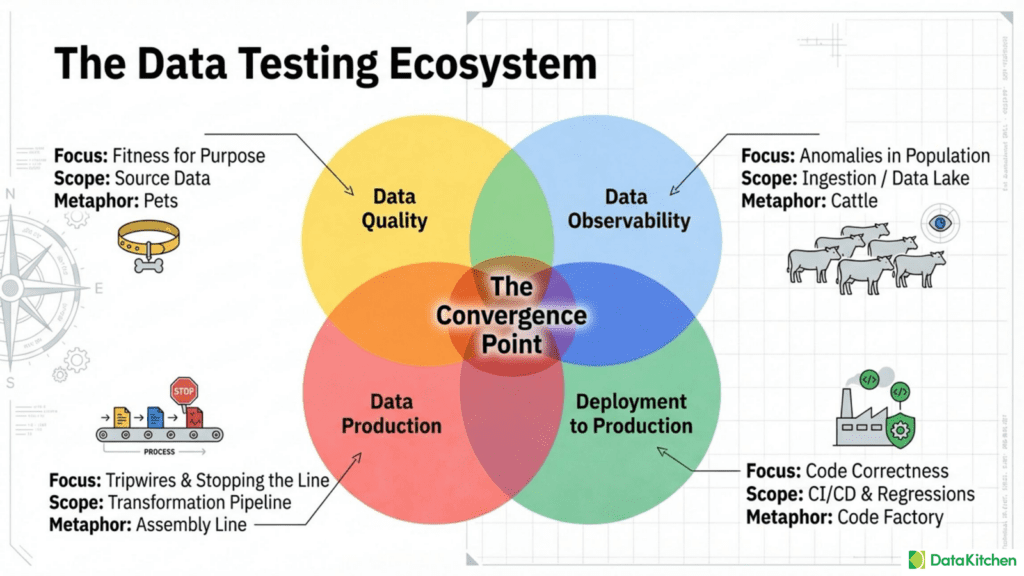

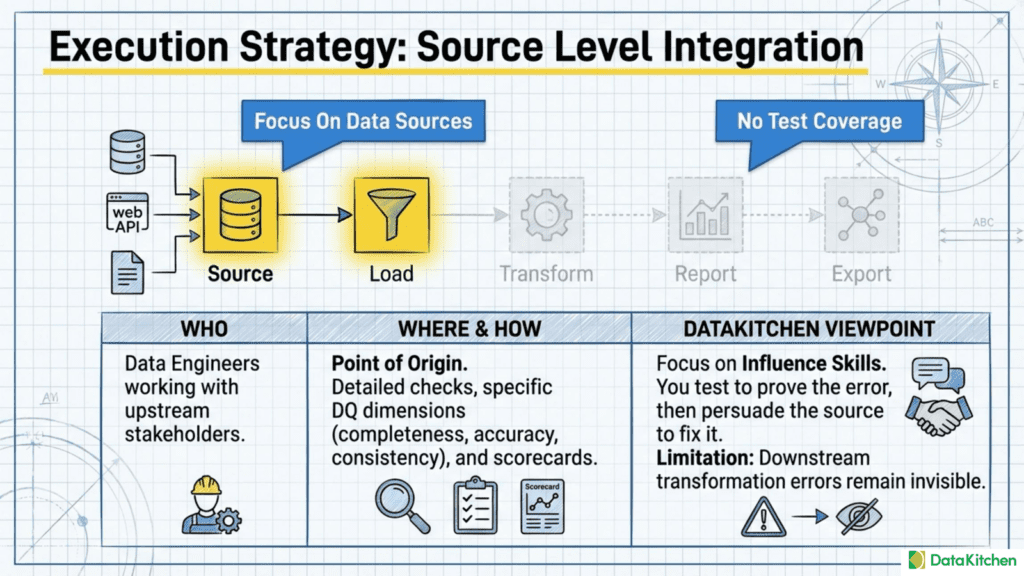

The first process is Data Quality, which focuses on improving the quality of your data sources and ensuring they are fit for purpose. This is the most traditional understanding of data quality work. Teams engaged in this process spend their time examining specific tables and datasets, running detailed checks against data quality dimensions, building dashboards to track quality scores, and working to influence upstream data sources to fix problems at their origin.

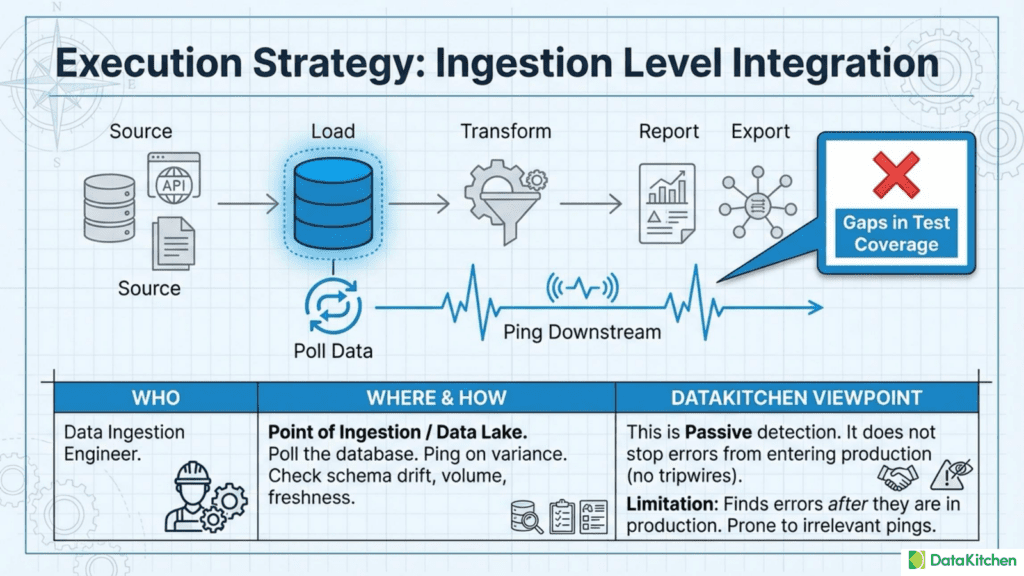

The second process is Data Observability, which has emerged as a critical discipline for teams managing large numbers of tables and datasets. The core problem here is avoiding blame for obvious data problems that slip through unnoticed. When you have hundreds or thousands of tables, you cannot manually inspect each one. Data observability solutions poll your databases looking for anomalies in freshness, volume, schema changes, and statistical patterns. The goal is to identify data ingestion errors and reduce data downtime.

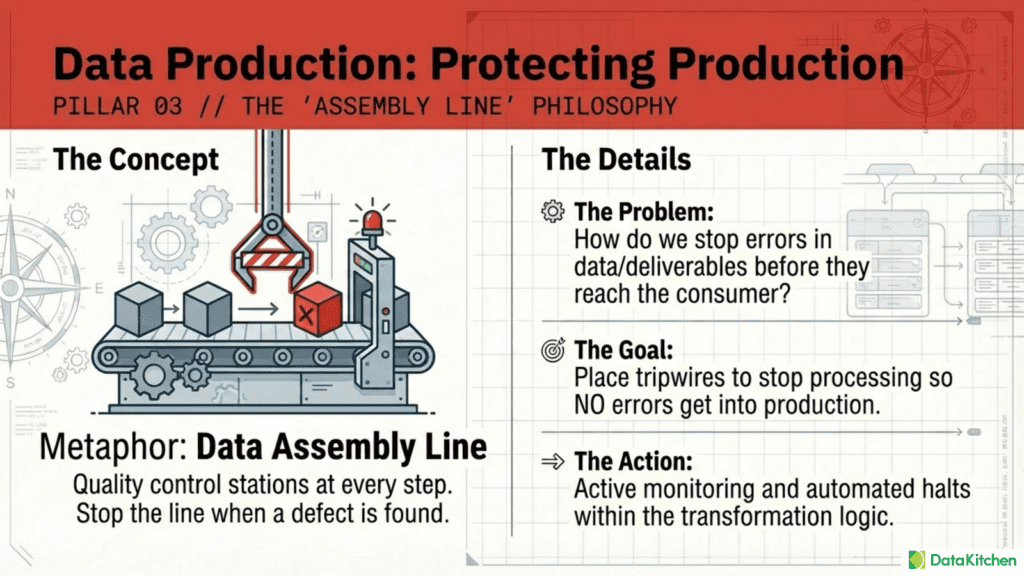

The third process is Data Production, which we might also call Data Journey Quality. This goes beyond simply observing data at rest to monitoring the entire journey that data takes through your production systems. The fundamental question here is how to stop errors in data and deliverables before they reach production. This requires placing tests and monitors at every step of the data production process, with tripwires that can halt processing when problems are detected.

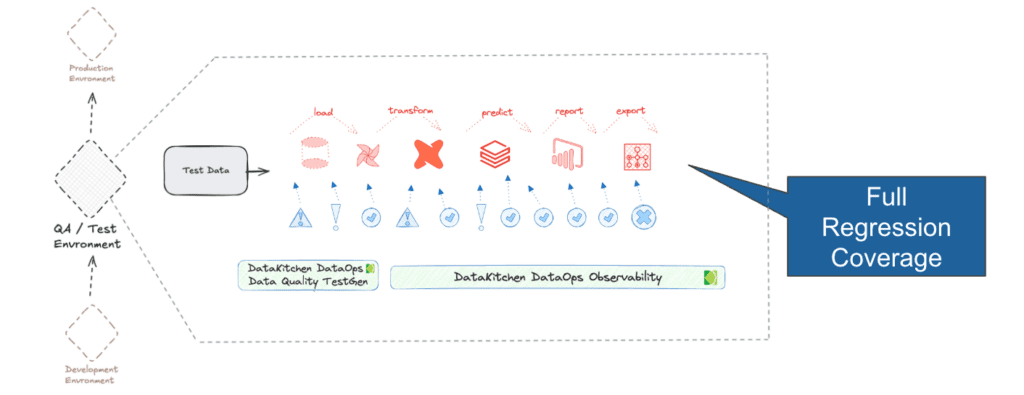

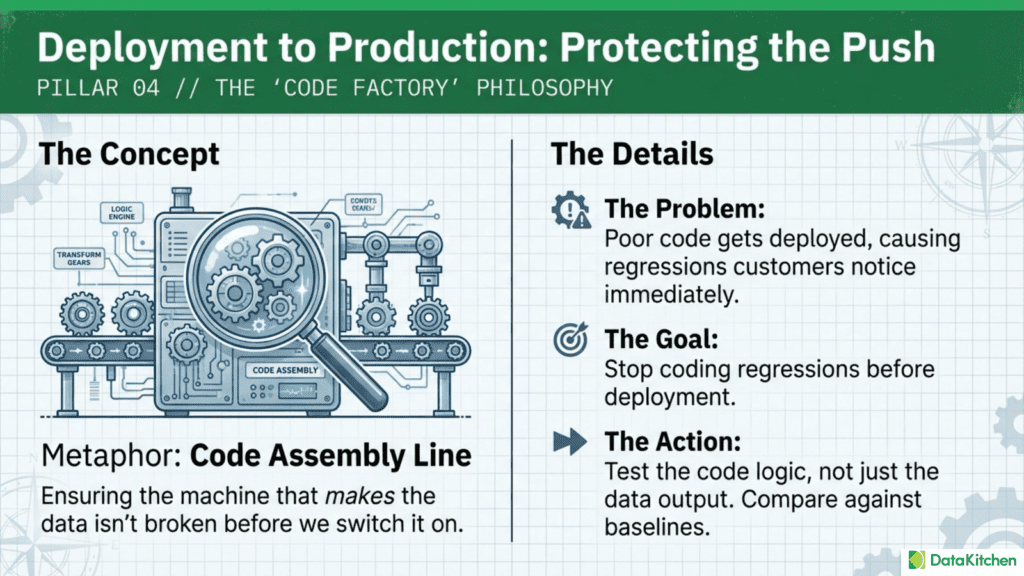

The fourth process is Deployment to Production, specifically the regression and impact testing of new code. Your team of data engineers, scientists, and analysts is constantly writing and modifying code. Before that code reaches production, you need to ensure it has been adequately tested. This means going beyond simple unit tests to include full regression testing and functional testing in development environments during CI/CD.

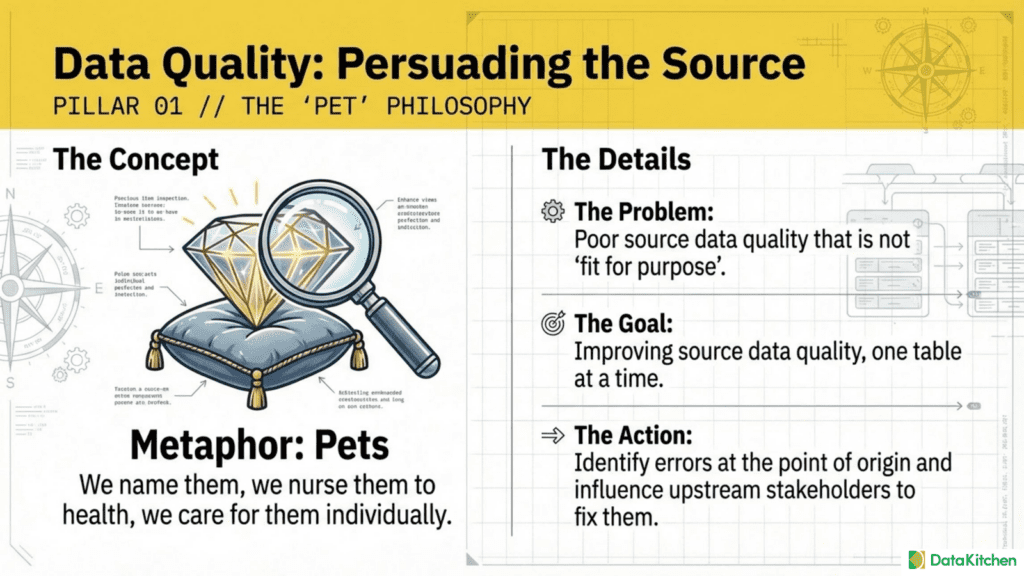

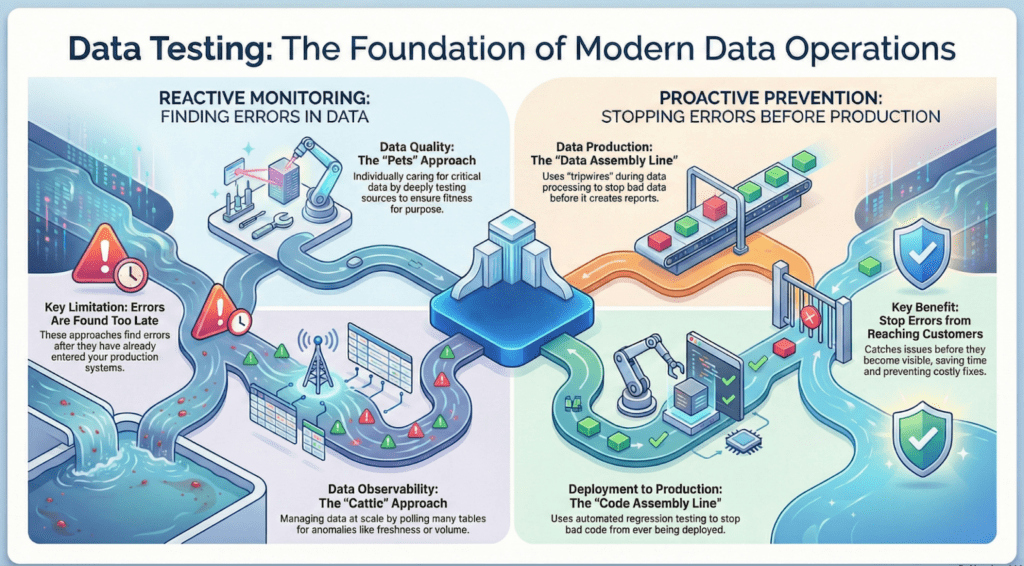

The Data Quality Process: Treating Your Critical Data Like Pets

The metaphor of “pets versus cattle” has become common in infrastructure discussions, but it applies equally well to data quality work. When you treat data like pets, you give individual datasets names, you care for them individually, and you invest significant effort in keeping each one healthy. This approach makes sense for your most critical data assets.

Data quality work in this mode involves persuading the source to improve its data quality. You are not just detecting problems; you are building the case for why upstream systems and processes need to change. This requires finding errors at the point of origin, as close to where data enters your systems as possible. The work involves creating detailed data quality dashboards that track specific tests across data quality dimensions like completeness, accuracy, consistency, validity, timeliness, and uniqueness.

The testing approach for traditional data quality work tends to be intensive and specific. You write lots of tests for each table, covering every aspect of the data that matters to your business. You score and track results over time. You use those results to influence your data sources, whether those are internal systems, external vendors, or manual data entry processes.
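
As a rough illustration of what scoring one table against a few data quality dimensions can look like, here is a minimal Python sketch. The function names, the sample `customers` rows, and the 0 to 120 age range are hypothetical and chosen only for illustration; a real test suite would carry many more rules per table.

```python
from typing import Any, Callable

Row = dict[str, Any]

def completeness(rows: list[Row], column: str) -> float:
    """Fraction of rows where the column is present and not None."""
    if not rows:
        return 1.0
    return sum(1 for r in rows if r.get(column) is not None) / len(rows)

def uniqueness(rows: list[Row], column: str) -> float:
    """Fraction of non-null values that are distinct."""
    values = [r[column] for r in rows if r.get(column) is not None]
    if not values:
        return 1.0
    return len(set(values)) / len(values)

def validity(rows: list[Row], column: str, is_valid: Callable[[Any], bool]) -> float:
    """Fraction of non-null values that satisfy a business rule."""
    values = [r[column] for r in rows if r.get(column) is not None]
    if not values:
        return 1.0
    return sum(1 for v in values if is_valid(v)) / len(values)

# Hypothetical sample data: one duplicate id, two missing emails, one bad age.
customers = [
    {"id": 1, "email": "a@example.com", "age": 34},
    {"id": 2, "email": None, "age": 29},
    {"id": 2, "email": "c@example.com", "age": -5},
    {"id": 4, "email": "d@example.com", "age": 51},
    {"id": 5, "email": None, "age": 40},
]

# Scores like these are what a data quality dashboard would track over time.
scorecard = {
    "email completeness": completeness(customers, "email"),
    "id uniqueness": uniqueness(customers, "id"),
    "age validity": validity(customers, "age", lambda a: 0 <= a <= 120),
}
for name, score in scorecard.items():
    print(f"{name}: {score:.0%}")
```

Tracking scores per dimension, rather than a single pass or fail, is what gives you evidence to take back to the owner of the source system.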

This focused approach has real benefits. It keeps things relatively simple at the data level and allows you to build deep expertise in specific datasets. However, it also has significant limitations. When you focus exclusively on source data quality, errors that occur in downstream tools and deliverables remain invisible to you. Your customers might receive incorrect analytic results even though your source data quality scores look excellent.

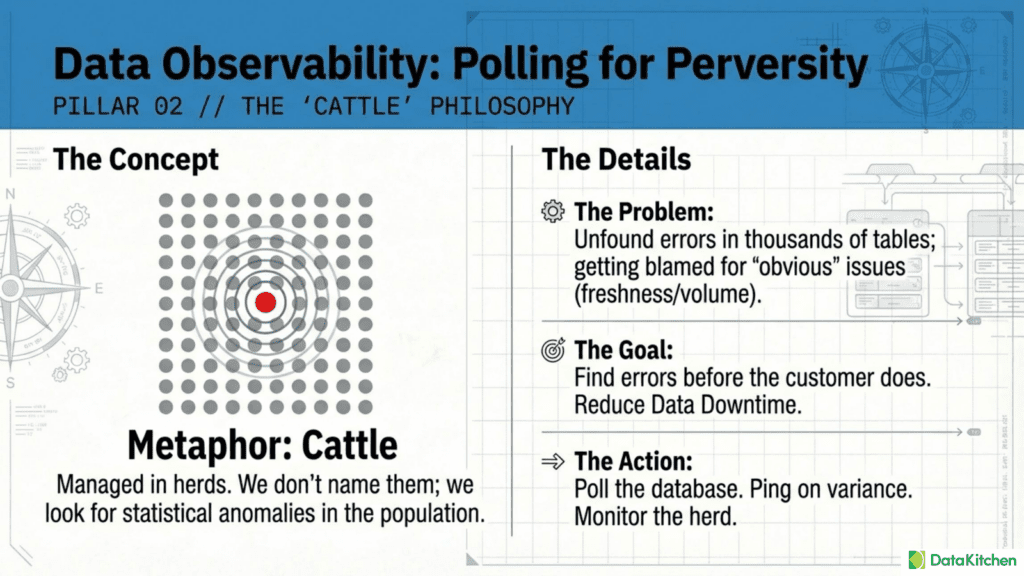

The Data Observability Process: Managing Data Scaled Like Cattle

When you have thousands of tables spread across multiple databases and data platforms, the pet approach simply does not scale. You cannot write hundreds of specific tests for every table. You cannot manually review quality dashboards for each dataset. You need a fundamentally different approach.

Data observability treats data more like cattle. Instead of naming and caring for individual animals, ranchers manage herds at scale using statistical sampling and pattern recognition. Similarly, data observability solutions monitor your databases and alert you to errors. They look for variance across large populations of tables, checking for things like unexpected changes in row counts, unusual freshness delays, schema modifications, and statistical drift that might indicate data problems.
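
To make the herd-level idea concrete, here is a small Python sketch of two generic checks applied uniformly across tables: a volume check based on a z-score and a freshness check. The z-score rule, the threshold of 3.0, and the table names and counts are assumptions for illustration; commercial and open source observability tools use their own, usually more sophisticated, detection methods.

```python
from datetime import datetime, timedelta
from statistics import mean, stdev

def volume_anomaly(history: list[int], latest: int, threshold: float = 3.0) -> bool:
    """Flag the latest row count if it sits more than `threshold`
    sample standard deviations away from the historical mean."""
    if len(history) < 2:
        return False  # not enough history to judge
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > threshold

def is_stale(last_loaded: datetime, now: datetime, max_age: timedelta) -> bool:
    """Flag a table whose most recent load is older than the allowed age."""
    return now - last_loaded > max_age

# Hypothetical row-count history for two tables.
tables = {
    "orders": {"history": [1000, 1020, 990, 1010, 1005], "latest": 1008},
    "payments": {"history": [500, 510, 495, 505, 498], "latest": 12},
}

# The same generic rule runs against every table; no per-table test is written.
alerts = [
    name for name, t in tables.items()
    if volume_anomaly(t["history"], t["latest"])
]
print("volume alerts:", alerts)
```

The point of the sketch is that nothing here is specific to `orders` or `payments`, which is why this style of check scales to thousands of tables and also why it is shallower than a hand-crafted test.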

The target audience for data observability is typically the data ingestion engineer responsible for reliably getting data into the platform. These engineers need to find errors in large databases before customers do. The primary goal is to reduce data downtime, which is the period when data is unavailable, inaccurate, or otherwise unusable.

This polling approach enables coverage across far more tables than would be possible with hand-crafted tests. It also provides visibility into data lineage, helping you understand how problems propagate through your systems. However, the observability approach has its own limitations. It finds data errors after they have already gotten into production systems. The automated anomaly detection can generate irrelevant pings that waste time and erode trust. And the anomaly testing, while broad, is often shallow compared to what you would achieve with specific hand-crafted tests for critical tables.

Perhaps most importantly, data observability alone does not prevent errors from reaching production. It detects problems after the fact rather than preventing them from reaching your stakeholders.

The Data Production Process: Protecting Production with Data Journey Quality

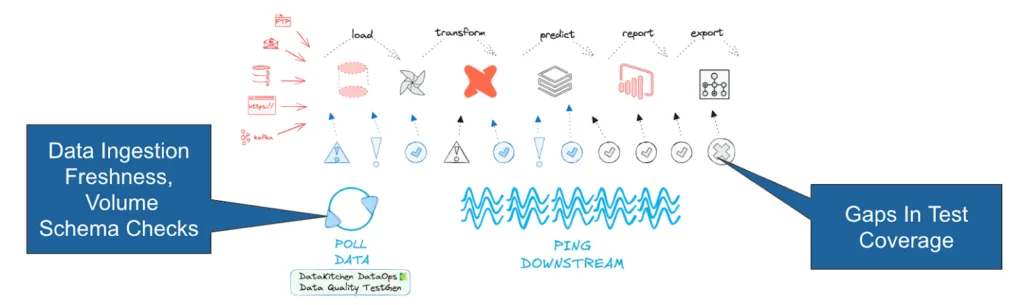

The third process addresses a fundamental gap in the first two approaches. Traditional data quality focuses on sources, and data observability polls data at rest. Neither adequately addresses what happens during the data production process itself. This is the data journey from raw inputs through transformation, enrichment, and aggregation to final deliverables.

Data Journey Quality operates on a different metaphor: the assembly line. In manufacturing, quality control does not just inspect raw materials at the loading dock or finished goods in the warehouse. Inspections happen at every step of the production process, with the ability to ‘stop the line’ when problems are detected. This prevents defective work from moving forward and wasting resources on products that will ultimately fail.

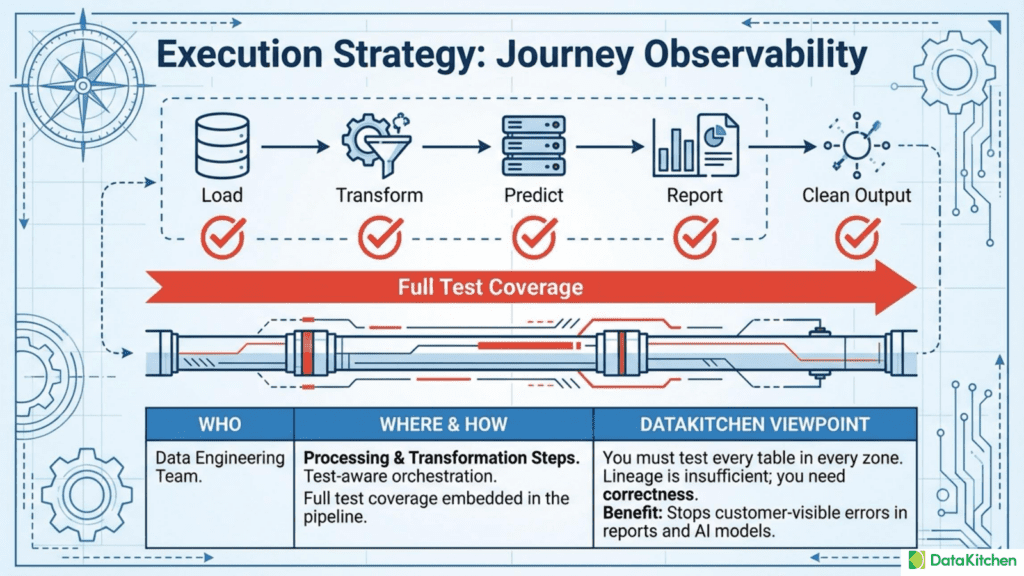

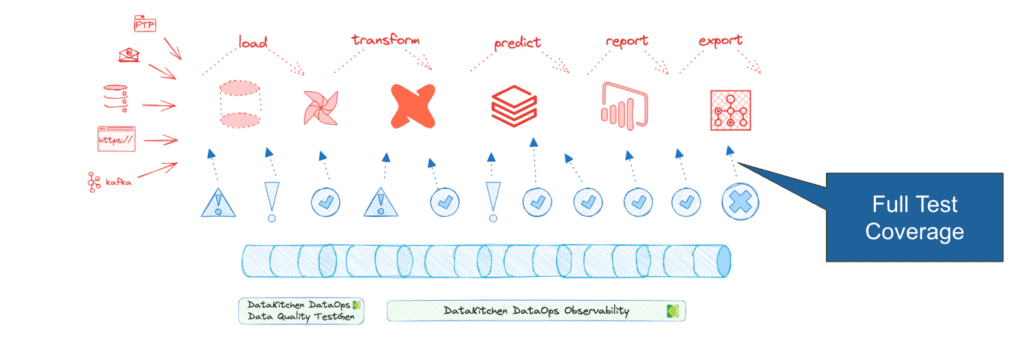

The core question for data production is straightforward but challenging: how do you stop errors in data and deliverables before they reach production? This requires placing tests and monitors within the data production processing steps themselves, not as an afterthought. You need either a test-aware orchestrator that can respond to test failures or a dedicated data journey observability tool that integrates with your existing infrastructure.

The goal is full test coverage across your production process, with the ability to find all errors during data production and place tripwires that stop processing. When an error is detected, the system should halt before the problem propagates further downstream or reaches customer-facing systems.
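
The "stop the line" behavior can be sketched in a few lines of Python. This is a toy runner under stated assumptions, not the interface of any particular orchestrator: `run_pipeline`, `TripwireError`, the two stages, and the sample rows are all hypothetical names invented for this example.

```python
from typing import Any, Callable

class TripwireError(Exception):
    """Raised to halt the pipeline when a check fails."""

Step = Callable[[Any], Any]
Check = Callable[[Any], bool]
Stage = tuple[str, Step, list[tuple[str, Check]]]

def run_pipeline(data: Any, stages: list[Stage]) -> Any:
    """Run each step, then its checks; stop at the first failed check."""
    for stage_name, step, checks in stages:
        data = step(data)
        for check_name, check in checks:
            if not check(data):
                raise TripwireError(
                    f"{stage_name}: check '{check_name}' failed; halting"
                )
    return data

def drop_null_amounts(rows):
    return [r for r in rows if r["amount"] is not None]

def total_by_region(rows):
    totals = {}
    for r in rows:
        totals[r["region"]] = totals.get(r["region"], 0) + r["amount"]
    return totals

stages = [
    ("clean", drop_null_amounts,
     [("not empty", lambda rows: len(rows) > 0)]),
    ("aggregate", total_by_region,
     [("no negative totals", lambda t: all(v >= 0 for v in t.values()))]),
]

good = [{"region": "EU", "amount": 10}, {"region": "US", "amount": 5}]
bad = [{"region": "EU", "amount": -50}, {"region": "US", "amount": 5}]

print(run_pipeline(good, stages))
try:
    run_pipeline(bad, stages)
except TripwireError as err:
    print(err)  # the bad batch never reaches the publishing step
```

Because the checks run between steps rather than after the final load, a failure stops the batch before any later stage, or any customer, sees it.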

This approach requires testing every table in every zone of your data platform. It means monitoring every tool involved in your data production process and integrating them into a single unified view. Data lineage alone is insufficient because it tells you how data flows but not whether that data is correct at each step.

The testing requirements for data production are substantial. You need both data integration and tool integration, which makes implementation more complex than either traditional data quality or data observability alone. But the benefit is significant: you can actually stop customer-visible errors in data, reports, and models before they cause damage.

The Deployment to Production Process: Regression and Impact Testing

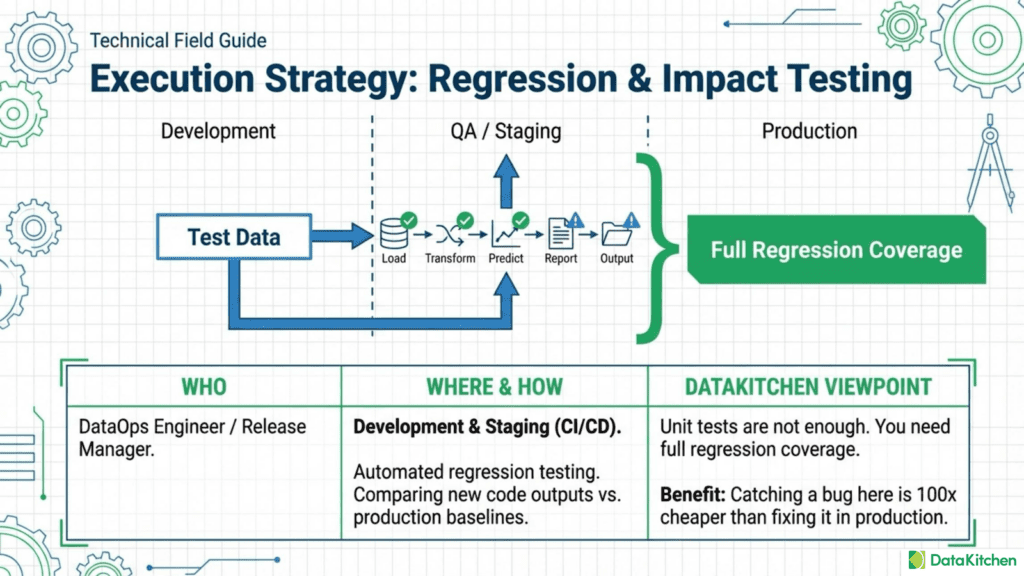

The fourth process shifts focus from data to code. Your data engineering team is constantly writing new transformations, building new pipelines, updating existing logic, and deploying changes to production. Each of these changes carries risk. A modification that works perfectly in development might break something in production. A new feature might introduce a regression in existing functionality.

The metaphor here is another assembly line, but this one produces code rather than data. Just as the data production assembly line needs quality gates, the code production process needs its own checkpoints to ensure that poor code does not reach production, where customers will notice the problems.

The traditional approach to code testing relies heavily on unit tests and manual business reviews. Unit tests verify that individual functions work correctly in isolation, and business reviews ensure that outputs look reasonable to human experts. These approaches are necessary but not sufficient. Unit tests cannot catch problems that only emerge when components interact in production environments. Manual reviews cannot scale to cover every possible scenario and are prone to human error and fatigue.

What data teams need is a fully automated system with adequate test coverage. This includes regression testing that compares the outputs of new code against baseline results from the existing production system. It also includes impact testing that identifies which downstream assets might be affected by a change. And it means integrating these tests into your CI/CD pipeline so they run automatically before any code can be deployed. Great test data is key. Some teams summarize the approach as ‘today’s code, yesterday’s data’ for deployment testing.
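
A regression comparison of this kind might look like the following Python sketch, which runs the current and candidate versions of a transformation over the same saved input and reports the differences. The two `transform_*` functions, the order rows, and the discount logic are hypothetical; a real setup would compare full tables or reports, often with tolerance rules.

```python
def transform_v1(rows):
    """Current production logic (hypothetical): order total = qty * price."""
    return {r["order_id"]: round(r["qty"] * r["price"], 2) for r in rows}

def transform_v2(rows):
    """Candidate logic (hypothetical): also applies an optional discount."""
    return {
        r["order_id"]: round(r["qty"] * r["price"] * (1 - r.get("discount", 0)), 2)
        for r in rows
    }

def regression_diff(baseline: dict, candidate: dict) -> dict:
    """List keys that disappeared, appeared, or changed value."""
    shared = baseline.keys() & candidate.keys()
    return {
        "missing": sorted(baseline.keys() - candidate.keys()),
        "added": sorted(candidate.keys() - baseline.keys()),
        "changed": sorted(k for k in shared if baseline[k] != candidate[k]),
    }

# Yesterday's data, replayed through both versions of the code.
yesterdays_data = [
    {"order_id": 1, "qty": 2, "price": 9.99},
    {"order_id": 2, "qty": 1, "price": 20.0, "discount": 0.1},
]

diff = regression_diff(transform_v1(yesterdays_data), transform_v2(yesterdays_data))
print(diff)

# A CI/CD gate could block the deploy unless every difference is expected.
deploy_allowed = not any(diff.values())
print("deploy allowed:", deploy_allowed)
```

A reported change is not automatically a bug. Here the discount is presumably intended, so the value of the diff is that someone has to confirm the change deliberately before the code ships.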

The testing limitations here involve practical concerns: you need appropriate test data that reflects production scenarios, tools to automate comparison, and CI/CD integration that enforces testing requirements without creating bottlenecks for your development team.
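
Impact testing, as described in this section, starts with knowing which downstream assets a change can touch. Assuming you can export lineage as a simple mapping from each asset to its direct consumers, a breadth-first walk is one minimal way to list them. The `lineage` graph and asset names below are hypothetical.

```python
from collections import deque

def downstream_assets(lineage: dict[str, list[str]], changed: str) -> list[str]:
    """Return every asset reachable from `changed`, nearest first."""
    seen = {changed}
    order = []
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order

# Hypothetical lineage: each key feeds the assets in its list.
lineage = {
    "raw_orders": ["clean_orders"],
    "clean_orders": ["daily_revenue", "customer_ltv"],
    "daily_revenue": ["exec_dashboard"],
    "customer_ltv": ["churn_model", "exec_dashboard"],
}

impacted = downstream_assets(lineage, "clean_orders")
print(impacted)
```

The resulting list tells you which tables, reports, and models need their regression tests rerun for a given change, rather than rerunning everything or guessing.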

The benefit of getting this right is substantial. Every regression error that slips into production costs far more to fix than one caught during development. The commonly cited figure is that production bugs cost one hundred times more than bugs caught earlier in the development process. This multiplier reflects not only the technical cost of fixing the bug but also the customer impact, the loss of trust, and the emergency response required by production incidents.

The Common Thread: Testing at the Core

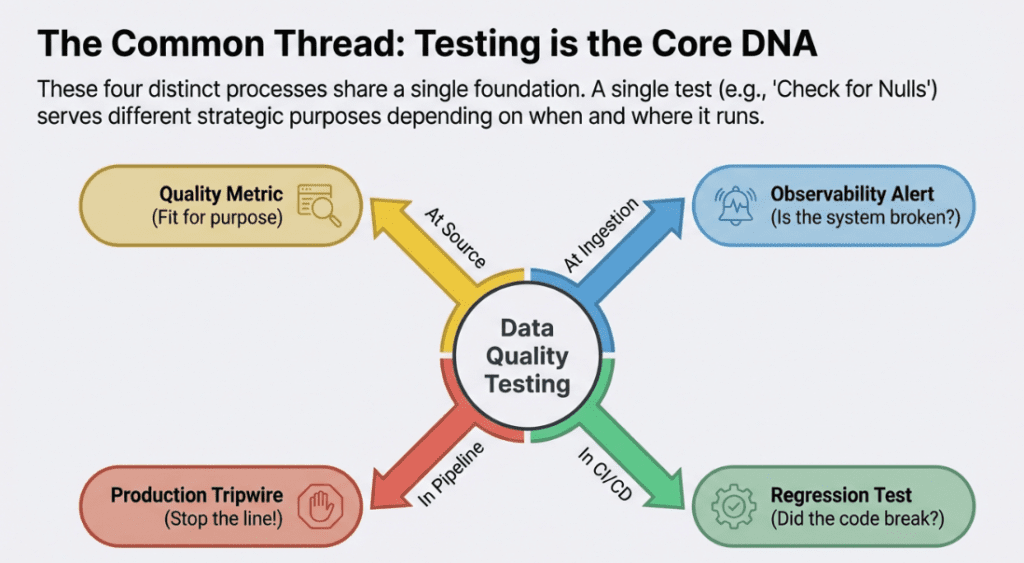

When you examine these four processes carefully, a pattern emerges. Each one depends fundamentally on data quality testing, but each applies testing in a different context and for a different purpose.

Testing techniques overlap significantly across these domains. A test that checks for null values in a critical column might serve data quality in one context, data observability in another, and deployment validation in a third. The difference lies not in the test itself but in when it runs, what triggers it, and what happens when it fails.
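
The following Python sketch shows one null check reused in three of these contexts. Only the wrapper around the check changes: a score for a dashboard, an alert for an engineer, or a pass/fail gate for a deployment. The function names, the 5 percent alert threshold, and the sample rows are assumptions made for illustration.

```python
from typing import Any, Optional

def null_fraction(rows: list[dict[str, Any]], column: str) -> float:
    """The shared test logic: what share of rows has no value in `column`."""
    if not rows:
        return 0.0
    return sum(1 for r in rows if r.get(column) is None) / len(rows)

def quality_score(rows, column) -> float:
    """Data quality context: report a score for a dashboard; never blocks."""
    return 1.0 - null_fraction(rows, column)

def observability_alert(rows, column, max_nulls: float = 0.05) -> Optional[str]:
    """Observability context: return a message to send, or None if all is well."""
    frac = null_fraction(rows, column)
    if frac > max_nulls:
        return f"{column}: {frac:.0%} nulls exceeds {max_nulls:.0%}"
    return None

def deployment_gate(rows, column, max_nulls: float = 0.0) -> bool:
    """Deployment context: True lets the CI/CD stage pass."""
    return null_fraction(rows, column) <= max_nulls

# Hypothetical sample: one of four rows is missing its customer_id.
rows = [
    {"customer_id": 1},
    {"customer_id": None},
    {"customer_id": 3},
    {"customer_id": 4},
]

print(quality_score(rows, "customer_id"))
print(observability_alert(rows, "customer_id"))
print(deployment_gate(rows, "customer_id"))
```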

This insight has practical implications for how you structure your testing capabilities. Rather than building separate testing infrastructure for each of these four processes, you can create a unified testing foundation that serves them all. A comprehensive test suite can feed data quality dashboards for source improvement work while simultaneously providing the tripwires needed for data production protection and the regression baselines needed for deployment validation.

Bridging the Gaps Between Processes

Understanding how these four processes relate reveals several gaps that many organizations struggle with.

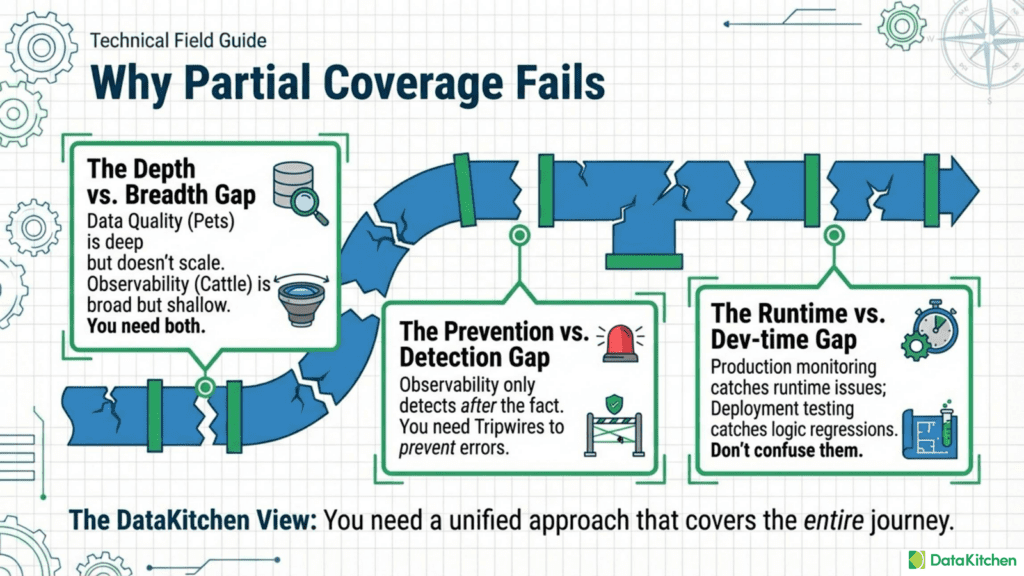

The gap between data quality and data observability is a matter of depth versus breadth. Data quality provides deep testing for critical tables, but cannot scale to cover everything. Data observability provides broad coverage but often misses problems that only detailed tests would catch. Organizations need both approaches, with clear criteria for which tables get the pet treatment and which are managed as cattle.

The gap between data observability and data production is the difference between detection and prevention. Observability tells you when something has already gone wrong. Data journey observability with tripwires can stop errors from propagating in the first place. Organizations that rely solely on after-the-fact detection will always be playing catch-up, fixing problems after customers have already been affected.

The gap between data production and deployment testing is about runtime versus development time. Data production monitoring catches problems during actual production runs. Deployment testing catches problems before code even reaches production. Both are necessary because some problems manifest only at scale in production environments, while others can and should be caught earlier in development.

Building a Comprehensive Testing Strategy

Given that testing underlies all four of these critical processes, how should data teams approach building their testing capabilities?

The first step is recognizing that testing is not a one-time project but an ongoing capability that requires investment and maintenance. Your test suite will need to evolve as your data assets, pipelines, and business requirements change. Building tests is not enough; you also need processes for reviewing, updating, and retiring tests as circumstances warrant.

The second step is prioritizing test coverage based on business criticality. Not every table needs the same level of testing attention. Your most critical datasets, the ones that drive key business decisions or feed customer-facing applications, deserve the intensive pet treatment. Less critical tables can be managed with a lighter-touch cattle-style observability approach. The key is making this prioritization explicit rather than letting it happen by accident.
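
One way to make the prioritization explicit is to write the rule down as code or configuration. The Python sketch below is only an example of the idea: the two criticality flags, the `TEST_PLAN` contents, and the table names are hypothetical, and your own criteria will differ.

```python
# What each tier receives. The specific lists are illustrative assumptions.
TEST_PLAN = {
    "pet": ["dimension tests", "business-rule tests", "quality dashboard", "anomaly monitors"],
    "cattle": ["anomaly monitors"],
}

def assign_tier(table: dict) -> str:
    """Explicit rule: a table is a 'pet' if it feeds customer-facing
    applications or drives key business decisions; otherwise 'cattle'."""
    if table["customer_facing"] or table["drives_key_decisions"]:
        return "pet"
    return "cattle"

# Hypothetical catalog entries.
catalog = [
    {"name": "billing.invoices", "customer_facing": True, "drives_key_decisions": True},
    {"name": "staging.clickstream_raw", "customer_facing": False, "drives_key_decisions": False},
    {"name": "finance.revenue_daily", "customer_facing": False, "drives_key_decisions": True},
]

plan = {t["name"]: assign_tier(t) for t in catalog}
for name, tier in plan.items():
    print(f"{name}: {tier} -> {', '.join(TEST_PLAN[tier])}")
```

Once the rule lives in one reviewable place, a table moving from cattle to pet becomes a deliberate decision that someone can see and question.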

The third step is to integrate testing into your production processes rather than treat it as a separate activity. Tests should run automatically as part of your data pipelines, with clear tripwires that halt processing when critical thresholds are breached. This requires both technical integration with your orchestration tools and organizational agreement on which severity of test failure should stop production.
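
The agreement on which severity stops production can be encoded as a small policy function. The sketch below assumes three severity levels and a default of halting only on critical failures; the level names, `apply_policy`, and `PipelineHalted` are hypothetical and not taken from any specific tool.

```python
from enum import IntEnum

class Severity(IntEnum):
    INFO = 1
    WARNING = 2
    CRITICAL = 3

class PipelineHalted(Exception):
    """Raised when a failed test is severe enough to stop production."""

def apply_policy(failures, halt_at: Severity = Severity.CRITICAL) -> list[str]:
    """`failures` is a list of (test_name, severity) pairs for failed tests.

    Raise if any failure is at or above `halt_at`; otherwise return the
    names of the failed tests so they can be logged or sent as warnings.
    """
    blocking = [name for name, severity in failures if severity >= halt_at]
    if blocking:
        raise PipelineHalted("halting on: " + ", ".join(blocking))
    return [name for name, _ in failures]

# A warning-level failure is reported but does not stop the run.
print(apply_policy([("row count drift", Severity.WARNING)]))

# A critical failure trips the wire.
try:
    apply_policy([("primary key duplicates", Severity.CRITICAL)])
except PipelineHalted as err:
    print(err)
```

Keeping `halt_at` as a parameter lets a team start by halting only on critical failures and tighten the policy later without rewriting the tests themselves.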

The fourth step is connecting testing to your deployment workflow. Every code change should trigger appropriate regression and impact tests before it can reach production. This requires investment in test automation and CI/CD integration, but the payoff in prevented production incidents is substantial.

The fifth step is to build feedback loops that use test results to drive improvement. Test failures should not just trigger alerts; they should inform root-cause analysis and drive permanent fixes. Data quality scores should drive conversations with upstream data sources. Deployment test failures should prompt an investigation into why the problem was not caught earlier in development.

The Cost of Inadequate Testing

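Organizations that underinvest in data quality testing pay the price across all four of these processes. The 1:10:100 rule applies: as a rule of thumb, a problem that costs one unit to prevent at the source costs about ten to correct downstream and about a hundred once it causes a failure. Find problems early to avoid paying the high price of failure.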

Without adequate source data quality testing, problems at the origin propagate through your entire data estate, multiplying their impact at every step. You might clean up the symptoms downstream, but the root cause continues generating new problems.

Without adequate data observability testing, embarrassing errors slip through to your customers. You get blamed for obvious problems that automated checks should have caught. Your credibility with business stakeholders erodes over time.

Without adequate data production testing, errors in your transformation logic or pipeline configurations reach production systems. You might not even know about the problems until a customer reports incorrect numbers in a dashboard or a model starts making bad predictions.

Without adequate deployment testing, code changes introduce regressions that break things that used to work. Your team spends increasing amounts of time on emergency fixes and production support, leaving less time for the new development work that the business is waiting for.

The cumulative effect of these testing gaps is a data platform that becomes increasingly unreliable and a data team that becomes increasingly reactive. Instead of building new capabilities and delivering business value, you spend your time fighting fires and apologizing for problems.

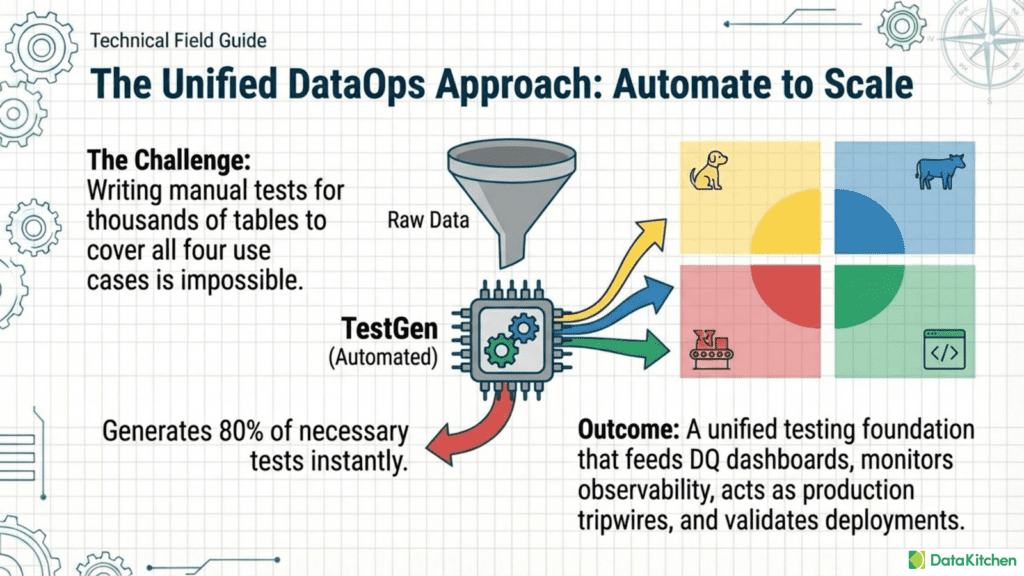

The Testing Challenge and a Practical Solution

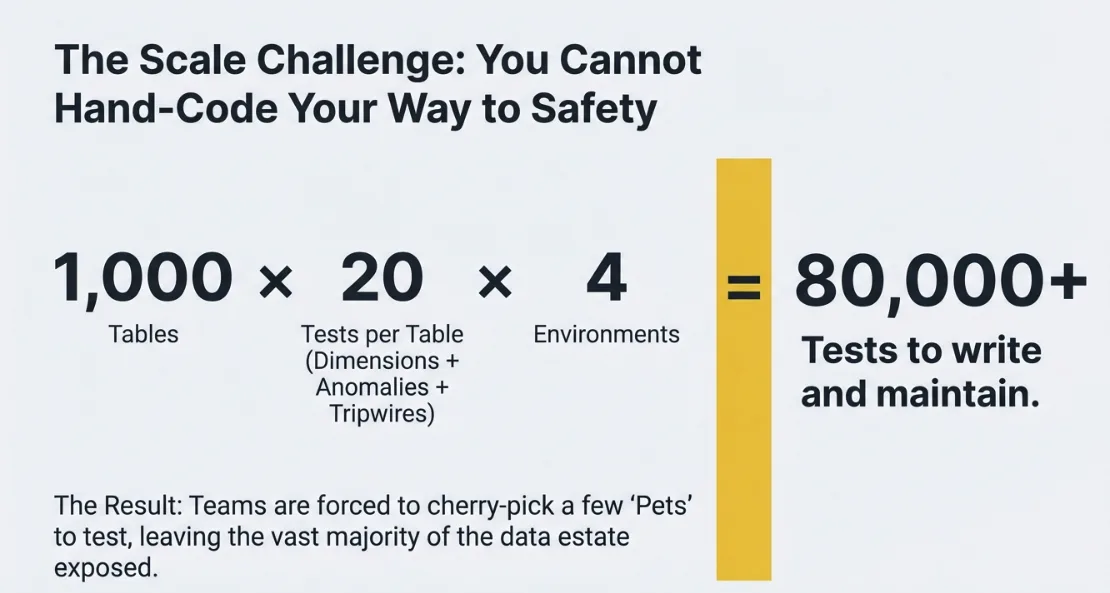

By now, the central role of data quality testing should be clear. Whether you are improving source data quality, implementing data observability across hundreds of tables, building tripwires into your data production pipelines, or validating code deployments, you need tests. Lots of tests. Tests that cover your data comprehensively and catch problems before they reach your customers.

Here is the problem: achieving adequate test coverage across all four of these use cases is extraordinarily time-consuming. Writing tests manually does not scale. A single table might need dozens of tests to cover all relevant data quality dimensions, detect anomalies, serve as a production tripwire, and provide a regression baseline. Multiply that by hundreds or thousands of tables, and you are looking at months of work just to configure or code the tests you need. Most teams never get there. They write tests for a handful of critical tables and hope for the best with everything else.

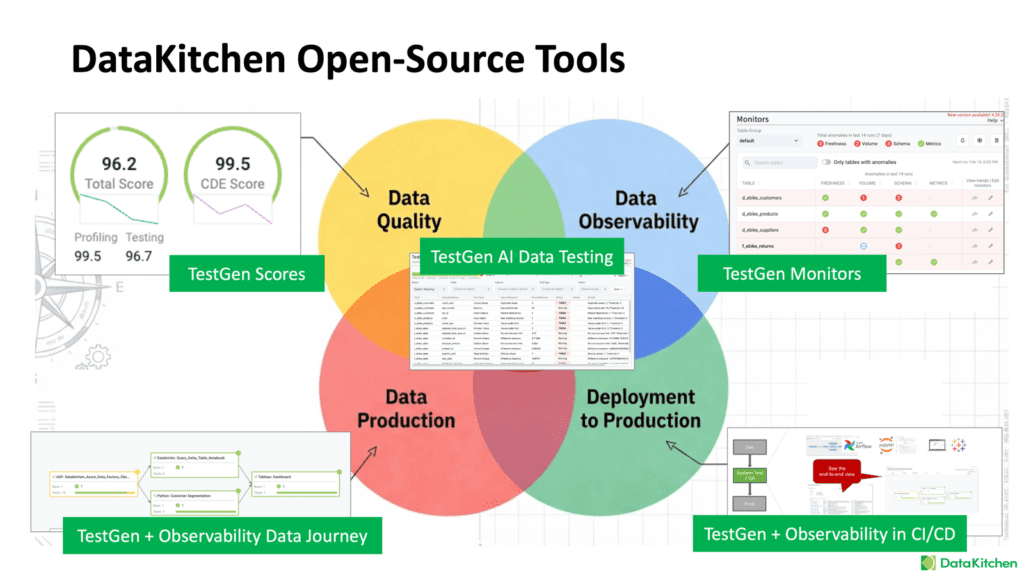

This is where automation becomes essential. You need a tool that can analyze your data and automatically generate the tests you need. DataKitchen’s open source TestGen does exactly this. TestGen profiles your data and creates comprehensive test suites that address all four testing use cases: data quality scoring, observability monitoring, production tripwires, and deployment regression testing. Instead of spending months writing tests by hand, you can generate eighty percent of the tests you need in just a few clicks.

The remaining twenty percent, the tests that encode specific business rules and domain knowledge unique to your organization, still require human input. But starting with a solid foundation of automatically generated tests means your team can focus their limited time on those high-value custom tests rather than writing boilerplate checks that a tool can handle. This is how you achieve the comprehensive test coverage that makes all four of these critical processes work effectively. This is how you move from reactive firefighting to proactive data quality management.
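
To show the general idea of generating tests from a data profile, here is a deliberately simple Python sketch. It is not TestGen's implementation or API; `profile_column`, `suggest_tests`, and the sample columns are hypothetical, and a real profiler would consider many more characteristics than nulls, distinct counts, and ranges.

```python
def profile_column(values: list) -> dict:
    """Collect a few basic statistics about a column.
    Assumes non-null values are mutually comparable (e.g. all numbers)."""
    non_null = [v for v in values if v is not None]
    return {
        "row_count": len(values),
        "null_count": len(values) - len(non_null),
        "distinct_count": len(set(non_null)),
        "min": min(non_null) if non_null else None,
        "max": max(non_null) if non_null else None,
    }

def suggest_tests(column: str, profile: dict) -> list[str]:
    """Propose candidate tests from what the profile observed.
    These are suggestions for a human to review, not verified rules."""
    tests = []
    if profile["row_count"] and profile["null_count"] == 0:
        tests.append(f"{column} is never null")
    if profile["row_count"] and profile["distinct_count"] == profile["row_count"]:
        tests.append(f"{column} is unique")
    if profile["min"] is not None:
        tests.append(f"{column} is between {profile['min']} and {profile['max']}")
    return tests

# Hypothetical columns from an orders table.
columns = {
    "order_id": [1, 2, 3, 4],
    "amount": [10.0, None, 25.5, 10.0],
}

for name, values in columns.items():
    for test in suggest_tests(name, profile_column(values)):
        print(test)
```

Generated checks like these describe what the data looked like when it was profiled. The business rules that say what the data should look like are the part that still needs your team.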

Frequently Asked Questions (TL;DR)

What Is the Summary of Data Quality Testing at the Core of Four Very Important Processes?

Having adequate data quality test coverage is very important to four key activities that every data and analytics team does:

Data Quality: Improving the source quality of your data and making sure it is fit for purpose.

Data Observability: Catching data errors across large numbers of tables so that you do not get blamed for obvious data problems, such as freshness issues.

Data Production: Monitoring the data journey and stopping it with tripwires before errors reach production.

Deployment to Production: You have a team of data engineers, scientists, and others who are writing code. You want to ensure the code is adequately tested before it gets into production. This means going beyond unit tests into full regression and functional tests in development.

What Is the Summary of Data Quality Testing for Each of the Processes?

Data Quality Process

Metaphor: Pets

Action Tagline: Persuading the Source

Problem: Poor source data quality, not fit for purpose

Where: Find errors at the point of origin.

Goals: Improve source data quality, one table at a time

How: Lots of specific tests, DQ dimensions, data quality dashboards, and detailed checks. Focus on testing and scoring, then on influencing your data sources

DataKitchen Viewpoint: DataOps Way to Data Quality; focused, test-driven data quality dashboards; influence skills

Testing Limitations: Errors in downstream tools and deliverables are invisible; customer-delivered data analytic results may be incorrect

Benefit: Simple Data Level Integration

Data Observability Process

Metaphor: Cattle

Action Tagline: Polling For Perversity

Problem: Unfound data errors in large sets of data tables, getting blamed for ‘obvious data problems’

Who: Data Ingestion Engineer

Where: Find errors at the point of Data Ingestion

How: Just poll your database and ping people when there may be an error, polling 100s of tables to find variance: frequency, volume, schema, drift, and time series anomaly detection

Goals: Find errors in large databases with 1000s of tables before your customer does. Reduce data downtime

DataKitchen Viewpoint: Does not stop errors from getting into production; no production tripwire, no Data Journey observability

Testing Limitation: Finds data errors after they get into production, irrelevant pings, and partial test coverage

Benefit: Simple Data and Data Lineage Level Integration

Data Production Process: Data Journey Observability

Metaphor: Data Production Assembly Line

Action Tagline: Protecting Production

Problem: How do you stop errors in data and deliverables before they get into production?

Who: Data Engineering Team

Where: Find errors during data production and place a tripwire to stop processing

How: Put tests and monitors as part of the data production processing steps. Use a test-aware orchestrator or a Data Journey Observability tool. Full test coverage

Goals: Find all errors during data production and place a tripwire to stop processing so that no errors get into production

DataKitchen Viewpoint: You need to test every table in every zone. Monitor every tool and integrate them into a single view of your process, with tripwires to stop production at every step of the Data Journey. Data Lineage is insufficient.

Testing Limitation: Requires both data and tool integration

Benefit: Stop customer-visible errors in data, reports, and models/AI

Deployment to Production Process: Regression & Impact Testing Of New Code

Metaphor: Code production Assembly Line

Action Tagline: Protecting the Push to Production

Problem: Poor code gets into production, and your customers notice.

Who: Data Engineering team; DataOps Engineer who controls the deployment process

Where: Find errors in code during development and deployment to production

How: Regression and impact testing in development and CI/CD

Goals: Stop coding regressions before they get deployed to production

DataKitchen Viewpoint: Unit tests and manual business reviews are not enough; you need a fully automated system with adequate test coverage

Testing Limitation: Requires test data, tools, and CI/CD Integration

Benefit: Stop coding regressions before they get deployed to production; every regression error is 100x more expensive to fix in production