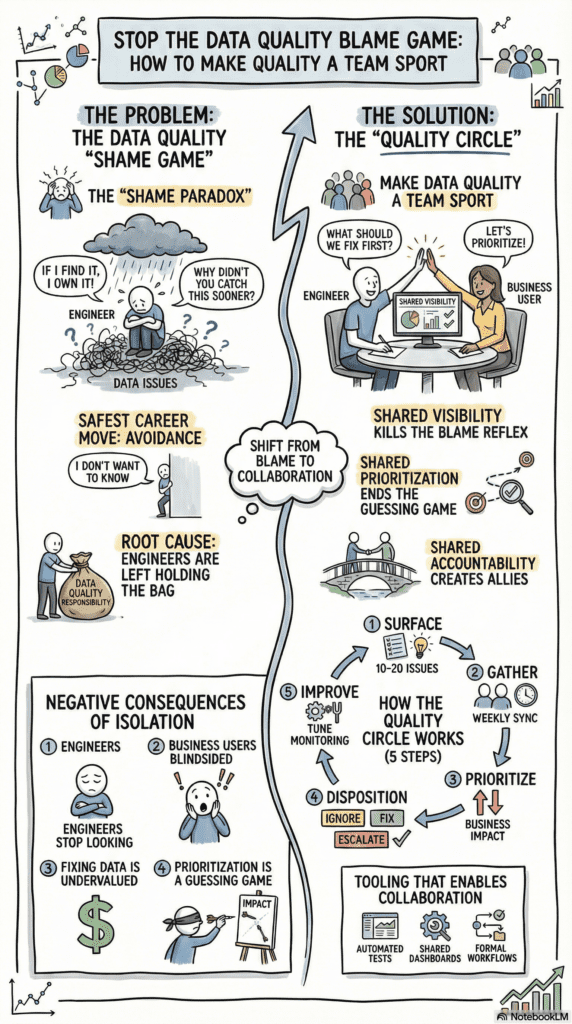

Here’s something we don’t talk about enough in data engineering: shame. The quiet, corrosive feeling that if something is wrong with the data, it must be your fault. And if you’re the one who finds the problem, you’re the one who’ll have to answer for it.

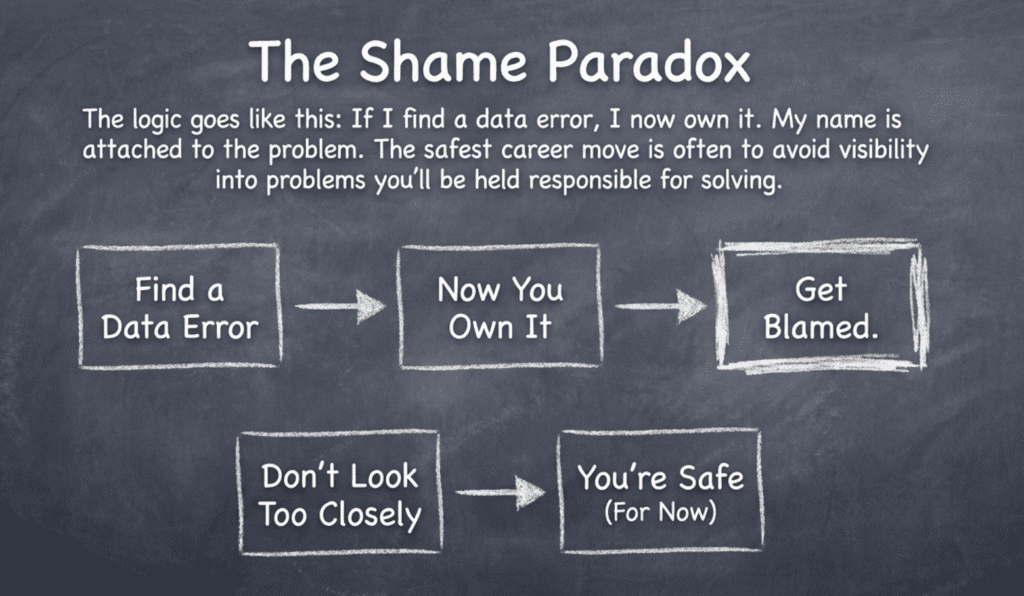

This shame creates a strange and painful paradox. The very people best positioned to catch data quality issues — the data engineers closest to the pipelines — often develop an unspoken preference not to look too closely. Not because they don’t care, but because they’ve learned that discovering a problem means owning the blame for it.

The Real Problem: Engineers Holding the Bag Alone

When data quality responsibility lives entirely within the engineering team, every error feels personal. You’re expected to anticipate problems before they happen, catch them instantly when they do, and fix them without anyone noticing there was ever an issue. The business sees clean dashboards and timely reports. They don’t see the anxiety underneath.

This isolation creates a no-win situation:

- Engineers are blamed for errors they discover, so they stop looking

- Business users are blindsided by issues they never saw coming

- Fixing data is treated as free (“just clean it up”) because stakeholders don’t see the work

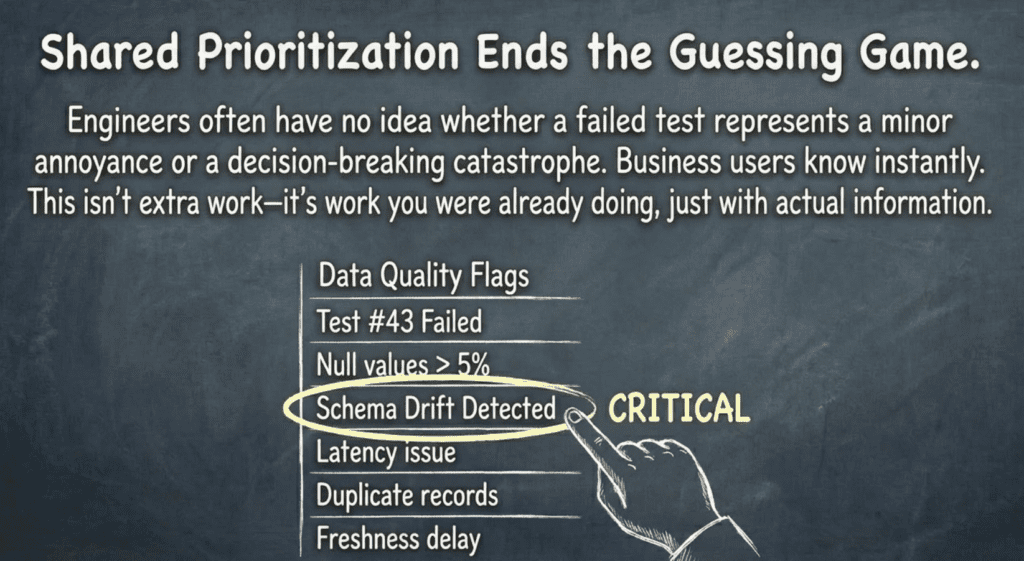

- Prioritization is a guessing game because engineers have no idea which errors actually hurt the business

And when something does slip through? The questions come. Why didn’t you catch this? How long has this been happening? The unspoken accusation hangs in the air: you should have known.

Over time, this creates a defensive posture. If I don’t find the error, I can’t be blamed for it. If someone else discovers it later, at least I’m not the face of the failure. It’s not a conscious strategy — it’s an emotional survival mechanism. The shame of being blamed is so uncomfortable that avoiding knowledge becomes preferable to seeking it out.

The tragedy is that this makes everything worse. Problems grow in the dark. Small issues become big ones. And when they finally surface — as they always do — the blame lands harder than it would have if someone had felt safe enough to look.

The Fix: Make It a Team Sport

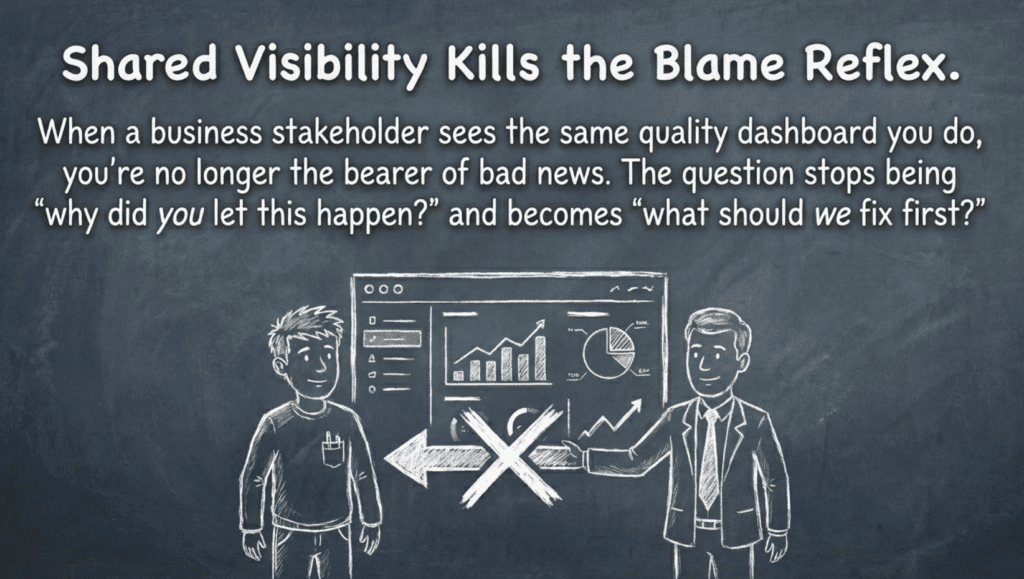

But here’s the hopeful truth: shame thrives in isolation, and it dissolves in partnership.

When data engineers and business users look at quality problems together, something remarkable happens. The engineer is no longer the sole bearer of bad news, nervously presenting failures to stakeholders who’ve been kept in the dark. Instead, everyone is looking at the same reality at the same time. The dynamic shifts from “what did you miss?” to “what should we tackle first?”

This isn’t just a nice idea — it fundamentally changes the emotional texture of the work. When a business analyst sits beside you reviewing a list of anomalies, they bring context you don’t have. They know instantly whether a flagged issue is a minor quirk or a decision-breaking catastrophe. They understand which upstream teams need to hear about source data problems. And critically, they become co-owners of the prioritization. The decision about what to fix isn’t yours alone to defend.

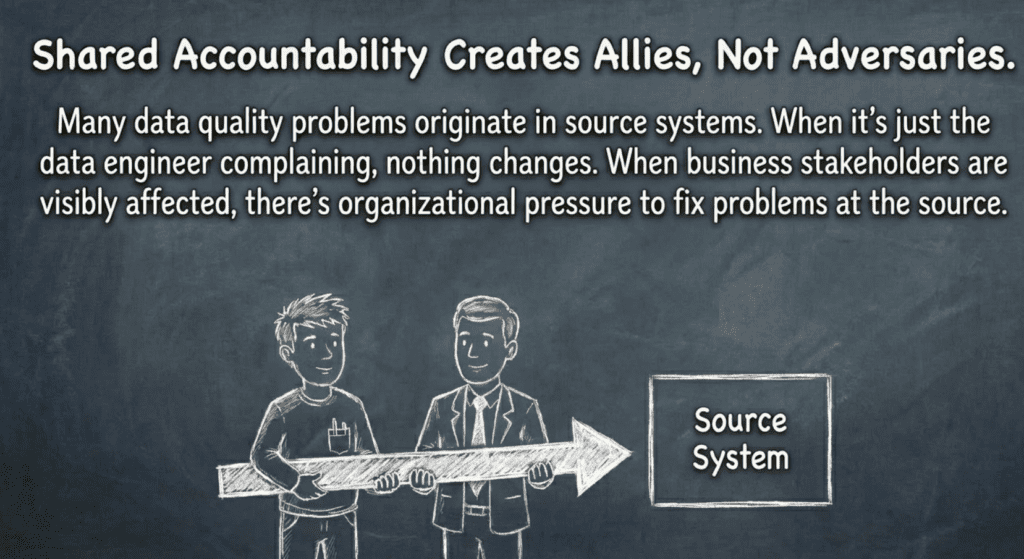

Shared visibility also creates shared accountability in the best sense. When stakeholders see the volume and complexity of data quality issues firsthand, they stop treating fixes as free. They understand the work. They become advocates rather than critics. And when problems originate in systems outside your control, you have allies who can push for fixes at the source — not just downstream workarounds that you’ll be blamed for when they’re not perfect.

Building a Culture of Collaborative Quality

The practical path forward is more straightforward than it might seem. Start by surfacing a manageable set of quality issues — perhaps fifty or a hundred flagged problems — and invite business stakeholders into a regular review. This could be a weekly half-hour meeting, an async Slack thread, or a shared dashboard where people can comment and discuss. The format matters less than the principle: nobody looks at these problems alone.

In these sessions, you work together to identify what actually matters. Out of a hundred automated flags, maybe ten represent genuine business risk. The engineers can explain what the tests are catching; the business users can explain what the downstream impact would be. Together, you assign each issue a disposition: this one is noise and can be tuned out, this one is critical and needs immediate attention, this one reflects a source system problem that needs to be escalated. Every decision is made collectively and documented transparently.
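If it helps to picture what "documented transparently" could mean, here is a minimal sketch of a shared decision log. Everything in it is an assumption made for illustration: the `Decision` fields, the flag IDs, and the test names are hypothetical and are not TestGen's data model or API.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Disposition(Enum):
    NOISE = "noise"        # false alarm; tune or mute the check
    CRITICAL = "critical"  # genuine business risk; fix now
    ESCALATE = "escalate"  # source system problem; hand to the upstream owner


@dataclass(frozen=True)
class Decision:
    flag_id: str                 # hypothetical ID of one flagged issue
    test_name: str               # hypothetical name of the check that fired
    disposition: Disposition
    business_impact: str         # context supplied by the business reviewer
    decided_by: tuple[str, ...]  # everyone in the review, never one person


def summarize(decisions: list[Decision]) -> dict[str, int]:
    """Count decisions per disposition so the review ends with a shared tally."""
    counts = Counter(d.disposition.value for d in decisions)
    return {disp.value: counts.get(disp.value, 0) for disp in Disposition}


# Illustrative entries only; a real log would come out of your own review.
review_log = [
    Decision("F-001", "null_rate_customer_email", Disposition.NOISE,
             "Email is optional for wholesale accounts", ("engineer", "analyst")),
    Decision("F-002", "order_total_negative", Disposition.CRITICAL,
             "Feeds the weekly revenue report", ("engineer", "analyst")),
    Decision("F-003", "region_code_unknown", Disposition.ESCALATE,
             "Codes are assigned in the CRM, not in the pipeline", ("engineer", "analyst")),
]

print(summarize(review_log))
```

The `decided_by` field carries the point of the whole exercise: each record names the group that made the call, so no single person is left defending it later.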

Over time, these collaborative reviews become a feedback loop that improves the entire system. You learn which tests generate false alarms and which catch real problems. You tune the automated checks to be smarter and quieter. You build institutional knowledge about what quality means for your specific data and your specific business. And perhaps most importantly, you build relationships. The business users who once felt like distant judges become partners who understand and appreciate what you’re doing.

How Open Source TestGen Supports This Shift

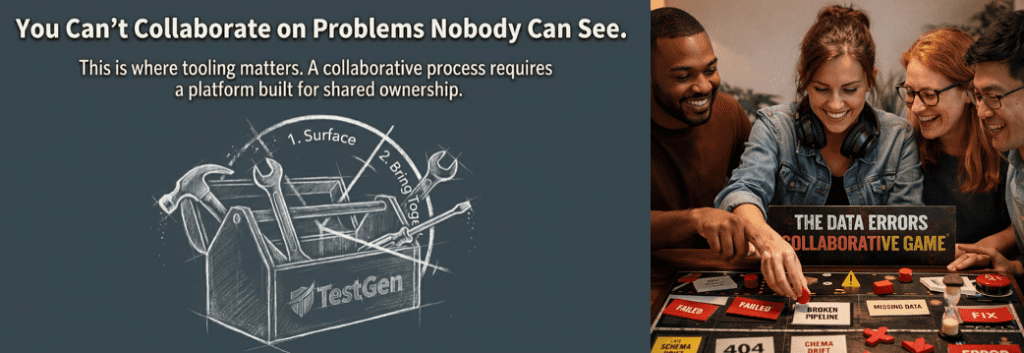

Tooling alone won’t transform your culture, but the right tools make transformation possible. DataKitchen’s TestGen is designed with collaborative quality in mind.

The automated test generation means you get comprehensive coverage without having to anticipate every possible failure mode. You discover problems you didn’t know to look for, and you’re not shamed for missing tests you couldn’t have predicted. The stakeholder-accessible dashboards mean business users can see quality status directly, removing the engineer from the uncomfortable role of sole messenger. Disposition workflows give your quality circle a structured way to review, prioritize, and resolve issues together, with decisions tracked and visible. And audit trails in the enterprise version document the collaborative process — not as surveillance, but as proof that quality decisions were thoughtful and shared.

From Shame to Confidence

The shame cycle in data quality isn’t inevitable. It’s a symptom of isolation, of engineers bearing impossible expectations alone while the people who could help remain unaware of the challenges.

The antidote is simple in concept and profound in effect: stop hiding the problems, and stop facing them alone. When you build a quality circle that includes both the people who build the data systems and the people who depend on them, you replace shame with solidarity. You replace defensiveness with curiosity. You replace the exhausting performance of perfection with the honest, hopeful work of continuous improvement.

You don’t have to carry this alone. Invite your stakeholders in. Look at the problems together. And discover that the thing you were afraid of — visibility — is actually the thing that sets you free.

Ready to build a collaborative approach to data quality? Check out DataOps Data Quality TestGen — open source tooling designed for teams who believe quality is a shared responsibility.

Frequently Asked Questions

The Quality Circle: What does this look like in practice?

Surface a manageable list of issues. Start with 10-20 flagged quality problems — enough to be meaningful, not so many that it’s overwhelming.

Bring engineers and business users together. This can be a weekly 30-minute review, an async Slack thread, or a shared dashboard with commenting. The format matters less than the participation.

Collaboratively pick what matters. Of 20 flags, only a few actually impact business decisions. The business users know which ones. Without them in the room, engineers are guessing.

Disposition together. “This is noise, ignore it.” “This is critical, fix it now.” “This is a source system problem; escalate it.” Every decision is shared, documented, and defensible.

Learn and improve. Those disposition decisions become training data. Which tests cry wolf? Which catch real problems? Tune the system over time so it gets smarter and quieter.

What are the key points of this blog post?

Shame drives avoidance. Engineers learn that finding problems means owning the blame, so they stop looking.

Isolation makes it worse. When quality is one team’s burden alone, every error feels personal and inescapable.

Collaboration breaks the cycle. Shared visibility turns “what did you miss?” into “what should we fix first?”

Build a quality circle. Bring stakeholders into regular reviews. Prioritize together. Document decisions collectively. Replace defensiveness with continuous improvement.

How can teams effectively transition from a reactive to a proactive data culture?

First, gather facts showing there is a problem. Using a tool like Open Source TestGen, you can profile your data, get instant data hygiene test results, and have a data quality dashboard in less than an hour.

Second, show that adding data quality tests is not particularly time-consuming. Tools like TestGen automatically generate several dozen data quality tests with the push of a button.

Finally, show your team that data quality testing in production is not a burden but a benefit.