Too Much Money, Too Many Vendors, Your Problem

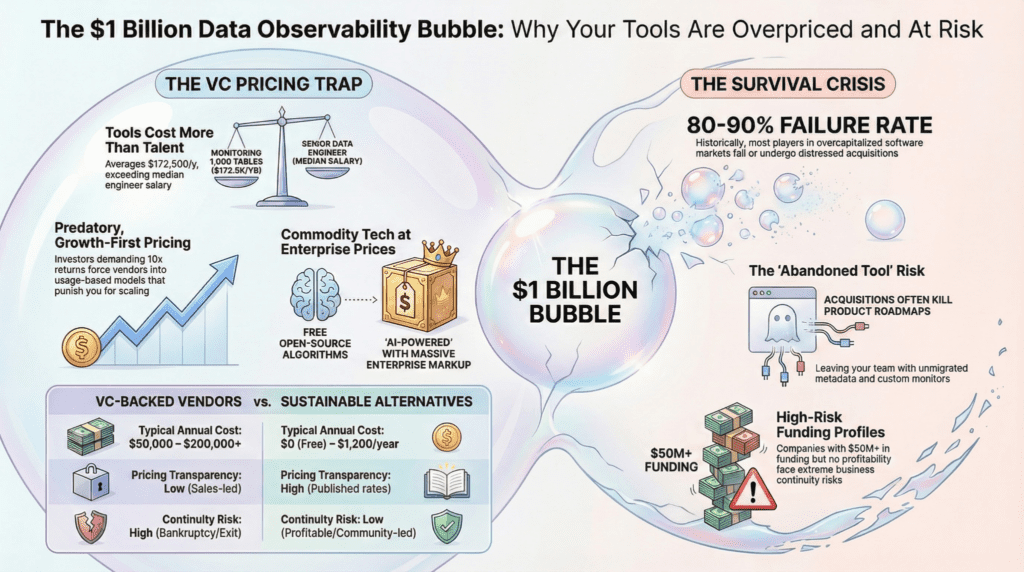

You have probably noticed that your inbox is full of data observability or data quality vendor pitches, your conference has a dozen booths for tools that all look roughly the same, and somehow every budget conversation for any of them starts at six figures. There is a reason for that, and it is not the technology’s complexity. Gartner estimates that poor data quality costs organizations an average of $12.9 million per year. The tools designed to prevent that loss are now priced so that most teams can afford to monitor only a fraction of their data.

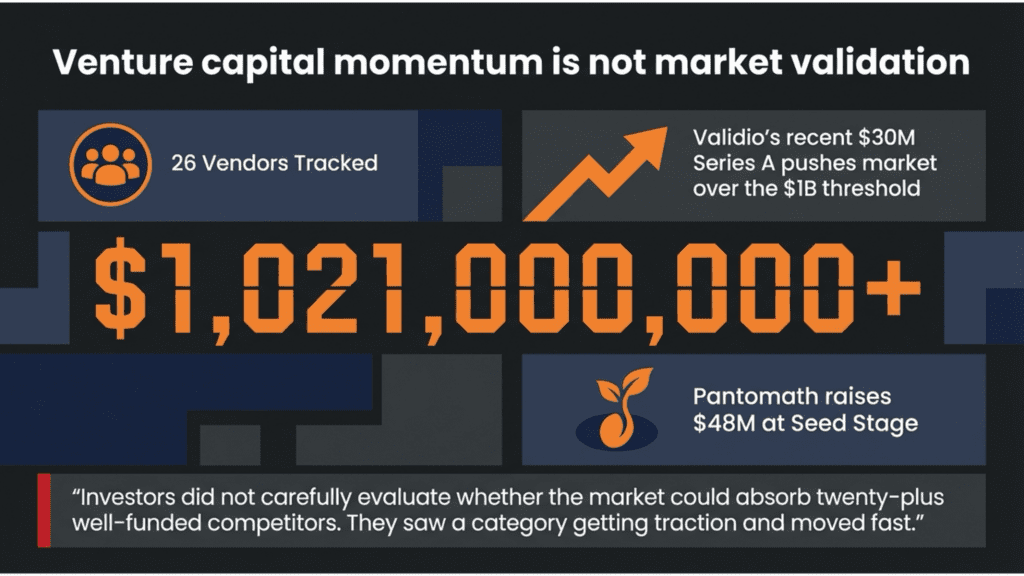

Last week, the data observability and data quality industry crossed a remarkable threshold: over one billion dollars in cumulative venture capital funding, most of it raised in the last five years. The milestone was marked by Validio’s $30 million Series A, a raise framed around strengthening enterprise data quality for AI adoption. If you are a data engineer, a data quality manager, or someone responsible for choosing tools to monitor your organization’s data pipelines, that number matters to you far more than you might realize. It is not a sign of a healthy, maturing market. It is a warning.

Venture capital investing runs on narrative momentum. When a category starts generating headlines, investors pile in, often regardless of whether the underlying economics justify the capital being deployed. The VC pile-on in data observability and data quality began around 2022 and accelerated through 2023. Investors did not carefully evaluate whether the market could absorb twenty-plus well-funded competitors. They saw a category getting traction and moved fast.

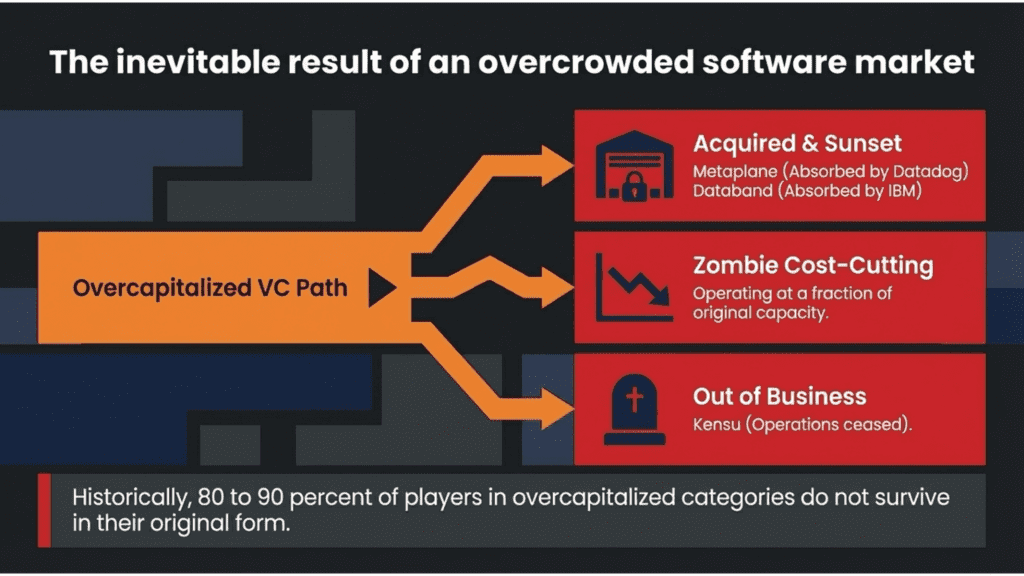

The predictable result is what always follows an overcrowded VC-funded market: the vast majority of companies will either fail outright or be absorbed through distressed acquisitions. Historically, in overcapitalized software categories, roughly 80 to 90 percent of the players do not survive in their original form. Some shut down. Some are acquired for their customer lists, and the product is quietly sunset. Some raise no further capital, survive on whatever revenue they have, and slowly reduce headcount until they become what VCs call ‘zombie companies’.

For your team, this is entirely bad news. The companies that do survive face pressure to increase revenue because their investors need a return on investment. That pressure flows directly to you in the form of price increases, contract restructuring, and feature gating that did not exist when you signed your original agreement.

26 Vendors, $1 Billion, and a Price Increase Clock That Is Already Ticking

Here is the funding status across the major players in the space. These are not abstract numbers. They represent the financial pressure sitting behind every enterprise sales conversation you will have.

The acquisitions of Metaplane, Databand, and, just this week, Synq warrant careful analysis. When a data infrastructure company acquires a point solution, it rarely invests heavily in that product. Founders leave, moving on to their next startup with a feather in their cap. Usually, the acquiring company aims to eliminate a competitive overlap, absorb a customer base, or add a feature to an existing product bundle. In each case, the independent roadmap for the acquired product typically comes to an end. If you were a Metaplane customer, you are now a Datadog customer, whether you wanted that or not.

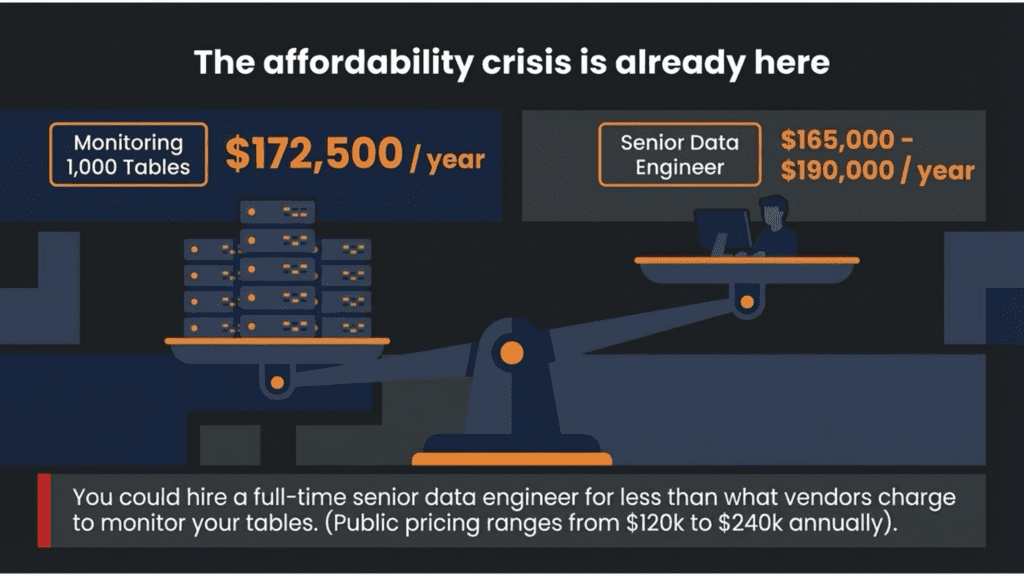

Monitoring 1,000 Tables Costs More Than Hiring an Engineer

The affordability crisis in data observability is here. The funding situation has produced a concrete, measurable problem for buyers today. The industry average cost to monitor 1,000 tables is approximately $172,500 per year.

For context, the median annual salary of a senior data engineer in the United States is roughly $165,000 to $190,000. You could hire a full-time engineer for less than what some vendors charge to monitor your tables. A survey of publicly available pricing from major data observability vendors for a 1,000-table monitoring scenario found a range of approximately $120,000 to $240,000 annually. When the floor is $120,000, teams stop asking whether to monitor everything and start asking which tables they can afford to leave dark.

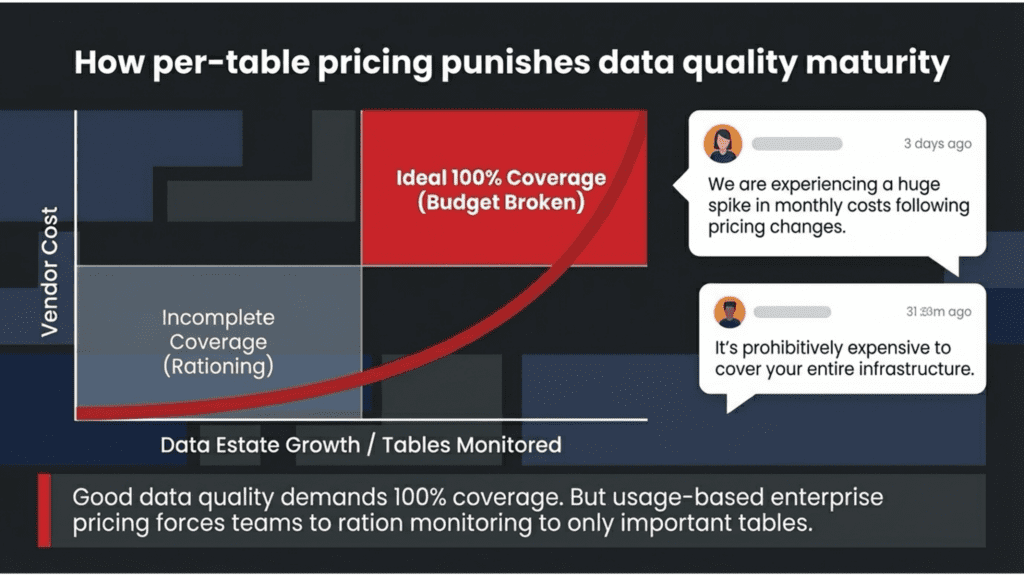

Usage-based pricing creates a perverse outcome for teams trying to do the right thing. Instead of monitoring everything, they are forced to pick and choose. They decide which tables are “important enough” to monitor and leave the rest uncovered. Limiting test coverage is the opposite of good data quality practice. In any serious quality assurance framework, coverage is the point. You do not get to choose which parts of your factory floor to inspect and which to ignore.

To be fair, Monte Carlo and Acceldata primarily sell to Fortune 500 data organizations with hundreds of engineers, multi-cloud environments, and dedicated data infrastructure budgets. But the pricing model does not stay in that lane. The same per-table pricing that lands at $500,000 for a large enterprise lands at $100,000 for a mid-market team with 600 tables, which is most of the teams reading this.

In today’s AI-driven world, data quality is no longer a luxury. But most data teams are caught in a vise: the cost of data quality is unrealistic, and the price of failure is unacceptable. It’s not just that enterprise data quality software is outrageously priced. It’s that data quality needs are too often underestimated and underfunded, until it’s too late. The fact is that data quality in the real world has to be achievable, affordable, and efficient.

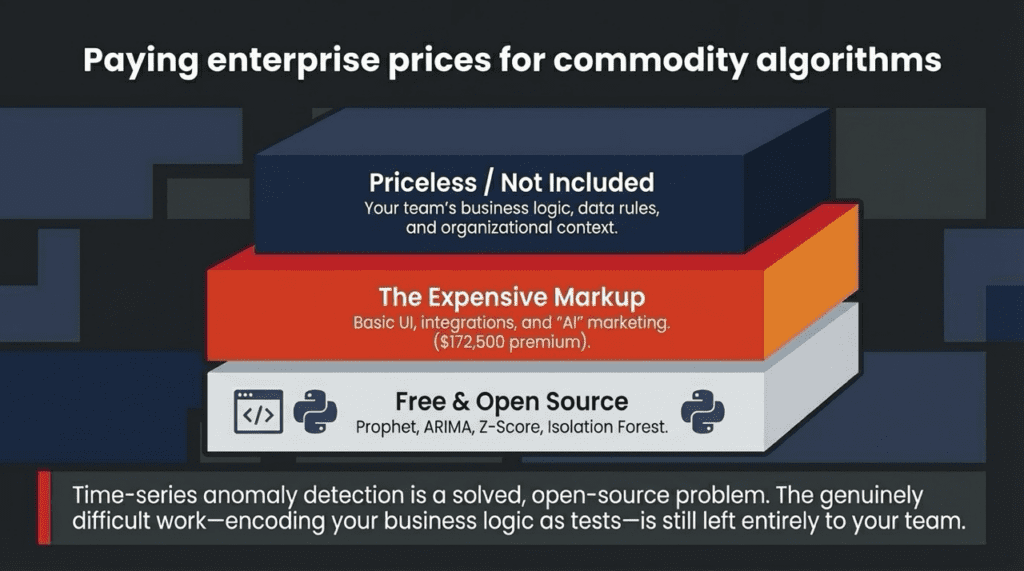

You Are Paying Enterprise Prices for Commodity Algorithms

Every data observability vendor on that list runs on the same open-source algorithms: Facebook’s Prophet, ARIMA, Z-score, and Isolation Forest. Their Python implementations are on GitHub. A working implementation is half a page of code. The $1 billion in VC funding did not yield better algorithms. It produced better sales teams and higher price targets.
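To make the “half a page of code” claim concrete, here is a minimal sketch of a Z-score check on daily row counts, using only Python’s standard library. The function name, the threshold of 3, and the sample row counts are illustrative assumptions, not any vendor’s implementation.

```python
from statistics import mean, stdev

def zscore_anomalies(history, latest, threshold=3.0):
    """Return (is_anomaly, z) for `latest` measured against `history`.

    history: past daily row counts (at least two values).
    threshold: absolute z-score above which a value is flagged; 3.0 is
    a common convention, assumed here for illustration.
    """
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation
    if sigma == 0:
        # Flat history: z is undefined, so flag any departure from the constant.
        return latest != mu, float("nan")
    z = (latest - mu) / sigma
    return abs(z) > threshold, z

# Hypothetical row counts for one table over the past week.
row_counts = [10_120, 10_340, 9_980, 10_210, 10_050, 10_400, 10_150]

flagged, z = zscore_anomalies(row_counts, latest=4_200)
print(f"anomaly={flagged}, z={z:.1f}")
```

A production check would also need seasonality handling and a rolling window, which is where Prophet or ARIMA come in, but the core comparison is no more than this.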

Most vendors wrap these free components in a UI, add data connectors, and go to market as “AI-powered enterprise software.” The mere mention of AI in a vendor’s pitch is not evidence of proprietary technology. It is evidence of a higher target price point, set by investors who need a return on $1 billion. That is what produces high-cost, extractive, usage-based pricing.

The anomaly detection is the easy part. The hard part is knowing that your revenue table should never have a negative value, or that a specific column cannot be null after a certain date. None of that knowledge lives in an algorithm. It lives in your team. The expensive vendors are not selling you that knowledge. They are selling you the easy part at a premium price.
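Once someone on your team states rules like these, writing them down is short work. The sketch below checks both examples in plain Python over a list of dictionaries. The column names (`amount`, `region`, `order_date`), the cutoff date, and the sample rows are all hypothetical.

```python
from datetime import date

def check_revenue_rules(rows, cutoff=date(2024, 1, 1)):
    """Return a list of human-readable rule violations.

    Rule 1: `amount` must never be negative.
    Rule 2: `region` must not be null for rows dated on or after `cutoff`.
    Both rules and the cutoff are illustrative assumptions.
    """
    violations = []
    for i, row in enumerate(rows):
        if row["amount"] is not None and row["amount"] < 0:
            violations.append(f"row {i}: negative amount {row['amount']}")
        if row["order_date"] >= cutoff and row["region"] is None:
            violations.append(f"row {i}: region is null on or after {cutoff}")
    return violations

# Hypothetical sample data.
rows = [
    {"amount": 120.0, "region": "EMEA", "order_date": date(2024, 3, 1)},
    {"amount": -15.0, "region": "APAC", "order_date": date(2024, 3, 2)},
    {"amount": 80.0, "region": None, "order_date": date(2023, 6, 9)},  # allowed: before cutoff
    {"amount": 95.0, "region": None, "order_date": date(2024, 4, 5)},  # violates rule 2
]

for problem in check_revenue_rules(rows):
    print(problem)
```

The code is trivial. Knowing that the cutoff is January 2024 and that `region` is the column that matters is the part no algorithm supplies.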

Building it yourself is an option (until it becomes a second job). dbt tests and a few Python scripts can get a small team meaningful coverage for close to zero dollars. Maybe you will just ask Claude. But the maintenance compounds quietly. Alerting breaks when pipeline topology changes. The profiling logic that no one documented becomes a Friday night incident. The business analyst who needs to understand why last Tuesday’s report was wrong has no UI to adjust the test parameters. And every new engineer who joins inherits a bespoke monitoring stack with no owner, no roadmap, and no community.

Your Invoice Is Paying for Investor Returns, Not Product Value

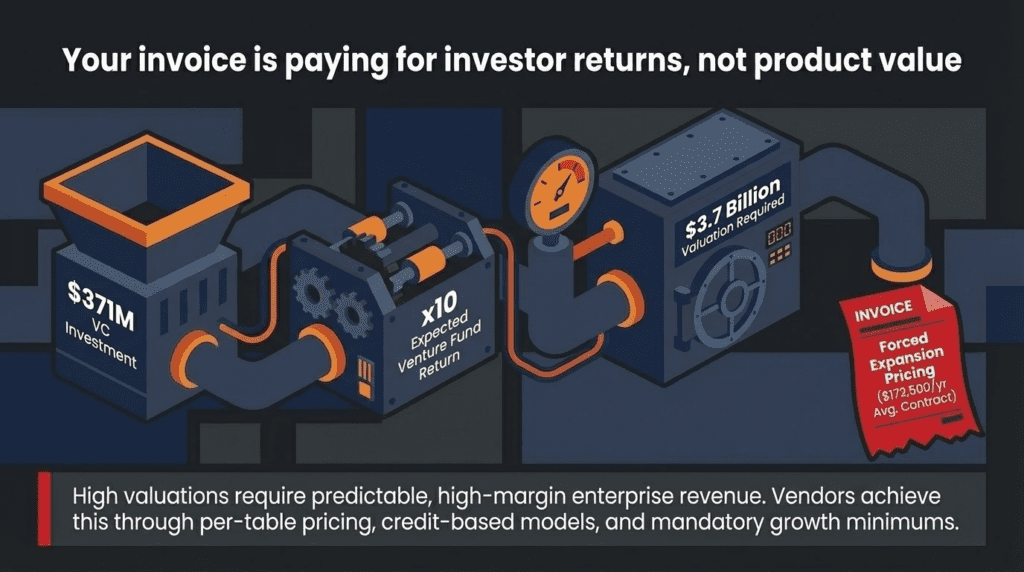

Understanding why these prices exist requires understanding the financial math behind the companies charging them. Monte Carlo has raised $371 million. A typical venture fund models for a 10x return on successful investments. That means investors in Monte Carlo’s later rounds need the company to reach a valuation of several billion dollars to produce the returns they modeled. That valuation requires predictable, high-margin, enterprise-scale revenue.

The way you get to that revenue is not by charging $10,000 a year. It is by charging $120,000 to $200,000 or more, anchored on a pricing model that grows with usage. Per-table pricing, credit-based consumption models, and annual contracts with mandatory growth minimums are not coincidental choices. They are deliberate mechanisms to extract increasing revenue from existing customers year over year.

In 2022, Datadog charged one of its customers (Coinbase) $65 million for one year of enterprise IT observability. This is not a misprint. The next year, the crypto industry hit a sudden bump, hurting Coinbase’s business. As revenue dried up, the company turned its attention to reducing its overly high costs. For IT observability, Coinbase moved away from Datadog and onto an open-source Grafana/Prometheus/ClickHouse stack.

In their VC pitches, Monte Carlo called themselves ‘Datadog for data engineers.’ That was not a functional definition. It was a pricing and valuation vision for their investors. In its latest investor deck, Datadog discussed its land-and-expand pricing model: customers start at $50K and expand to over $500K per year on average, with more than 600 customers at $1M+ and 34 at $10M+. Data is the lifeblood of many companies, and those six-, seven-, and eight-figure numbers are what’s in your future with the current data observability market.

The boards of data observability vendors are looking at the parallels with IT observability. “One customer went from $50K to $24 million in 15 years, growing 48 percent annually.” A RedMonk analyst cited the statistic: “Most companies now spend somewhere between 20 and 25% of their entire infrastructure budget on their IT observability tools.” Your data observability vendor’s board sees this as a preview of what data quality pricing looks like if this market matures the same way. Spending 25% of your infrastructure budget on observability is genuinely alarming.

As one data engineer noted in a public thread on r/dataengineering after a vendor repricing event: “We are experiencing a huge spike in monthly costs following pricing changes.” Another in the same community warned: “It’s prohibitively expensive to cover your entire infrastructure.” These are not isolated complaints. Search any data engineering forum, and you will find the same pattern repeating: a team signs a contract at one price, grows their data estate as good data teams do, and discovers the bill has compounded in ways that were not obvious at signing.

These are not edge cases. They are the predictable outcome of investors who need their portfolio companies to demonstrate rapidly expanding revenue, channeled through pricing models that make expansion the default behavior. The cost of the technology does not set the pricing. It is not set by the value delivered to you. It is set by what a spreadsheet in an investment committee meeting says the company needs to justify its valuation.

When the Funding Stops, So Does Your Vendor

A significant number of companies in the table above will not be able to raise their next funding round. The venture market for data infrastructure has cooled substantially since 2022. Investors who were willing to fund a Series B at a 20x revenue multiple in 2022 are looking for actual profits, or at a minimum, a credible path to them, in 2026. And in 2026, VC money is flowing into a different bubble: AI.

Companies that raised large funding rounds but haven’t reached the revenue level to justify their next valuation will face tough choices. They can attempt to raise money in a down round, which hurts morale, resets option strike prices, and signals trouble. They might try to be acquired, often at a price that eliminates the value of common stockholders, including employees. They could also try to cut costs enough to become profitable, which usually involves significant layoffs. Or they might shut down. None of these options is ideal for the teams that built their data quality programs on top of their products.

If you are currently evaluating a vendor that has raised $50 million or more and has not disclosed anything resembling profitability, you are taking on real business continuity risk. The product you buy today may not exist in its current form in eighteen months. This is not speculation. Look at the list. Kensu is gone. Databand is part of IBM, which has shown little enthusiasm for developing it. Metaplane is inside Datadog. Two acquisitions and one apparent shutdown out of roughly twenty-five significant players in a space that is only a few years old.

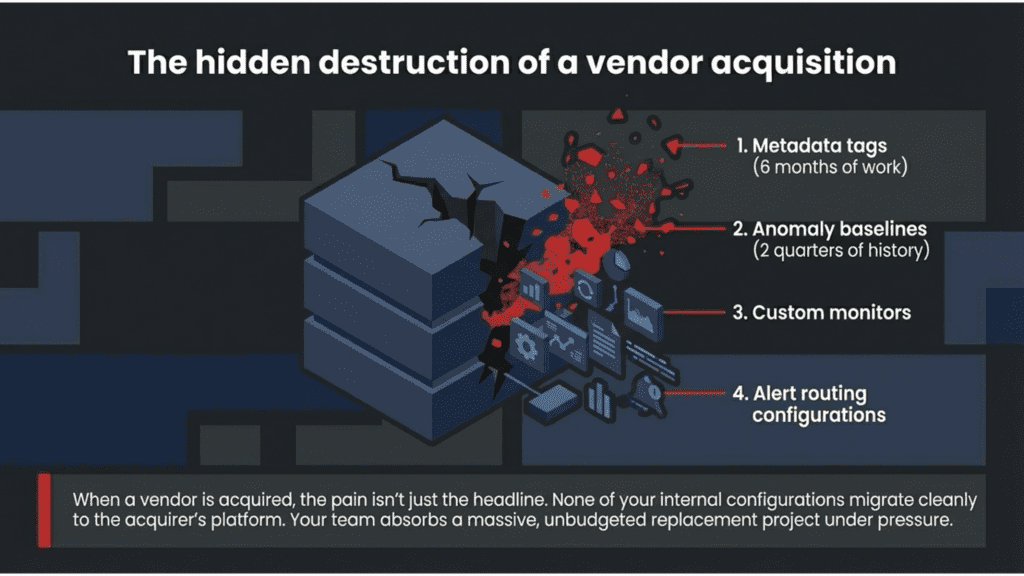

And when an acquisition happens, the pain is not just the headline. It is everything your team built inside that tool. The metadata your engineers tagged over six months. The anomaly baselines that took two quarters of ingestion history to become meaningful. The custom monitors tuned to your specific data. The alert routing your on-call rotation depends on. None of that migrates cleanly to the acquirer’s platform. In most cases, it does not migrate at all. Your team absorbs a replacement project under pressure, on top of everything else they were already doing. That cost never appears in the original contract. It should.

A reasonable counterargument is that the market consolidating to three or four well-capitalized survivors with stable roadmaps and deep integrations could be better for enterprise buyers than twenty fragmented point solutions competing for the same budget. History suggests otherwise. The IT observability market consolidated around Datadog, New Relic, and Dynatrace, and customers are paying more. Consolidation does not produce lower prices. It produces pricing power.

Three Questions to Ask Before You Sign Anything

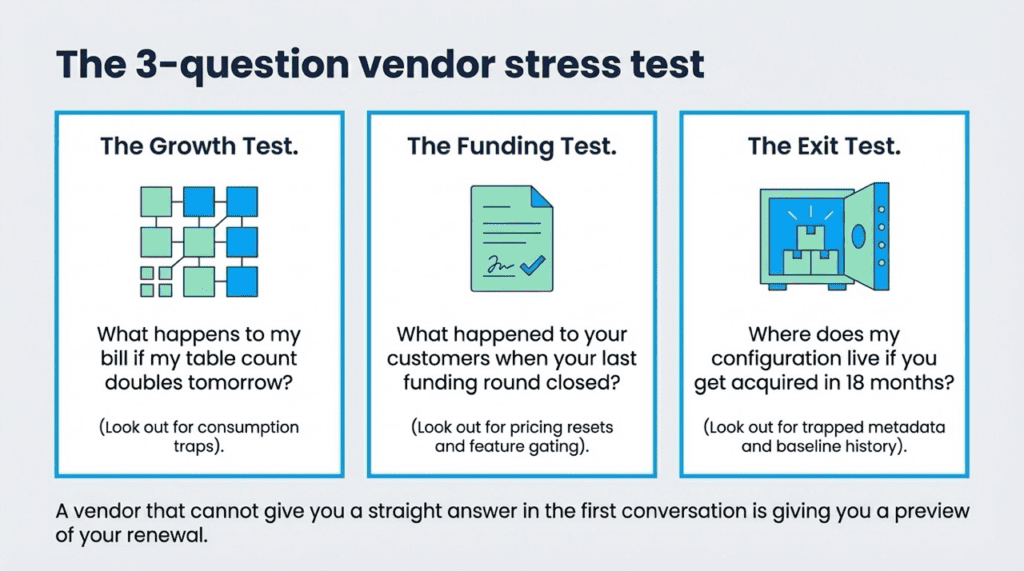

Given everything above, here is a practical framework for evaluating data observability and data quality tools. Three questions cut through most of the noise.

Ask what happens to your bill if you double your table count tomorrow. Per-table and consumption-based pricing models are designed to grow your costs every time you do the right thing. Add more data? Pay more. Run more tests? Pay more. A vendor that cannot give you a straight answer on this in the first sales conversation is telling you something important about how the relationship will go at renewal.

Ask what happened to their customers when the last funding round closed. A new investment round is almost always followed by pricing resets, contract restructuring, and feature gating. If the answer is that nothing changed, ask for that in writing. If the answer is evasive, treat it as a preview.

Ask what your exit path looks like if this vendor is acquired eighteen months from now. What do you own? Where do your configuration, your test history, and your anomaly baselines live? Can you export it? If the honest answer is that a migration would cost your team three months of work, that cost belongs in your total cost of ownership calculation from day one.
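The first and third questions can be turned into rough arithmetic before the sales call. The sketch below assumes strictly linear per-table pricing at the industry-average figure cited earlier ($172,500 per 1,000 tables, or $172.50 per table). It prices a three-month migration at a quarter of a $165,000 salary, the low end of the range cited earlier. Real contracts have tiers, minimums, and discounts that this ignores, and the function names are hypothetical.

```python
PER_TABLE_RATE = 172_500 / 1_000   # assumed linear rate derived from the industry average
ENGINEER_SALARY = 165_000          # low end of the senior data engineer range cited earlier

def annual_bill(tables, rate=PER_TABLE_RATE):
    """Annual cost under a purely linear per-table model (an assumption)."""
    return tables * rate

def three_year_tco(tables, migration_months=3, engineers=1):
    """Three years of license fees plus a one-time exit migration."""
    migration = ENGINEER_SALARY / 12 * migration_months * engineers
    return 3 * annual_bill(tables) + migration

today = annual_bill(600)
doubled = annual_bill(1_200)
print(f"600 tables:   ${today:,.0f} per year")
print(f"1,200 tables: ${doubled:,.0f} per year")
print(f"3-year TCO at 600 tables, including exit: ${three_year_tco(600):,.0f}")
```

Substitute the vendor’s actual quote and your own salary figures. The point is to have the doubled-bill number and the exit cost on paper before you negotiate.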

If you are already in a contract, this section matters more to you than it does to someone evaluating for the first time. Renewal is where you have the most information and the most leverage. You know what the tool actually costs your team in time and frustration, not just in dollars. You know whether the anomaly detection produces alerts that your engineers act on or noise they have learned to ignore. You know what the vendor said during the sales process and whether it matched reality. And you have a credible exit threat, which is the only negotiating position that produces a better price. The three questions above are not just for new evaluations. They are the questions to bring into your renewal conversation.

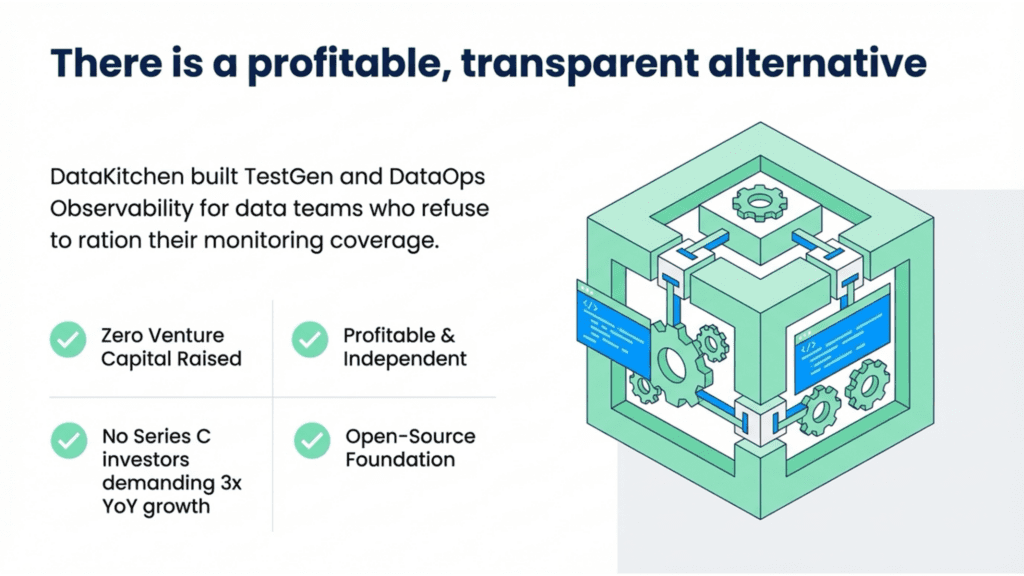

What a Data Observability Tool Looks Like When No One Needs a 10x Return

We are obviously not a neutral party here. We built TestGen because we could not justify the cost of the tools that existed, and because we kept running into data teams who were rationing their monitoring coverage for the same reason. What follows is our answer to the problem we just described. You should evaluate it with the same skepticism you would apply to any vendor.

DataKitchen’s answer is TestGen and DataOps Observability, both open-source. We have raised no venture capital. We have been profitable from day 1. We do not have a Series C to justify.

Our enterprise pricing is $100 per user per connection and $100 per database connection. Those numbers are published on our website. They do not change in your renewal conversation because we do not have an investor relations team telling us we need 3x year-over-year growth in contract value. To put it plainly: your entire team, covering every table across every database you have, should cost no more than one month of a single engineer’s salary. That is the standard we hold ourselves to, and it is the standard you should hold any vendor to. Here is how the capabilities compare across TestGen’s open-source version, the enterprise version, and the typical VC-backed data observability vendor:

The auto-generated tests deserve particular emphasis. One of the primary reasons data engineers do not test more is the overhead of writing tests manually. Writing a test for one table takes time. Writing tests for 500 tables is a multi-week project. TestGen profiles your tables, identifies the meaningful characteristics of your data, and automatically generates a complete test suite. This is not a feature the $150,000-per-year vendors offer because their revenue model depends on you needing to do more, not less.
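The general idea of profiling first and then deriving tests can be illustrated in a few lines. This is not TestGen’s code or API. It is a simplified sketch under our own assumptions, with hypothetical function names and sample values, covering one numeric column and two kinds of candidate test.

```python
def profile_column(values):
    """Summarize one numeric column: observed range and null fraction.

    Assumes at least one non-null value is present.
    """
    present = [v for v in values if v is not None]
    return {
        "min": min(present),
        "max": max(present),
        "null_fraction": 1 - len(present) / len(values),
    }

def generate_tests(column, profile):
    """Turn a profile into candidate test descriptions for human review."""
    tests = [f"{column} between {profile['min']} and {profile['max']}"]
    if profile["null_fraction"] == 0:
        # No nulls were observed, so propose a not-null test.
        tests.append(f"{column} is never null")
    return tests

# Hypothetical sample of a column's values.
order_totals = [19.99, 5.00, 42.50, 12.25]
print(generate_tests("order_total", profile_column(order_totals)))
```

Looping a routine like this over every column of 500 tables is what turns a multi-week manual project into a generated starting point that engineers then review and adjust.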

A fair question is whether a small profitable company is any safer than a 300-person VC-backed one. The honest answer is that the size of our team is irrelevant, since TestGen is open source. If DataKitchen ceased to exist tomorrow, the code would not disappear. Your tests, your configuration, and your baselines live in your environment, not ours. That is the actual answer to the sustainability question, and it is one no VC-backed vendor can give you.

The Market Is Telling You Something. Listen

One billion dollars in venture capital invested in a software category does not make that category more trustworthy. It makes it more expensive and more fragile. The companies that raised that capital are obligated to produce returns for their investors. Those returns will come from customers. The data engineering community deserves tools that are priced based on the cost of the technology and the value it delivers, not on what a venture fund spreadsheet says a company needs to be worth.

None of this is a criticism of the founders building these companies. Starting a company is genuinely hard. We have been at this for 12 years, and we know firsthand that the late nights, stress, and struggles are real for anyone trying to build something from nothing. I hope all these founders succeed. But the nature of VC fund returns and technology markets is what it is. You did not cause them, and you cannot change them. What you can do is make a buying decision that prevents your team from being exposed when those dynamics unfold.

The conference booth size isn’t the signal; the price number on the page is.

Christopher Bergh has been building data tools for 20 years and is CEO and Founder of DataKitchen, a profitable, investor-free company. He has written three books on DataOps and has never taken a dollar of venture capital. TestGen and DataOps Observability are free and open-source at datakitchen.io. Free certification in data observability is available at datakitchen.io.

TLDR Summary:

This article warns data professionals that the billion-dollar surge of venture capital into the data observability market has created an unstable, overpriced ecosystem. The author argues that high investor expectations force vendors to implement predatory, usage-based pricing that often costs more than hiring a full-time engineer. Because the market is oversaturated, many of these companies face a high risk of bankruptcy or disruptive acquisitions, leaving customers with abandoned tools and lost metadata. Furthermore, the text claims that expensive platforms often charge premium rates for basic open-source algorithms while failing to automate the difficult aspects of business logic. To avoid these financial and operational risks, the author advocates for transparently priced or open-source alternatives from profitable, independent providers. Ultimately, the narrative serves as a cautionary guide for evaluating the long-term viability and true cost of data quality and data observability software.

Frequently Asked Questions: The State of the Data Observability Market

Why is $1 billion in VC investment a warning rather than a sign of a healthy market?

Venture capital investing runs on narrative momentum, not market fundamentals. Investors saw a category getting traction and piled in without evaluating whether the market could support twenty-plus well-funded competitors. Historically, 80 to 90 percent of companies in overcapitalized software categories do not survive in their original form. The ones that do survive face relentless pressure to deliver returns for their investors, and that pressure lands on you, the customer, in the form of price increases, contract restructuring, and features that did not exist when you signed.

How much do these tools actually cost, and why does it matter?

The industry average to monitor 1,000 tables is approximately $172,500 per year, which is more than the median annual salary of a senior data engineer. The cost of the technology does not set that price. It is set by what a venture fund spreadsheet says a company needs to justify its valuation. The practical consequence is that teams stop asking whether to monitor everything and start asking which tables they can afford to leave dark, which is the opposite of what data quality requires.

Does genuinely advanced technology justify the high prices?

No. Every vendor on the market runs on the same four open-source algorithms: Facebook’s Prophet, ARIMA, Z-score, and Isolation Forest. Their Python implementations are on GitHub. The billion dollars in VC funding did not produce better algorithms. It produced better sales teams and higher price targets. The mere mention of AI in a vendor’s pitch is not evidence of proprietary technology. It is evidence of a higher target price point, set by investors who need a return.

Why do these pricing models penalize teams for growing?

Because VC-backed companies need to demonstrate predictable, expanding revenue to justify their valuations, they design pricing to grow automatically as your data estate grows. Per-table pricing, credit-based consumption models, and mandatory growth minimums mean that every time your team does the right thing and adds more data, your bill compounds. You are being charged for maturing as a data organization.

What happens to my investment if my vendor gets acquired or shuts down?

Kensu shut down. IBM absorbed Databand. Datadog absorbed Metaplane. When an acquisition happens, the independent roadmap ends, and the work you built inside the tool does not migrate cleanly. The metadata your engineers tagged over six months, the anomaly baselines that took two quarters of ingestion history to become meaningful, the custom monitors tuned to your data, the alert routing your on-call rotation depends on: none of that moves. Your team absorbs an unbudgeted replacement project under pressure. That cost never appears in the original contract.

What three questions should I ask before signing anything?

Ask what happens to your bill if you double your table count tomorrow. Ask what happened to their customers’ pricing when the last funding round closed. Ask what your exit path looks like if they are acquired in eighteen months and whether you can export your configuration, test history, and anomaly baselines. A vendor that cannot answer all three clearly in the first conversation is telling you something important about how the relationship will go at renewal.

Is there a viable alternative to VC-backed vendors?

Yes. DataKitchen’s TestGen and DataOps Observability are open-source and free to start. The enterprise tier is $100 per user per connection, published on our website, and does not change at renewal because we have no investors demanding 3x year-over-year growth. We have been profitable for 12 years without taking a dollar of venture capital. And because TestGen is open-source, your tests, configuration, and baselines live in your environment, not ours. If DataKitchen ceased to exist tomorrow, the code would not disappear. That is the sustainability answer no VC-backed vendor can give you.
